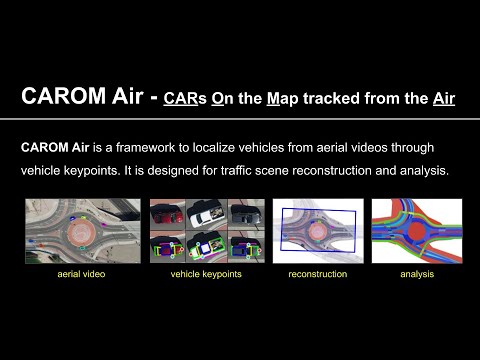

CAROM Air stands for "CARs On the Map tracked from the Air". It is our recent work on tracking and localizing vehicles in aerial videos taken by drones, designed for traffic scene reconstruction and analysis. See the following short demo video. Our research paper will be published at ICRA 2023, and our dataset will be made openly available once the paper is published (sometime in May 2023).

More detailed demo videos for qualitative evaluation are listed below.

- Keypoints and masks

- Tracking evaluation

- Model fitting evaluation

- Localization evaluation

- Track 3a (Vehicle A, seven passes: 0:09, 3:08, 4:50, 5:58, 7:39, 10:34, 14:14)

- Track 3b (Vehicle A, eight passes: 0:00, 2:30, 3:36, 5:01, 6:41, 11:04, 12:08, 13:34)

- Track 3c (Vehicle A, five passes: 0:12, 5:14, 8:56, 10:00, 12:00)

- Track 3d (Vehicle A, four passes: 0:00, 1:30, 4:30, 5:32)

- Track 3e (Vehicle B, four passes: 1:34, 6:14, 10:12, 12:35)

- Track 3f (Vehicle B, three passes: 0:53, 4:10, 6:22)

- Track 3g (Vehicle B, six passes: 1:49, 4:54, 8:17, 10:56, 15:01, 17:30)

- Track 3h (Vehicle B, seven passes: 2:28, 3:50, 7:38, 8:41, 12:47, 14:19, 17:13)

- Track 3i (Vehicle B, four passes: 0:28, 4:14, 6:29, 8:47)

- Dataset demo

- Duo Lu, Eric Eaton, Matt Weg, Wei Wang, Steven Como, Jeffrey Wishart, Hongbin Yu, Yezhou Yang, "CAROM Air - Vehicle Localization and Traffic Scene Reconstruction from Aerial Video", IEEE International Conference on Robotics and Automation (ICRA), 2023.

- Siddharth Das, Prabin Rath, Duo Lu, Tyler Smith, Jeffrey Wishart, Hongbin Yu, "Comparison of Infrastructure- and Onboard Vehicle-Based Sensor Systems in Measuring Safety Metrics", SAE WCX Digital Summit, 2023.

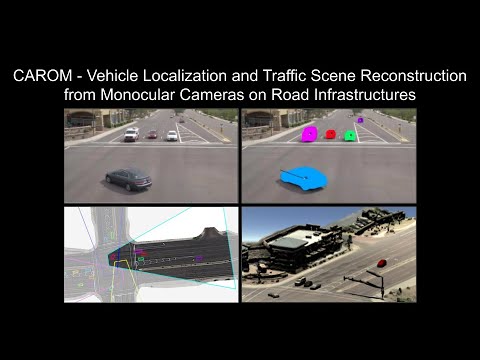

CAROM stands for "CARs On the Map". It is a framework for tracking and localizing vehicles using monocular traffic monitoring cameras on road infrastructure. The tracking results can be stored in files or as records in a database. Using the results, a traffic scene can be reconstructed and replayed on a map. See the following short demo video, a presentation, a preprint version of our ICRA 2021 paper, and a more detailed paper on our infrastructure work.

In collaboration with the Maricopa County Department of Transportation (MCDOT) in Arizona, US, we constructed a small benchmarking dataset containing GPS data, infrastructure-based camera videos, and drone videos to validate the vehicle tracking results. On average, the localization error is approximately 0.8 m within 50 m of the cameras and 1.7 m within 120 m.
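To make the error figures above concrete, here is a minimal sketch of how a range-limited mean localization error could be computed. It is not the evaluation code used for the benchmark. The function name `mean_localization_error`, the 2D metric map frame, and the sample coordinates are all assumptions made for illustration.

```python
import math
from typing import Optional, Sequence, Tuple

# Assumption: positions are (x, y) in metres in a shared local map frame.
Point = Tuple[float, float]


def mean_localization_error(
    estimates: Sequence[Point],
    references: Sequence[Point],
    camera: Point,
    max_range: float,
) -> Optional[float]:
    """Mean Euclidean distance between matched estimate/reference pairs.

    Only pairs whose reference position lies within `max_range` metres of
    the camera are counted. Returns None if no pair qualifies.
    """
    errors = [
        math.dist(est, ref)
        for est, ref in zip(estimates, references)
        if math.dist(ref, camera) <= max_range
    ]
    return sum(errors) / len(errors) if errors else None


if __name__ == "__main__":
    # Synthetic values for illustration only, not taken from the dataset.
    camera = (0.0, 0.0)
    gps_reference = [(10.0, 0.0), (40.0, 0.0), (100.0, 0.0)]
    tracked = [(10.0, 1.0), (41.0, 0.0), (100.0, 4.0)]
    for max_range in (50.0, 120.0):
        error = mean_localization_error(tracked, gps_reference, camera, max_range)
        print(f"within {max_range:.0f} m: {error}")
```

The sketch assumes the estimates and the GPS references are already time-aligned and matched one to one, which a real evaluation would have to establish first.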

More detailed demo videos for qualitative evaluation are listed below.

- Demo videos at site 1A (Eastbound of the intersection, Anthem, AZ, ~10 minutes)

- Demo videos at site 1B (Northbound of the intersection, Anthem, AZ, ~10 minutes)

- Demo videos at site 1C (Westbound of the intersection, Anthem, AZ, ~10 minutes)

- Demo videos at site 1D (Southbound of the intersection, Anthem, AZ, ~10 minutes)

- Demo videos at site 2 (East Osborn Road, Scottsdale, AZ, ~10 minutes)

- Validation with aerial videos at site 2 (East Osborn Road, Scottsdale, AZ)

- Demo on four view fusion at site 1 (Detailed slow motion, Anthem, AZ)

- Demo on speed estimation at site 1 (Detailed slow motion, Anthem, AZ)

- Install the CUDA v11 toolkit

- Install Anaconda (link)

- Install Intel Distribution for Python following the instructions at link

- Activate Intel Distribution for Python (idp): `conda activate idp`

- Install the required Python dependencies: `pip3 install -r requirements.txt`

- Add `export PYTHONPATH=$PYTHONPATH:/project_root` to `~/.bashrc`

NOTE: Make sure you install an opencv-python version that was compiled with FFmpeg support so that it can read .mpg video files.
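One way to check the FFmpeg requirement is to inspect the report returned by `cv2.getBuildInformation()`. The sketch below is not part of the project. The helper name `has_ffmpeg` is hypothetical, and it assumes the report contains a line of the form `FFMPEG: YES`, as current OpenCV builds print under "Video I/O".

```python
import re


def has_ffmpeg(build_info: str) -> bool:
    """Return True if an OpenCV build report lists FFMPEG as enabled."""
    # Assumption: the report has a line such as "    FFMPEG:      YES".
    pattern = r"^\s*FFMPEG:\s*YES"
    return re.search(pattern, build_info, flags=re.MULTILINE) is not None


if __name__ == "__main__":
    try:
        import cv2
    except ImportError:
        print("opencv-python is not installed in this environment")
    else:
        print("FFmpeg support:", has_ffmpeg(cv2.getBuildInformation()))
```

If the check prints `False`, reading .mpg files with `cv2.VideoCapture` is likely to fail, and a different opencv-python build would be needed.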

- Download calibration files

- The project must have the following folder structure:

      tracking_3d
      │   README.md
      │   requirements.txt
      │   tracking.py
      │   ...
      │   retrained_net.pth
      │
      └───data
      │   │   video
      │   │   calibration_2d

- Change the folder path in the `main` function within the `tracking.py` file to point to the data folder of the project

- The tracking algorithm can be used to either extract or replay trajectories from the video. To extract tracking data, set the flag `replay=False` within the `test_tracker()` method. To replay tracking data, set the flag `replay=True` within the `test_tracker()` method.

- Choose the format of the extracted data by selecting `npy` or `csv` within the `test_tracker()` method

- Run `python3 tracking.py`
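Before running the tracker, it can help to confirm that the project matches the folder structure listed above. The following is a minimal sketch, not part of the repository. The helper name `missing_entries` is hypothetical, and it assumes that `video` and `calibration_2d` are directories under `data`. Files elided by `...` in the listing are not checked.

```python
from pathlib import Path
from typing import List, Union

# Entries taken from the folder structure in this README.
REQUIRED_FILES = ["tracking.py", "requirements.txt", "retrained_net.pth"]
# Assumption: these two entries under data/ are directories.
REQUIRED_DIRS = ["data/video", "data/calibration_2d"]


def missing_entries(project_root: Union[str, Path]) -> List[str]:
    """List the required files and directories absent from project_root."""
    root = Path(project_root)
    missing = [name for name in REQUIRED_FILES if not (root / name).is_file()]
    missing += [name for name in REQUIRED_DIRS if not (root / name).is_dir()]
    return missing


if __name__ == "__main__":
    # Run from the project root (tracking_3d in the listing above).
    missing = missing_entries(Path.cwd())
    if missing:
        print("Missing entries:", ", ".join(missing))
    else:
        print("Folder structure looks complete.")
```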

- Duo Lu, Varun C Jammula, Steven Como, Jeffrey Wishart, Yan Chen, Yezhou Yang, "CAROM - Vehicle Localization and Traffic Scene Reconstruction from Monocular Cameras on Road Infrastructures", IEEE International Conference on Robotics and Automation (ICRA), 2021.

- Niraj Altekar, Steven Como, Duo Lu, Jeffrey Wishart, Donald Bruyere, Faisal Saleem, K Larry Head, "Infrastructure-Based Sensor Data Capture Systems for Measurement of Operational Safety Assessment (OSA) Metrics", SAE WCX Digital Summit, 2021.

- Anshuman Srinivasan, Yoga Mahartayasa, Varun Chandra Jammula, Duo Lu, Steven Como, Jeffrey Wishart, Maria Elli, Yezhou Yang, Hongbin Yu, "Infrastructure-based LiDAR Monitoring for Assessing Automated Driving Safety", SAE WCX Digital Summit, 2022.

- Jeffrey Wishart, Yan Chen, Steven Como, Narayanan Kidambi, Duo Lu, Yezhou Yang, "Fundamentals of Connected and Automated Vehicles", SAE International, 2022.