Multi-view Neural Human Rendering (NHR) [Paper] [Project Page]

PyTorch implementation of NHR.

Multi-view Neural Human Rendering

Minye Wu, Yuehao Wang, Qiang Hu, Jingyi Yu.

In CVPR 2020.

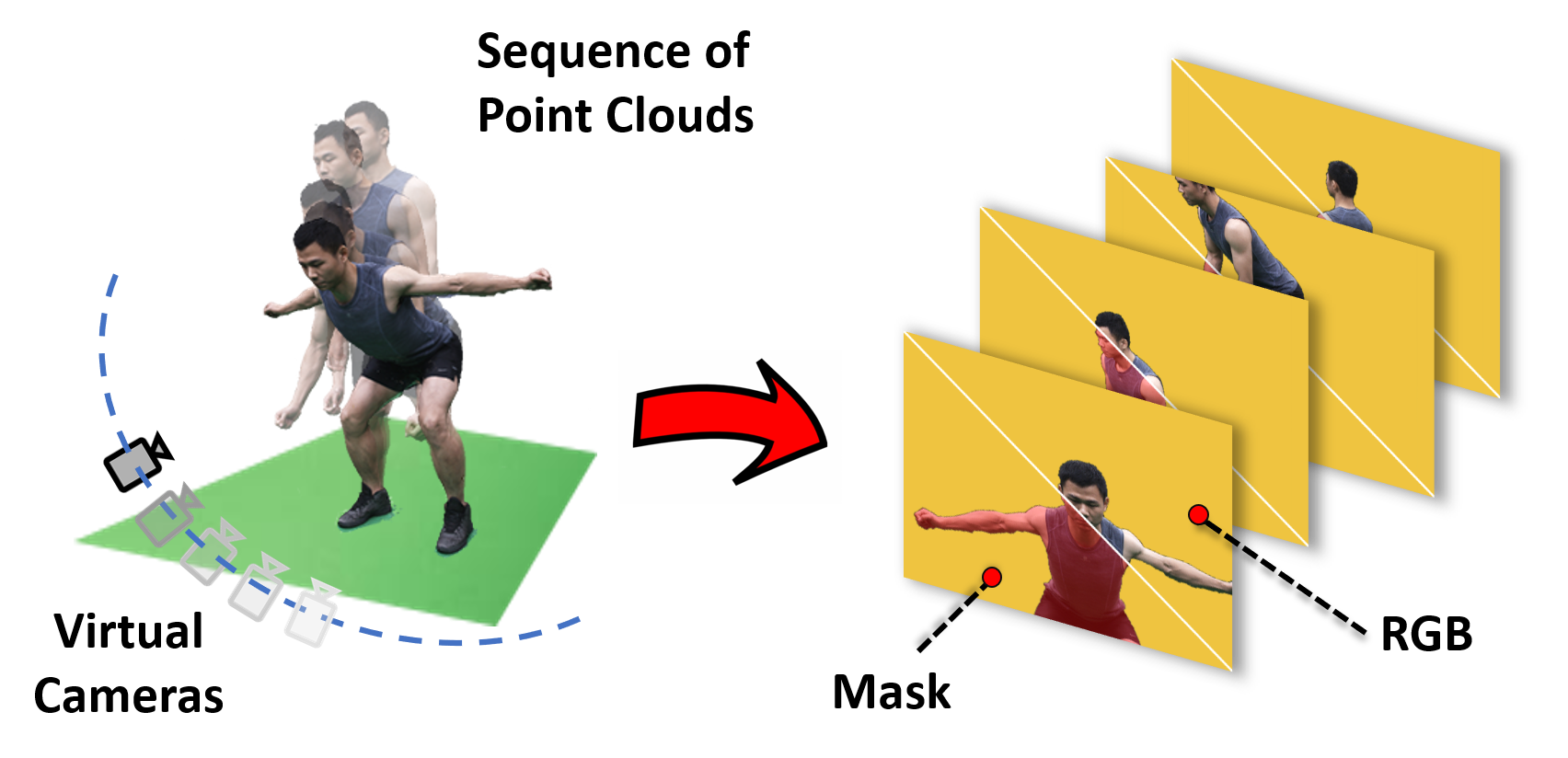

We present an end-to-end Neural Human Renderer (NHR) for dynamic human captures in a multi-view setting. NHR adopts PointNet++ for feature extraction (FE) to enable robust 3D correspondence matching on low-quality, dynamic 3D reconstructions. To render new views, we map 3D features onto the target camera as a 2D feature map and employ an anti-aliased CNN to handle holes and noise. Newly synthesized views from NHR can further be used to construct visual hulls that handle textureless and/or dark regions such as black clothing. Comprehensive experiments show that NHR significantly outperforms state-of-the-art neural and image-based rendering techniques, especially on fine details such as hands, hair, noses, and feet.
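To make "map 3D features onto the target camera as a 2D feature map" concrete, here is a minimal NumPy sketch of z-buffered point splatting: every point is projected with a pinhole camera and each pixel keeps the feature of the nearest point, leaving empty pixels as the holes a CNN has to fill. This is a simplified stand-in written under our own assumptions (world-to-camera rotation `R` and translation `t`, a nearest-point rule, and the hypothetical function name `splat_features`); it is not the PCPR implementation used by NHR.

```python
import numpy as np


def splat_features(points, feats, K, R, t, height, width):
    """Rasterize per-point features into an (H, W, C) map using a z-buffer.

    points: (N, 3) world coordinates; feats: (N, C) per-point features.
    K: (3, 3) intrinsics; R: (3, 3), t: (3,) world-to-camera extrinsics (assumed convention).
    Returns (feature_map, depth); pixels hit by no point keep feature 0 and depth inf.
    """
    cam = points @ R.T + t                 # (N, 3) points in camera coordinates
    z = cam[:, 2]
    front = z > 1e-6                       # drop points on or behind the image plane
    cam, z, feats = cam[front], z[front], feats[front]

    uvw = cam @ K.T                        # homogeneous pixel coordinates
    u = np.floor(uvw[:, 0] / z).astype(int)
    v = np.floor(uvw[:, 1] / z).astype(int)
    inside = (u >= 0) & (u < width) & (v >= 0) & (v < height)
    u, v, z, feats = u[inside], v[inside], z[inside], feats[inside]

    fmap = np.zeros((height, width, feats.shape[1]), dtype=feats.dtype)
    depth = np.full((height, width), np.inf)
    # Write points from far to near, so the nearest point on each pixel is written last and wins.
    for i in np.argsort(-z):
        fmap[v[i], u[i]] = feats[i]
        depth[v[i], u[i]] = z[i]
    return fmap, depth
```

In NHR the per-point features would come from PointNet++ and the rasterization runs on the GPU; the Python loop here favors readability over speed.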

This work is licensed under a Creative Commons Attribution-NonCommercial-ShareAlike 4.0 International License.

All material is made available under Creative Commons BY-NC-SA 4.0 license. You can use, redistribute, and adapt the material for non-commercial purposes, as long as you give appropriate credit by citing our paper and indicating any changes that you've made.

The code structure follows the PyTorch-Project-Template guide; refer to it for the purpose of each folder.

Dependencies:

- python 3.6
- pytorch>=1.2
- torchvision
- opencv
- ignite==0.2.0
- yacs
- Antialiased CNNs
- Pointnet2.PyTorch
- apex
- PCPR

1. Dataset preparation

Prepare a data folder with the following structure:

.
├── img
│   └── %d - frame number, starting from 0
│       ├── img_%04d.jpg - undistorted RGB image of each view; view numbers start from 0
│       └── mask
│           └── img_%04d.jpg - foreground mask of the corresponding view; view numbers start from 0
│
├── pointclouds
│   └── frame%d.npy - point cloud of each frame: an N x 6 NumPy array, where N is the number of points and each row is "x y z r g b"; frame numbers start from 1
│
├── CamPose.inf - camera extrinsics: each row stores one camera's 3x4 [R T] matrix column by column, in the order column 3, column 1, column 2, column 4 (12 values), where R * X^{camera} + T = X^{world}
│
└── Intrinsic.inf - camera intrinsics: each entry has the form "idx \n fx 0 cx \n 0 fy cy \n 0 0 1 \n \n", i.e. the view index (starting from 0), the three rows of K, and a blank line
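As a reading aid for the data formats above, here is a minimal NumPy sketch that parses CamPose.inf and Intrinsic.inf, projects a world point into a view, and splits a point cloud into positions and colors. The helper names (`parse_campose`, `parse_intrinsics`, `project_point`, `split_point_cloud`) are hypothetical, and the column ordering is our reading of the CamPose.inf description (12 values per row: column 3, then columns 1, 2 and 4 of [R T]); check it against the repository's own data loader before relying on it.

```python
from typing import Dict, Tuple

import numpy as np


def parse_campose(text: str) -> np.ndarray:
    """Parse CamPose.inf text into (N, 4, 4) camera-to-world matrices.

    Assumed row layout (12 floats): column 3 of [R T], then columns 1, 2 and 4,
    with R @ X_camera + T = X_world.
    """
    rows = [[float(v) for v in line.split()] for line in text.splitlines() if line.strip()]
    if any(len(r) != 12 for r in rows):
        raise ValueError("each CamPose.inf row must hold 12 values")
    poses = np.array(rows, dtype=np.float64).reshape(-1, 12)
    mats = np.zeros((poses.shape[0], 4, 4))
    mats[:, :3, 2] = poses[:, 0:3]   # third column of R
    mats[:, :3, 0] = poses[:, 3:6]   # first column of R
    mats[:, :3, 1] = poses[:, 6:9]   # second column of R
    mats[:, :3, 3] = poses[:, 9:12]  # translation T
    mats[:, 3, 3] = 1.0
    return mats


def parse_intrinsics(text: str) -> Dict[int, np.ndarray]:
    """Parse Intrinsic.inf text into {view index: 3x3 K}.

    Each entry is an integer index followed by the 9 entries of K;
    splitting on whitespace makes the blank separator lines irrelevant.
    """
    tokens = text.split()
    if len(tokens) % 10 != 0:
        raise ValueError("expected blocks of 1 index + 9 matrix entries")
    Ks = {}
    for start in range(0, len(tokens), 10):
        idx = int(tokens[start])
        Ks[idx] = np.array([float(t) for t in tokens[start + 1:start + 10]]).reshape(3, 3)
    return Ks


def project_point(x_world: np.ndarray, cam_to_world: np.ndarray, K: np.ndarray) -> np.ndarray:
    """Project a world point to pixel coordinates (u, v) by inverting R X_c + T = X_w."""
    R, T = cam_to_world[:3, :3], cam_to_world[:3, 3]
    x_cam = R.T @ (x_world - T)      # R is a rotation, so its inverse is its transpose
    uvw = K @ x_cam
    return uvw[:2] / uvw[2]


def split_point_cloud(points: np.ndarray) -> Tuple[np.ndarray, np.ndarray]:
    """Split an (N, 6) "x y z r g b" array into (N, 3) positions and (N, 3) colors."""
    if points.ndim != 2 or points.shape[1] != 6:
        raise ValueError(f"expected an (N, 6) array, got {points.shape}")
    return points[:, :3], points[:, 3:]
```

For example, `parse_campose(pathlib.Path("CamPose.inf").read_text())` yields one 4x4 camera-to-world matrix per row, and `split_point_cloud(np.load("pointclouds/frame1.npy"))` separates positions from colors; the paths are placeholders relative to your data folder.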

2. Network Training

- Modify the configuration file config.yml.

- Start training:

cd tools && python train_net.py <gpu id>

3. Network Fine-tuning

- Run:

cd tools && python finetune.py <gpu id> <path to checkpoint> <epoch to resume from>

4. Rendering

- See tools/render.ipynb.

The datasets are now released for non-commercial use.

Please see our project page.

We now also provide code for converting camera parameters from Metashape.

@inproceedings{wu2020multi,
  title={Multi-View Neural Human Rendering},
  author={Wu, Minye and Wang, Yuehao and Hu, Qiang and Yu, Jingyi},
  booktitle={Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition},
  pages={1682--1691},
  year={2020}
}