(Warning: the version shown in the video above is outdated, but it does give an idea of the workflow.)

This is a forked version of the AUTOMATIC1111/stable-diffusion-webui project with some additional tweaks for my AI Tools to work.

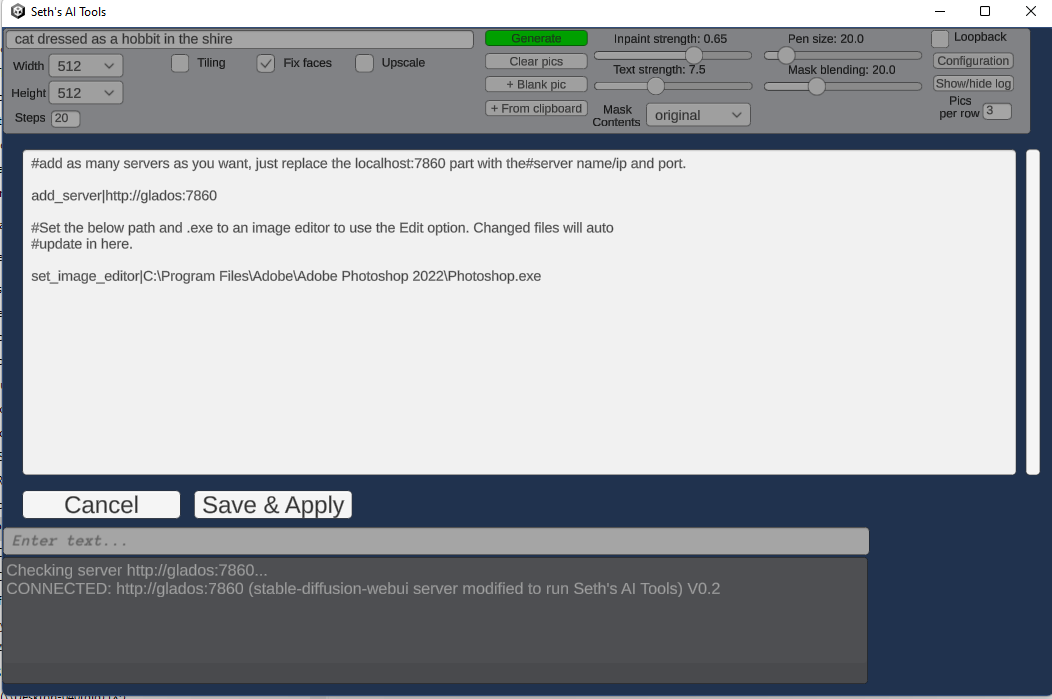

To use my native Unity-based front-end Seth's AI Tools, you need this running somewhere.

- My legacy API (txt2img, img2img, interrogate, background removal, on-demand NSFW checks) can be used simultaneously with the normal web interface and the new, partially done official API feature

- Just want the legacy api and don't care about my front end? Here's a notebook showing how to use it directly

- Also added a notebook showing how to use the new unfinished API here
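As a rough illustration of the official API route only (the legacy API is not shown here), the sketch below builds a txt2img request and decodes the reply. It assumes the upstream stable-diffusion-webui endpoint `/sdapi/v1/txt2img` and its `images` list of base64 strings; this fork may differ, and the helper names `build_txt2img_request` and `decode_images` are made up for the example.

```python
import base64
import json
import urllib.request


def build_txt2img_request(base_url, prompt, steps=20):
    """Build (but do not send) a POST request for the assumed txt2img endpoint."""
    payload = {"prompt": prompt, "steps": steps}
    return urllib.request.Request(
        url=base_url.rstrip("/") + "/sdapi/v1/txt2img",  # assumed upstream path
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def decode_images(response_body):
    """Return raw image bytes for each base64 string in the 'images' list."""
    images = json.loads(response_body).get("images", [])
    # Tolerate an optional "data:image/png;base64," prefix before the payload.
    return [base64.b64decode(item.rsplit(",", 1)[-1]) for item in images]


# Sending the request needs a running server started with --api, for example:
# with urllib.request.urlopen(build_txt2img_request("http://localhost:7860", "a red fox")) as resp:
#     png_bytes = decode_images(resp.read())[0]
```

Keeping request building separate from sending makes it easy to inspect the payload before pointing it at a real server.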

- It's not a web app, it's a native .exe

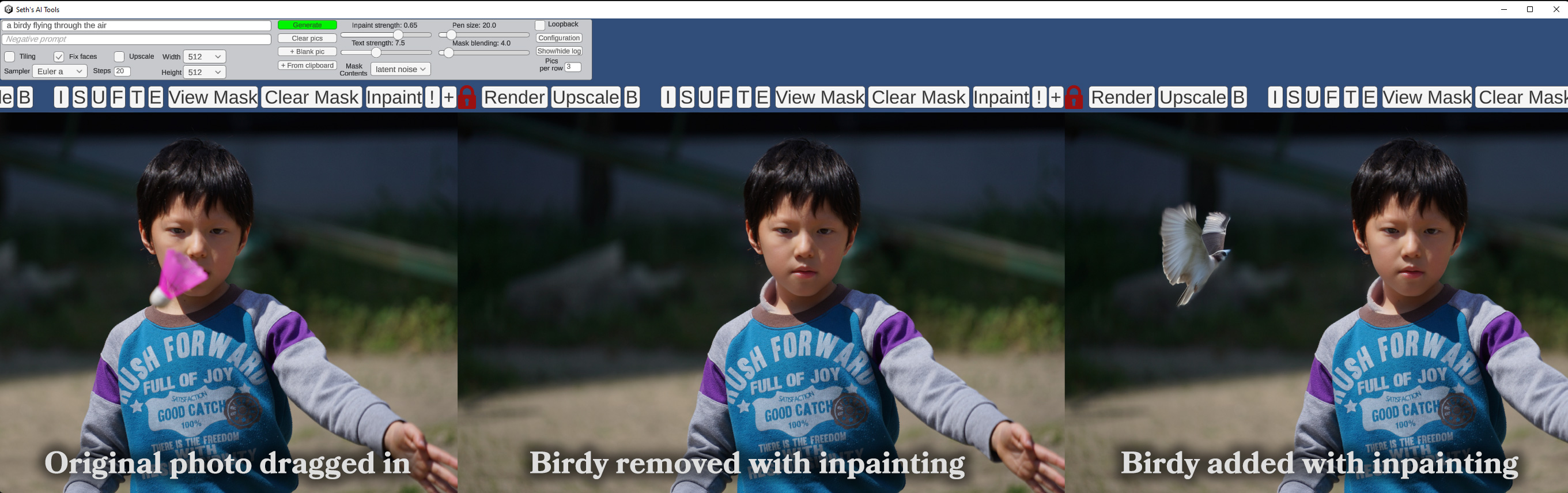

- Photoshop/image editor integration with live update

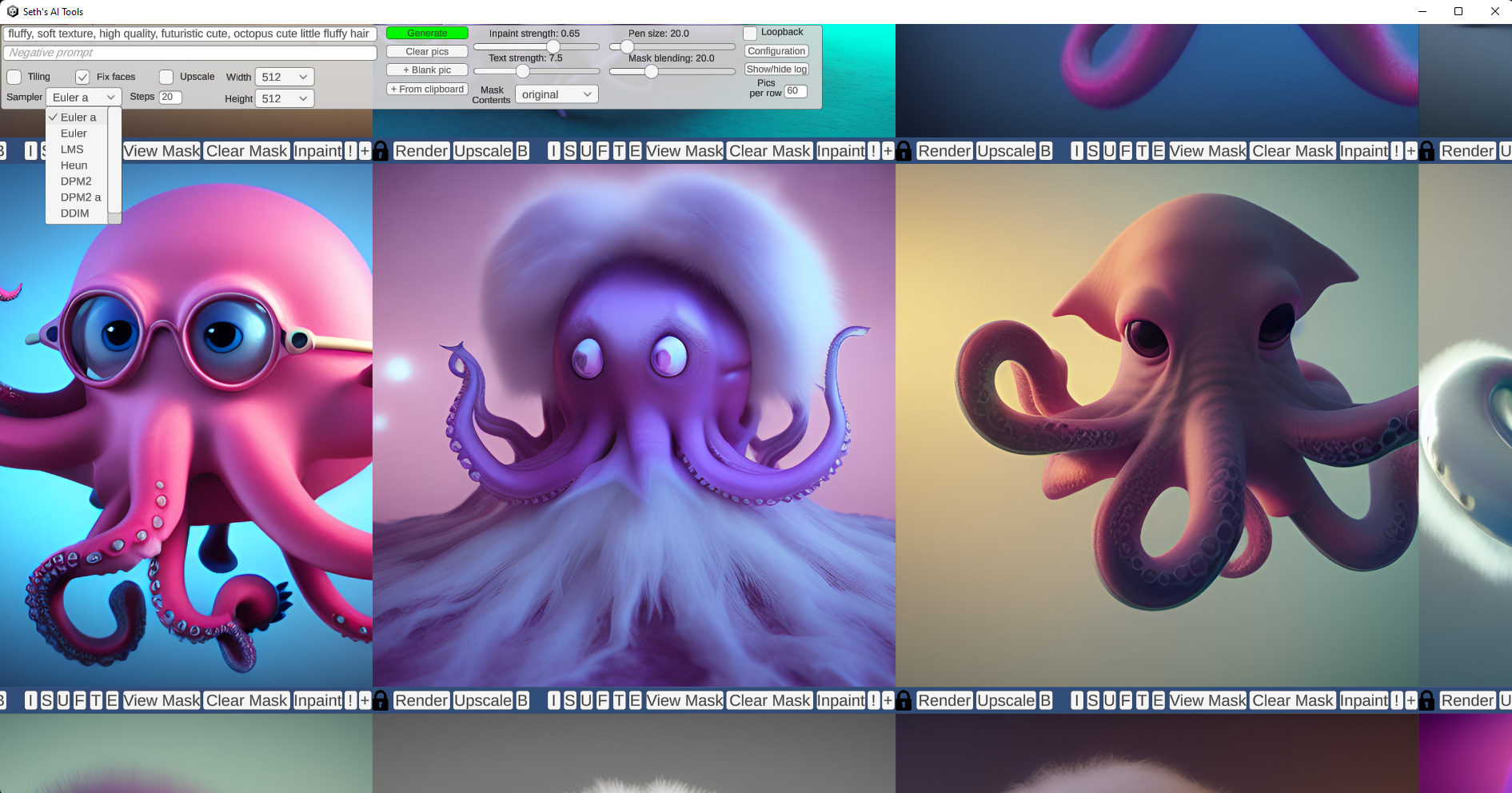

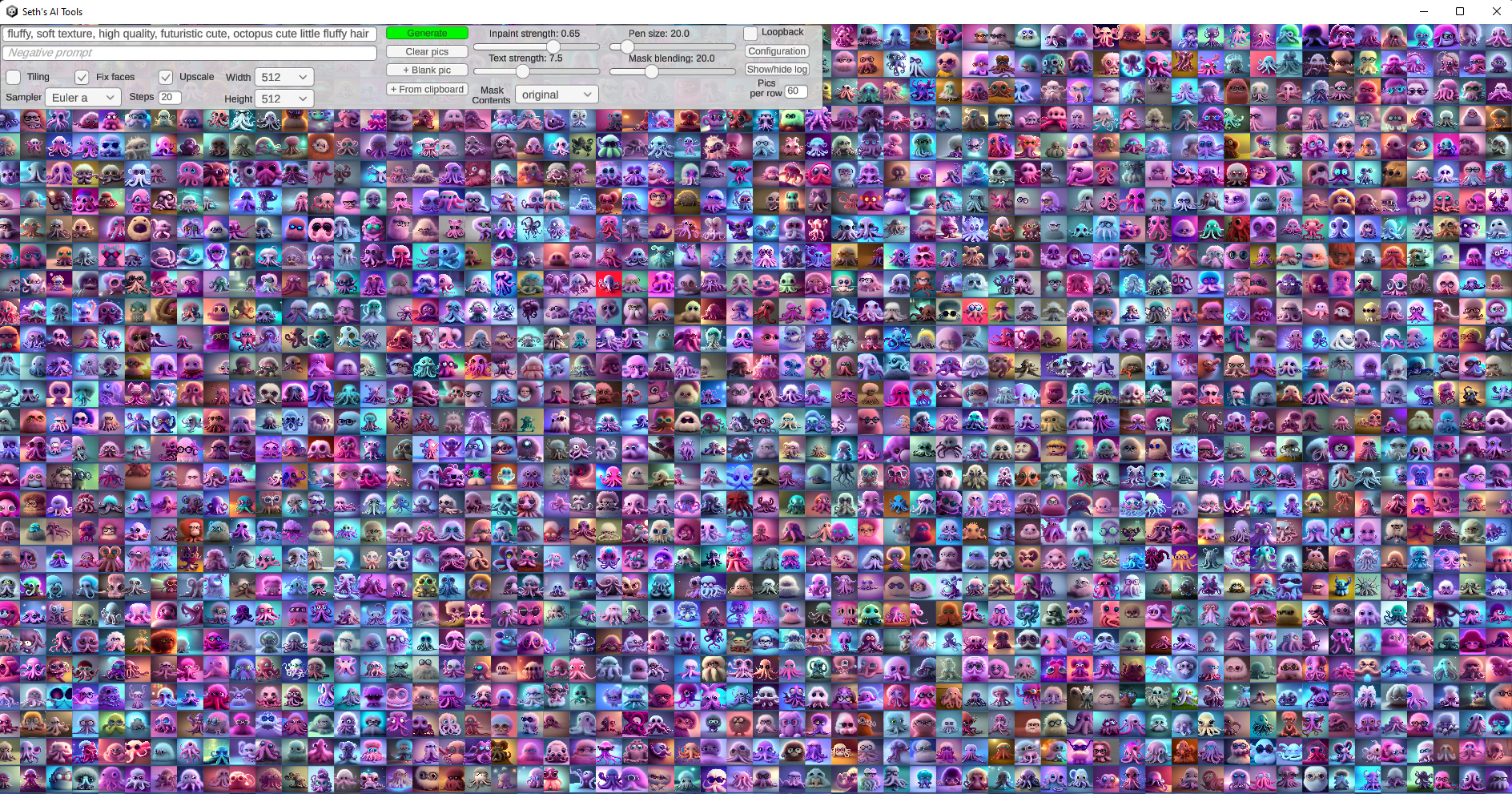

- text to image, inpainting, image interrogation, face fixing, upscaling, tiled texture generation with preview, nsfw options, alpha mask subject isolation (background removal)

- Adjustable inpaint size tool, useful for outpainting/mixing too

- Drag and drop images in as well as paste images from windows clipboard

- Pan/zoom with thousands of images on the screen

- Mask painting with controllable brush size

- Can utilize multiple servers allowing seamless use of all remote GPUs for ultra fast generation

- All open source, use the Unity game engine and C# to do stuff with AI art

- Neat workflow that allows evolving images with loopback while live-selecting the best alternatives to shape the image in real-time

Note: This repository was deleted and replaced with the AUTOMATIC1111/stable-diffusion-webui fork on Sept 19th 2022. The fork has completely replaced the original AI Tools backend server.

- Added background removal (creates an alpha mask around the subject with ai)

- Versioned to 0.27 (requires client 0.49 to use latest features)

- Note: Now requires Python 3.9+. I got tired of patching around auto1111 changes to support earlier versions; they may fix it later though. To upgrade Python in a conda env, run "conda uninstall python" and then "conda install python=3.9"
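A quick way to confirm an environment satisfies the 3.9+ note is a small version check. This is only a sketch; the helper name `meets_minimum` is made up for the example, and the minimum is taken from the note above.

```python
import sys

# Minimum version taken from the note above (Python 3.9+).
MIN_VERSION = (3, 9)


def meets_minimum(version_info=sys.version_info, minimum=MIN_VERSION):
    """Return True if the (major, minor) pair is at least the minimum."""
    return tuple(version_info[:2]) >= tuple(minimum)


if not meets_minimum():
    print("Python %d.%d is too old; 3.9 or newer is required." % sys.version_info[:2])
```

Run it with the same interpreter (or conda env) that will launch the server, since that is the version that matters.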

Installation and Running (modified from stable-diffusion-webui docs)

Make sure the required dependencies are met and follow the instructions available for both NVidia (recommended) and AMD GPUs.

- Install Python 3.10.6, checking "Add Python to PATH"

- Install git.

- Download the aitools_server repository, for example by running

git clone https://github.com/SethRobinson/aitools_server.git

- Place `model.ckpt` in the `models` directory (see dependencies for where to get it).

- Run `webui-user.bat` from Windows Explorer as a normal, non-administrator user.

- Install the dependencies (note: requires Python 3.9+!):

# Debian-based:

sudo apt install wget git python3 python3-venv

# Red Hat-based:

sudo dnf install wget git python3

# Arch-based:

sudo pacman -S wget git python3

- To install in `/home/$(whoami)/aitools_server/`, run:

bash <(wget -qO- https://raw.githubusercontent.com/SethRobinson/aitools_server/master/webui.sh)

- Place `model.ckpt` (or better, use `sd-v1.5-inpainting.ckpt`) in the base aitools_server directory (see dependencies for where to get it).

- Run the server from shell with:

python launch.py --listen --port 7860 --api

Don't have a strong enough GPU or want to give it a quick test run without hassle? No problem, use this Colab notebook. (Works fine on the free tier.)

To update an existing install, go to its directory (probably aitools_server) in a shell or command prompt and type:

git pull

Verify the server works by visiting it with a browser. You should be able to generate and paint images via the default Gradio web interface. Now you're ready to use the native client.

Note: The first time you use the server, it may appear that nothing is happening. Look at the server window/shell; it's probably downloading a bunch of stuff for each new feature you use. This only happens the first time!
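Besides opening the page in a browser, a script can confirm that something is listening on the server port. The sketch below only tests TCP reachability, not that the web UI or API is healthy. It assumes the default host and port used in this README (localhost, 7860), and `is_port_open` is a name made up for the example.

```python
import socket


def is_port_open(host="localhost", port=7860, timeout=2.0):
    """Return True if a TCP connection to host:port succeeds within timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and unresolvable hosts.
        return False


if is_port_open():
    print("Something is listening on localhost:7860")
else:
    print("Nothing reachable on localhost:7860 yet")
```

If the port is closed while the server window is still downloading models, waiting and retrying is usually the right move.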

- Download the Client (Windows, 22 MB) (or get the Unity source)

- Unzip somewhere and run aitools_client.exe

The client should start up. If you click "Generate", images should start being made. By default it tries to find the server at localhost on port 7860. If it's somewhere else, you need to click "Configure" and edit/add server info. You can add/remove multiple servers on the fly while using the app. (All will be utilized simultaneously by the app.)

You can run multiple instances of the server from the same install.

Start one instance:

CUDA_VISIBLE_DEVICES=0 python launch.py --listen --port 7860 --api

Then from another shell start another specifying a different GPU and port:

CUDA_VISIBLE_DEVICES=1 python launch.py --listen --port 7861 --api

Then on the client, click Configure and add an add_server command for each server.
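With more than two GPUs, the per-instance commands follow a simple pattern: one GPU index and one port per server. The sketch below generates those command lines; it assumes ports are assigned sequentially from 7860, and the helper names `build_launch_plan` and `as_shell_line` are made up for the example.

```python
def build_launch_plan(gpu_ids, base_port=7860):
    """Pair each GPU id with its own port and return (env, argv) tuples."""
    plan = []
    for offset, gpu_id in enumerate(gpu_ids):
        # CUDA_VISIBLE_DEVICES restricts the process to a single GPU.
        env = {"CUDA_VISIBLE_DEVICES": str(gpu_id)}
        argv = ["python", "launch.py", "--listen",
                "--port", str(base_port + offset), "--api"]
        plan.append((env, argv))
    return plan


def as_shell_line(env, argv):
    """Render one plan entry as the shell command shown above."""
    prefix = " ".join(f"{key}={value}" for key, value in env.items())
    return f"{prefix} {' '.join(argv)}"


for env, argv in build_launch_plan([0, 1]):
    print(as_shell_line(env, argv))
```

To start the processes from Python instead of printing them, each entry could be passed to `subprocess.Popen(argv, env={**os.environ, **env})` from the install directory.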

- Seth's AI Tools created by Seth A. Robinson (seth@rtsoft.com) twitter: @rtsoft - Codedojo, Seth's blog

- Highly Accurate Dichotomous Image Segmentation (Xuebin Qin and Hang Dai and Xiaobin Hu and Deng-Ping Fan and Ling Shao and Luc Van Gool)

- The original stable-diffusion-webui project the server portion is forked from

- Stable Diffusion - https://github.com/CompVis/stable-diffusion, https://github.com/CompVis/taming-transformers

- k-diffusion - https://github.com/crowsonkb/k-diffusion.git

- GFPGAN - https://github.com/TencentARC/GFPGAN.git

- CodeFormer - https://github.com/sczhou/CodeFormer

- ESRGAN - https://github.com/xinntao/ESRGAN

- SwinIR - https://github.com/JingyunLiang/SwinIR

- Swin2SR - https://github.com/mv-lab/swin2sr

- LDSR - https://github.com/Hafiidz/latent-diffusion

- Ideas for optimizations - https://github.com/basujindal/stable-diffusion

- Cross Attention layer optimization - Doggettx - https://github.com/Doggettx/stable-diffusion, original idea for prompt editing.

- Cross Attention layer optimization - InvokeAI, lstein - https://github.com/invoke-ai/InvokeAI (originally http://github.com/lstein/stable-diffusion)

- Textual Inversion - Rinon Gal - https://github.com/rinongal/textual_inversion (we're not using his code, but we are using his ideas).

- Idea for SD upscale - https://github.com/jquesnelle/txt2imghd

- Noise generation for outpainting mk2 - https://github.com/parlance-zz/g-diffuser-bot

- CLIP interrogator idea and borrowing some code - https://github.com/pharmapsychotic/clip-interrogator

- Idea for Composable Diffusion - https://github.com/energy-based-model/Compositional-Visual-Generation-with-Composable-Diffusion-Models-PyTorch

- xformers - https://github.com/facebookresearch/xformers

- DeepDanbooru - interrogator for anime diffusers https://github.com/KichangKim/DeepDanbooru

- Security advice - RyotaK

- Initial Gradio script - posted on 4chan by an Anonymous user. Thank you Anonymous user.

- (You)