This project focuses on end-to-end deep learning for self-driving cars. It uses raw image data, a convolutional neural network, and an autoencoder for autonomous driving in the game Need For Speed Most Wanted (2005) v1.3.

Version 2.0

- Updated from tflearn to the Keras API on top of TensorFlow 2.0.

- Updated from AlexNet to a new architecture.

- The minimap is now used as an additional input, along with the road images.

python -m pip install --user --requirement requirements.txt

All required modules (except the built-ins) are listed below.

opencv-python

numpy

psutil

pandas

scikit-learn

tensorflow

matplotlib

Visualizing Region of Interest - to make sure that we are capturing the areas that we want and nothing extra.

- Visualize screen can be used to see the area of the road that is being captured when recording training data.

- Visualize map can be used to see the area of the minimap, located in the bottom-left corner of the screen, that is captured when recording training data.

Getting Training Data - capturing raw frames along with player's inputs.

- Get data is used to capture the ROI found in step 1. Each observation stores both the road frame and the minimap frame as features, along with the player's input as the label.

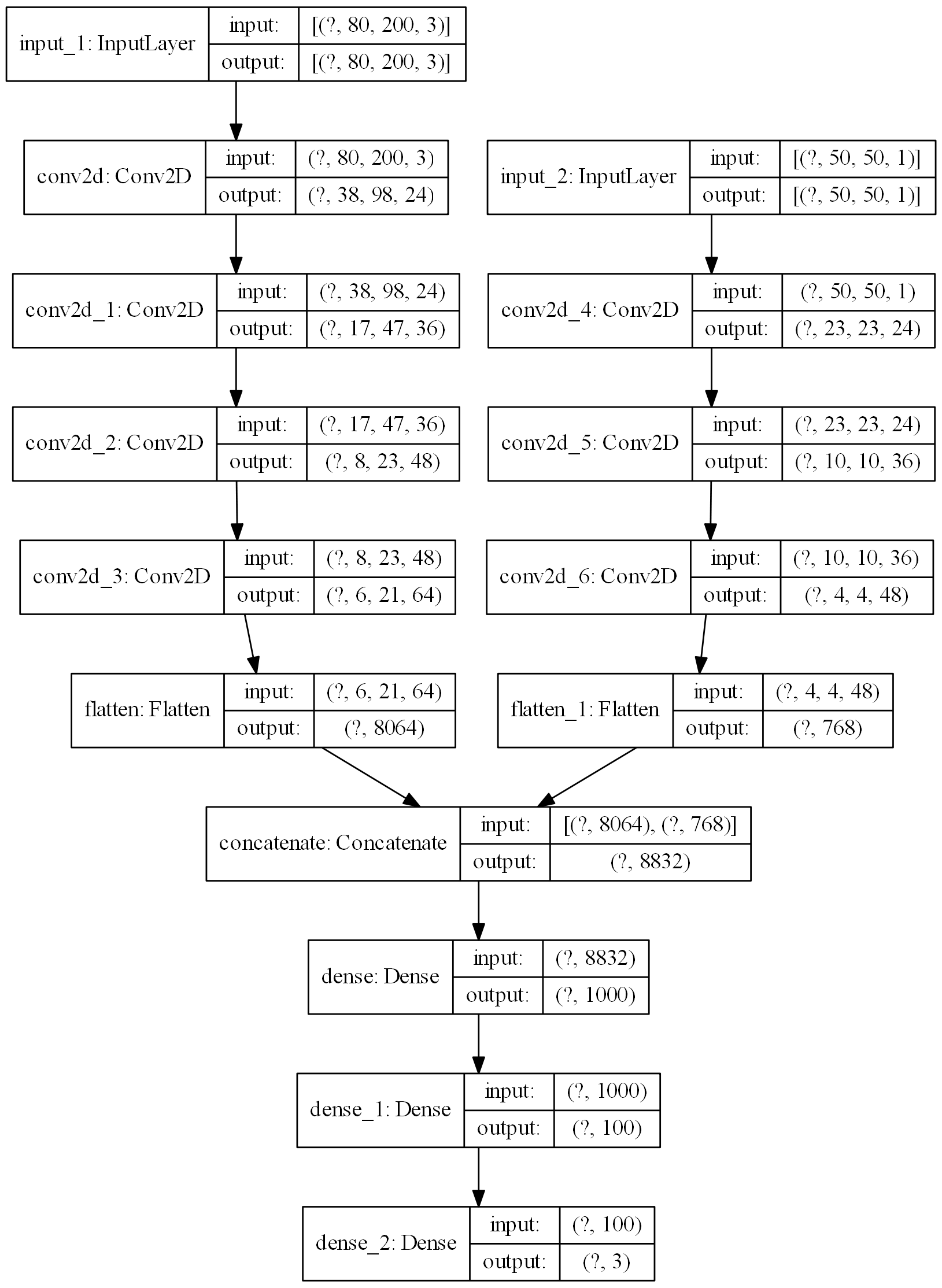

- The captured road frame is resized to (80, 200, 3)

- The captured minimap frame is resized to (50, 50, 1)

Balancing the data - The raw data is balanced to avoid bias.

- Balance data removes the unwanted bias in the training data.

- Most observations in the raw data are labeled forward, with only a few labeled left or right.

- We therefore discard the excess observations labeled forward. Keep in mind that this throws away a lot of data.
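The idea of discarding excess forward observations can be sketched in plain Python. This is only an illustration, not the project's Balance data script: the one-hot layout `[left, forward, right]`, the `balance` function, and the rule of keeping as many forward samples as the larger turn class are all assumptions made for the example.

```python
import random

# Assumed one-hot label layout (hypothetical): [left, forward, right].
LEFT, FORWARD, RIGHT = [1, 0, 0], [0, 1, 0], [0, 0, 1]


def balance(samples, seed=0):
    """Downsample the forward class to the size of the larger turn class.

    samples: list of (features, one_hot_label) pairs.
    """
    lefts = [s for s in samples if s[1] == LEFT]
    forwards = [s for s in samples if s[1] == FORWARD]
    rights = [s for s in samples if s[1] == RIGHT]

    rng = random.Random(seed)
    rng.shuffle(forwards)  # pick the forward samples to keep at random
    keep = max(len(lefts), len(rights))
    balanced = lefts + forwards[:keep] + rights
    rng.shuffle(balanced)  # avoid class-ordered data at training time
    return balanced


# Toy data: 90 forward, 6 left and 4 right observations.
raw = [("f", FORWARD)] * 90 + [("l", LEFT)] * 6 + [("r", RIGHT)] * 4
balanced = balance(raw)
forward_count = sum(1 for _, label in balanced if label == FORWARD)
```

With the toy data above, 84 of the 90 forward observations are dropped, which shows how much data this kind of balancing can cost.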

Combining the data - The balanced files can now be joined together to form one final data file.

- Combine data is used to join all balanced data for easier loading during the training process.

- All batches of balanced data are now combined to form the final_data.npy file.

- This file has 2 images as features, with shape (80, 200, 3) and (50, 50, 1), respectively, and a one-hot-encoded label with 3 classes.

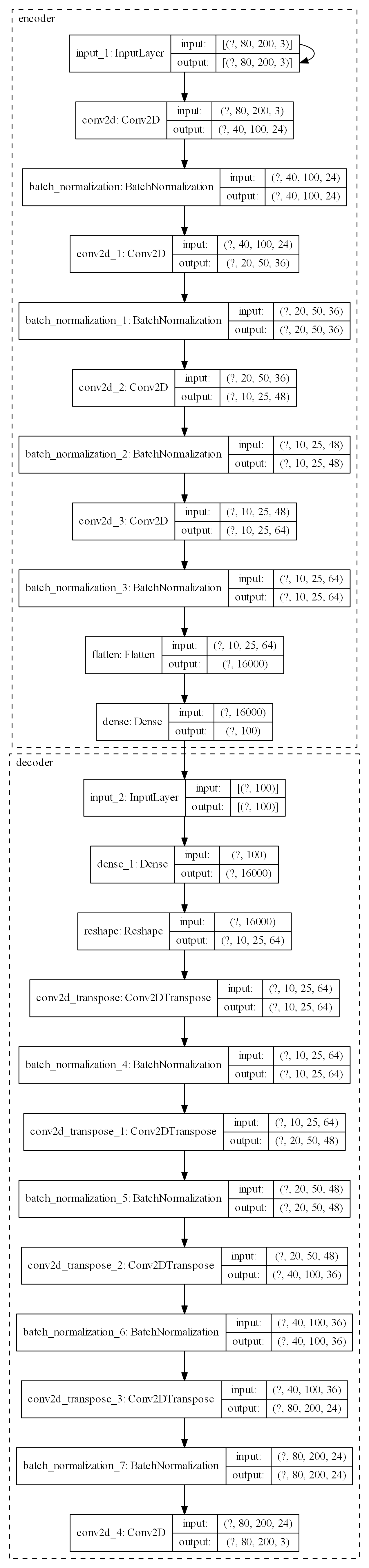

Training the Neural Network - DriveNet is used as the convolutional neural network for autonomous driving. CrashNet, an autoencoder, is used for anomaly detection during autonomous driving.

- Train model is used to train DriveNet over a max of 100 epochs.

- Train CrashNet is used to train CrashNet.

- Training is regulated by the EarlyStopping callback, which monitors the validation loss with a patience of 3 epochs.

- The Adam optimizer is used, with the learning rate set to 0.001.

- No data augmentation is done.
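To make the patience setting concrete, here is a plain-Python sketch of a patience rule. It is not Keras' own implementation and not the project's training script; the `stopping_epoch` function and the loss values are hypothetical and only show how training would end once the validation loss has failed to improve for 3 consecutive epochs.

```python
def stopping_epoch(val_losses, patience=3):
    """Return the 1-based epoch at which training would stop, or None.

    Training stops once the validation loss has not improved on its best
    value for `patience` consecutive epochs.
    """
    best = float("inf")
    waited = 0
    for epoch, loss in enumerate(val_losses, start=1):
        if loss < best:
            best = loss
            waited = 0  # improvement: reset the counter
        else:
            waited += 1
            if waited >= patience:
                return epoch
    return None  # ran through every epoch without triggering


# Made-up validation losses: the best value is reached at epoch 3.
losses = [0.90, 0.70, 0.60, 0.61, 0.62, 0.65, 0.50]
stopped_at = stopping_epoch(losses)
```

In this made-up run the loss at epoch 7 would have been the best so far, but training has already stopped by then, which is the usual trade-off of a small patience value.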

Testing the model - Final testing done in the game.

- Test model is used to run the trained model and control the car in real time. For CrashNet, a threshold of 0.0095 on the reconstruction loss (mean squared error) is used for anomaly detection.
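The thresholding step can be sketched as follows. This is a minimal illustration under stated assumptions: pixel values are scaled to [0, 1], frames are flattened to plain lists, a frame counts as an anomaly when its error is above the threshold, and the reconstructed values are made up here rather than produced by CrashNet. The helper names `mse` and `is_anomaly` are hypothetical.

```python
THRESHOLD = 0.0095  # reconstruction-loss threshold quoted above


def mse(original, reconstructed):
    """Mean squared error between two equally sized flat pixel sequences."""
    if len(original) != len(reconstructed):
        raise ValueError("inputs must have the same length")
    total = sum((a - b) ** 2 for a, b in zip(original, reconstructed))
    return total / len(original)


def is_anomaly(original, reconstructed, threshold=THRESHOLD):
    """Flag a frame whose reconstruction error exceeds the threshold (assumed rule)."""
    return mse(original, reconstructed) > threshold


frame = [0.2, 0.4, 0.6, 0.8]
close_reconstruction = [0.21, 0.39, 0.61, 0.79]  # small per-pixel error
poor_reconstruction = [0.5, 0.1, 0.9, 0.5]       # large per-pixel error

normal_flag = is_anomaly(frame, close_reconstruction)
crash_flag = is_anomaly(frame, poor_reconstruction)
```

An autoencoder trained only on normal driving frames tends to reconstruct unfamiliar frames poorly, so a high error is treated as a sign that something unusual is on screen.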

Graph of DriveNet, rendered using the plot_model function.

- NOTE: This architecture is heavily inspired by the paper "Variational End-to-End Navigation and Localization" by Alexander Amini and others. Refer to the citation section for more details.

Graph of CrashNet, rendered using the plot_model function.

Pipeline is as follows:

- Convert image to grayscale

- Apply Gaussian Blur

- Canny Edge Detection (threshold values calculated automatically)

- Masking region of interest

- Probabilistic Hough Transform

- Selecting 2 averaged lines (lanes)

- If both lines have negative slope, go right

- If both lines have positive slope, go left

- If the two lines have slopes of opposite sign, go straight

NOTE: This pipeline is very similar to the one used in Sentdex's Python Plays GTA-V. Refer to the citations section for more details.
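The final steering rule of the pipeline can be sketched in plain Python. This is an illustration only: each lane is assumed to be a segment `(x1, y1, x2, y2)` in image coordinates, the `slope` and `steer` helpers are hypothetical, and treating a vertical or horizontal lane as "straight" is an assumption the pipeline description does not cover.

```python
def slope(line):
    """Slope of a segment (x1, y1, x2, y2); None for a vertical segment."""
    x1, y1, x2, y2 = line
    if x2 == x1:
        return None
    return (y2 - y1) / (x2 - x1)


def steer(lane_a, lane_b):
    """Map the signs of the two lane slopes to a steering decision."""
    s1, s2 = slope(lane_a), slope(lane_b)
    if s1 is None or s2 is None:
        return "straight"  # assumption: no decision from vertical lanes
    if s1 < 0 and s2 < 0:
        return "right"
    if s1 > 0 and s2 > 0:
        return "left"
    return "straight"  # opposite signs (or a zero slope)


both_negative = steer((0, 100, 50, 50), (60, 90, 100, 40))
both_positive = steer((0, 0, 50, 50), (10, 0, 60, 40))
opposite = steer((0, 100, 50, 50), (60, 50, 100, 100))
```

Note that in image coordinates the y axis points down, so a negative slope here is a line that rises toward the right of the frame.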

- MIT - Intro to Deep Learning Course

- Variational End-to-End Navigation and Localization

- Sentdex's Python Plays GTA-V

- AlexNet

- Use .hdf5 files instead of .npy files for better memory utilization.

- Switch to PyTorch if dealing with .hdf5 datasets.

- Implement some sort of reinforcement learning algorithm to avoid collecting data.

- Merge LaneFinder, DriveNet and CrashNet for better driving.

- CARLA

- TORCS

- VDrift

- BeamNG

- Add a control filter for the output, maybe a low pass filter

- Use Vagrant with multiple VMs to run the game with different camera views

- Use a cheat engine that tracks the car's relative position, speed, angle, etc.

- Have analog control with a PID controller

- Have a pre-trained depth estimator model, use the depth map as features, and keypresses as labels.
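As an illustration of the control-filter idea in the list above, a low-pass filter on the model output could be as simple as an exponential moving average. This is only a sketch of one possible filter; the `LowPassFilter` class, the `alpha` parameter, and the idea of feeding it a scalar steering value are assumptions, not part of the project.

```python
class LowPassFilter:
    """Exponential moving average: smaller alpha means stronger smoothing."""

    def __init__(self, alpha=0.3):
        if not 0.0 < alpha <= 1.0:
            raise ValueError("alpha must be in (0, 1]")
        self.alpha = alpha
        self.state = None

    def update(self, value):
        """Blend the new value with the previous filtered output."""
        if self.state is None:
            self.state = value  # first sample initializes the filter
        else:
            self.state = self.alpha * value + (1 - self.alpha) * self.state
        return self.state


# Hypothetical steering signal that jumps between 0 and 1.
lpf = LowPassFilter(alpha=0.5)
smoothed = [lpf.update(v) for v in [0.0, 1.0, 1.0, 0.0]]
```

The filtered signal follows the jumps with a lag instead of switching instantly, which is the kind of behavior that could reduce jittery key presses.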

Open to suggestions. Feel free to fork this repository. If you would like to use some code from here, please give the required citations and references.