Liyuan Zhu¹, Shengyu Huang², Konrad Schindler², Iro Armeni¹

CVPR 2024

¹Stanford University, ²ETH Zurich

This repository contains the official implementation of the paper Living Scenes: Multi-object Relocalization and Reconstruction in Changing 3D Environments.

The code has been tested on Ubuntu 22.04 with an Intel 13900K CPU and an NVIDIA RTX 4090 (24 GB) or A100 (80 GB) GPU.

conda create -n livingscenes python=3.9 -y

conda activate livingscenes

Feel free to adapt the package versions below to your hardware (for example, your CUDA version).

conda install pytorch==1.13.0 torchvision==0.14.0 torchaudio==0.13.0 pytorch-cuda=11.6 -c pytorch -c nvidia -y

conda install -c fvcore -c iopath -c conda-forge fvcore iopath -y

conda install -c bottler nvidiacub -y

conda install pytorch3d=0.7.4 -c pytorch3d -y

conda install pyg -c pyg -y

pip install cython

cd lib_shape_prior

python setup.py build_ext --inplace

pip install -U python-pycg[all] -f https://pycg.huangjh.tech/packages/index.html

pip install -r requirements.txt

If your hardware matches the tested configuration, you can simply run install.sh instead.
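After installing, a quick way to confirm that the main dependencies are visible to Python is to query their installed versions. The snippet below is a minimal sketch using only the standard library's importlib.metadata; the helper name installed_versions is hypothetical, and the distribution names in the list are assumptions based on the install commands above (conda packages usually register pip metadata, but the exact names may need adjusting on your system).

```python
from importlib.metadata import PackageNotFoundError, version


def installed_versions(packages):
    """Return {distribution name: version string, or None if not installed}."""
    found = {}
    for name in packages:
        try:
            found[name] = version(name)
        except PackageNotFoundError:
            found[name] = None
    return found


if __name__ == "__main__":
    # Assumed distribution names for the packages installed above.
    required = ["torch", "torchvision", "pytorch3d", "torch_geometric", "Cython", "python-pycg"]
    for name, ver in installed_versions(required).items():
        print(f"{name:16s} {ver or 'MISSING'}")
```

A MISSING entry usually means the package was installed into a different conda environment, or is registered under a slightly different distribution name.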

We generate the training data following EFEM, in three steps:

- Make the ShapeNet mesh watertight

- Generate SDF samples from the watertight mesh

- Render depth maps and back-project them to point clouds
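To illustrate the second step, the sketch below shows one common way SDF training samples are drawn: points jittered around the surface with Gaussian noise, plus points sampled uniformly in a bounding cube, each labeled with its signed distance. It is an assumption-laden illustration, not EFEM's exact recipe: an analytic sphere stands in for the watertight ShapeNet mesh so the signed distance is exact, the sign convention (negative inside) is assumed, and the helper names and parameters (sigma, n_uniform, bound) are hypothetical. For a real mesh, the surface points and SDF values would come from mesh sampling and a mesh distance query instead.

```python
import numpy as np


def sphere_sdf(points, radius=0.5):
    """Exact signed distance to an origin-centred sphere (negative inside)."""
    return np.linalg.norm(points, axis=-1) - radius


def sphere_surface(n, radius=0.5, rng=None):
    """Uniformly distributed points on the sphere surface (stand-in for mesh sampling)."""
    rng = np.random.default_rng() if rng is None else rng
    dirs = rng.normal(size=(n, 3))
    return radius * dirs / np.linalg.norm(dirs, axis=1, keepdims=True)


def sample_sdf(surface_points, sdf_fn, n_uniform=500, sigma=0.01, bound=1.0, rng=None):
    """Return (points, sdf): jittered near-surface samples plus uniform samples in a cube."""
    rng = np.random.default_rng() if rng is None else rng
    near = surface_points + rng.normal(scale=sigma, size=surface_points.shape)
    uniform = rng.uniform(-bound, bound, size=(n_uniform, 3))
    points = np.concatenate([near, uniform], axis=0)
    return points, sdf_fn(points)


rng = np.random.default_rng(0)
pts, sdf = sample_sdf(sphere_surface(2000, rng=rng), sphere_sdf, rng=rng)
print(pts.shape, sdf.shape)  # (2500, 3) (2500,)
```

Concentrating most samples near the surface gives the network dense supervision where the zero level set lies, while the uniform samples keep the field well behaved away from the shape.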

We provide a script for processing the meshes: https://github.com/Zhu-Liyuan/mesh_processing.

The FlyingShape dataset is generated from ShapeNet. [download link].

Please download the 3RScan dataset from the original repository https://github.com/WaldJohannaU/3RScan. Its authors provide tools for processing the raw data.

We use the raw RGB-D measurements and back-project the depth images to obtain the point cloud of each scene, then filter out the background.
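The back-projection step can be sketched with a standard pinhole camera model: each pixel (u, v) with depth z maps to x = (u - cx) z / fx, y = (v - cy) z / fy in camera coordinates, and a camera-to-world pose moves the points into a shared scene frame. This is a minimal NumPy sketch under stated assumptions, not the repository's preprocessing code: the function name is hypothetical, depth_scale=1000 assumes depth stored in millimetres, and keep_mask is a hypothetical per-pixel foreground mask standing in for whatever background filtering is actually used.

```python
import numpy as np


def backproject_depth(depth, fx, fy, cx, cy, cam_to_world=None, keep_mask=None, depth_scale=1000.0):
    """Back-project a depth image (H, W) into an (N, 3) point cloud.

    depth_scale converts raw depth units to metres (1000 assumes millimetres).
    keep_mask is an optional (H, W) boolean foreground mask (hypothetical input).
    """
    h, w = depth.shape
    v, u = np.meshgrid(np.arange(h), np.arange(w), indexing="ij")  # pixel rows, columns
    z = depth.astype(np.float64) / depth_scale
    valid = z > 0  # zero depth marks a missing measurement
    if keep_mask is not None:
        valid &= keep_mask  # drop pixels marked as background
    x = (u[valid] - cx) * z[valid] / fx
    y = (v[valid] - cy) * z[valid] / fy
    points = np.stack([x, y, z[valid]], axis=1)
    if cam_to_world is not None:
        # Apply the 4x4 camera-to-world rigid transform: p_world = R p_cam + t.
        points = points @ cam_to_world[:3, :3].T + cam_to_world[:3, 3]
    return points
```

Points from several frames, each transformed with its own pose, can then be concatenated into one scene point cloud.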

After downloading the dataset, set root_path in configs/3rscan.yaml to the location of the data on your machine.
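If you switch between machines often, the edit can be scripted. The sketch below is a standard-library-only helper (set_root_path is a hypothetical name) that rewrites the root_path line in place. It assumes root_path is a single-line, unindented key in configs/3rscan.yaml; if the key is nested or the path needs quoting, editing with a proper YAML library is the safer choice.

```python
import re
from pathlib import Path


def set_root_path(config_file, data_dir):
    """Rewrite the top-level `root_path:` line of a YAML config in place.

    Assumes root_path is a single-line, unindented key (not verified against the repo).
    """
    path = Path(config_file)
    text = path.read_text()
    # A function replacement avoids backslashes in data_dir being read as regex escapes.
    new_text, n = re.subn(r"(?m)^root_path:.*$", lambda _m: f"root_path: {data_dir}", text)
    if n != 1:
        raise ValueError(f"expected one top-level root_path in {path}, found {n}")
    path.write_text(new_text)
```

For example, set_root_path("configs/3rscan.yaml", "/data/3RScan") would point the config at /data/3RScan, where that directory is a placeholder for your own download location.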

To train the Vector Neurons (VN) encoder-decoder network on ShapeNet data, run:

cd lib_shape_prior

python run.py --config configs/3rscan/dgcnn_attn_inner.yaml
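For readers new to Vector Neurons, the sketch below shows two building blocks such an encoder is typically assembled from: a VN linear layer, which mixes feature channels but never the x/y/z axis, and a VN-ReLU, which removes the part of each feature vector that points against a learned direction. Both commute with rotations, which is what makes the shape prior rotation-equivariant. This is a minimal NumPy illustration of the Vector Neurons formulation for the features of a single point; the function names are hypothetical, and it is not the network in lib_shape_prior.

```python
import numpy as np


def vn_linear(V, W):
    """VN linear layer: V is (C, 3), one 3D vector per channel; W is (C_out, C).

    W only mixes channels, so W @ (V @ R.T) == (W @ V) @ R.T for any rotation R.
    """
    return W @ V


def vn_relu(V, W, U, eps=1e-8):
    """VN-ReLU: keep q where it agrees with direction k, else remove its component along k."""
    q = W @ V  # features, (C_out, 3)
    k = U @ V  # learned directions, (C_out, 3)
    dot = np.sum(q * k, axis=1, keepdims=True)
    k_sq = np.sum(k * k, axis=1, keepdims=True)
    return np.where(dot >= 0, q, q - dot / (k_sq + eps) * k)


def random_rotation(rng):
    """Random proper rotation matrix via QR decomposition."""
    q, r = np.linalg.qr(rng.normal(size=(3, 3)))
    q *= np.sign(np.diag(r))  # fix column signs so the factorization is unique
    if np.linalg.det(q) < 0:
        q[:, 0] *= -1  # flip one axis to get det = +1
    return q


rng = np.random.default_rng(0)
V = rng.normal(size=(8, 3))
W, U = rng.normal(size=(16, 8)), rng.normal(size=(16, 8))
R = random_rotation(rng)
# Rotating the input and then applying the layer equals applying the layer and then rotating.
print(np.allclose(vn_relu(V @ R.T, W, U), vn_relu(V, W, U) @ R.T))  # True
```

Because inner products and norms are unchanged by rotation, the keep-or-project decision in vn_relu is the same for rotated and unrotated inputs, so the equivariance holds exactly up to floating-point error.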

We also provide the pretrained weights used in our paper in the weight folder. With them, you should be able to reproduce performance similar to that reported in the paper.

To evaluate performance on 3RScan, run:

python eval_3rscan.py

To evaluate performance on FlyingShape, run:

python eval_flyingshape.py
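Since the task is multi-object relocalization, a natural way to score an estimated object pose against ground truth is the geodesic rotation error, arccos((trace(R_pred^T R_gt) - 1) / 2), together with the Euclidean translation error. The sketch below computes both for 4x4 rigid transforms. It is an assumption for illustration only: the function names are hypothetical, and the metrics, thresholds, and units actually reported by eval_3rscan.py and eval_flyingshape.py may differ.

```python
import numpy as np


def rotation_error_deg(R_pred, R_gt):
    """Geodesic angle in degrees between two 3x3 rotation matrices."""
    cos = (np.trace(R_pred.T @ R_gt) - 1.0) / 2.0
    # Clip guards against values slightly outside [-1, 1] from floating-point error.
    return np.degrees(np.arccos(np.clip(cos, -1.0, 1.0)))


def translation_error(t_pred, t_gt):
    """Euclidean distance between two translations, in the input's units."""
    return float(np.linalg.norm(np.asarray(t_pred, dtype=float) - np.asarray(t_gt, dtype=float)))


def pose_errors(T_pred, T_gt):
    """(rotation error in degrees, translation error) between two 4x4 rigid transforms."""
    return (rotation_error_deg(T_pred[:3, :3], T_gt[:3, :3]),
            translation_error(T_pred[:3, 3], T_gt[:3, 3]))
```

For example, a prediction rotated 90 degrees about the z axis relative to ground truth yields a rotation error of about 90 degrees, regardless of the translation.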

Thanks to the authors of Mask3D, you can use their updated code (https://github.com/cvg/Mask3D) to obtain a predicted instance segmentation of your point cloud and run LivingScenes on it.
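Between segmentation and LivingScenes, the scene point cloud typically has to be split into per-object point clouds. The sketch below assumes the segmentation is available as one integer instance id per point, with -1 marking unassigned points; both the label convention and the helper name split_instances are hypothetical, and neither the Mask3D output format nor the input format LivingScenes expects is specified here, so adapt it accordingly.

```python
import numpy as np


def split_instances(points, instance_ids, ignore_id=-1, min_points=1):
    """Group an (N, 3) point cloud into {instance_id: (M, 3) array of points}.

    ignore_id marks unassigned/background points (an assumed convention);
    instances with fewer than min_points points are dropped.
    """
    points = np.asarray(points)
    instance_ids = np.asarray(instance_ids)
    if points.shape[0] != instance_ids.shape[0]:
        raise ValueError("points and instance_ids must have the same length")
    objects = {}
    for inst in np.unique(instance_ids):
        if inst == ignore_id:
            continue
        obj = points[instance_ids == inst]
        if len(obj) >= min_points:
            objects[int(inst)] = obj
    return objects
```

Raising min_points is a simple way to discard tiny spurious segments before reconstruction.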

If you have any questions, please contact Liyuan Zhu (liyuan.zhu@stanford.edu).

Our implementation of the shape prior training relies heavily on EFEM and Vector Neurons. We thank their authors for open-sourcing their code and for insightful discussions at the early stage of this project. We also thank Francis Engelmann for providing the updated Mask3D. Please cite these works as well.

If you find our code or paper useful, please cite:

@inproceedings{zhu2023living,

author = {Liyuan Zhu and Shengyu Huang and Konrad Schindler, Iro Armeni},

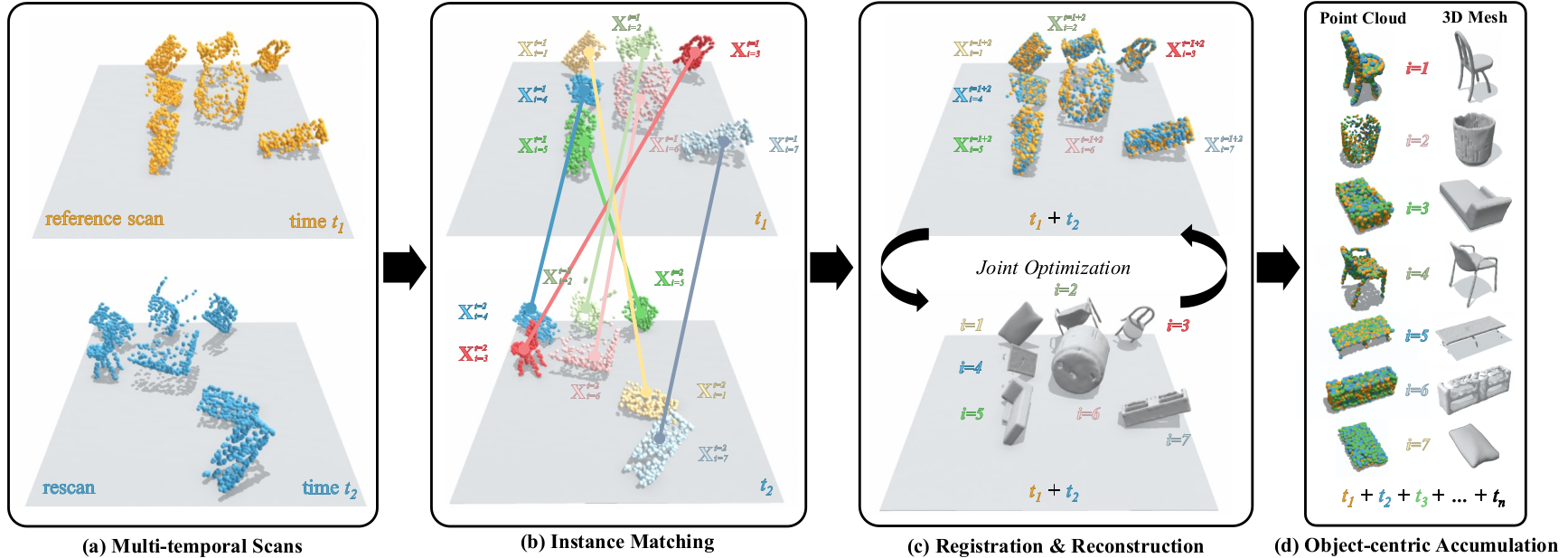

title = {Living Scenes: Multi-object Relocalization and Reconstruction in Changing 3D Environments},

booktitle = {The IEEE Conference on Computer Vision and Pattern Recognition (CVPR)},

year = {2024}

}