| Sequence | # Robots | Traversal (m) | Duration (min) |

|---|---|---|---|

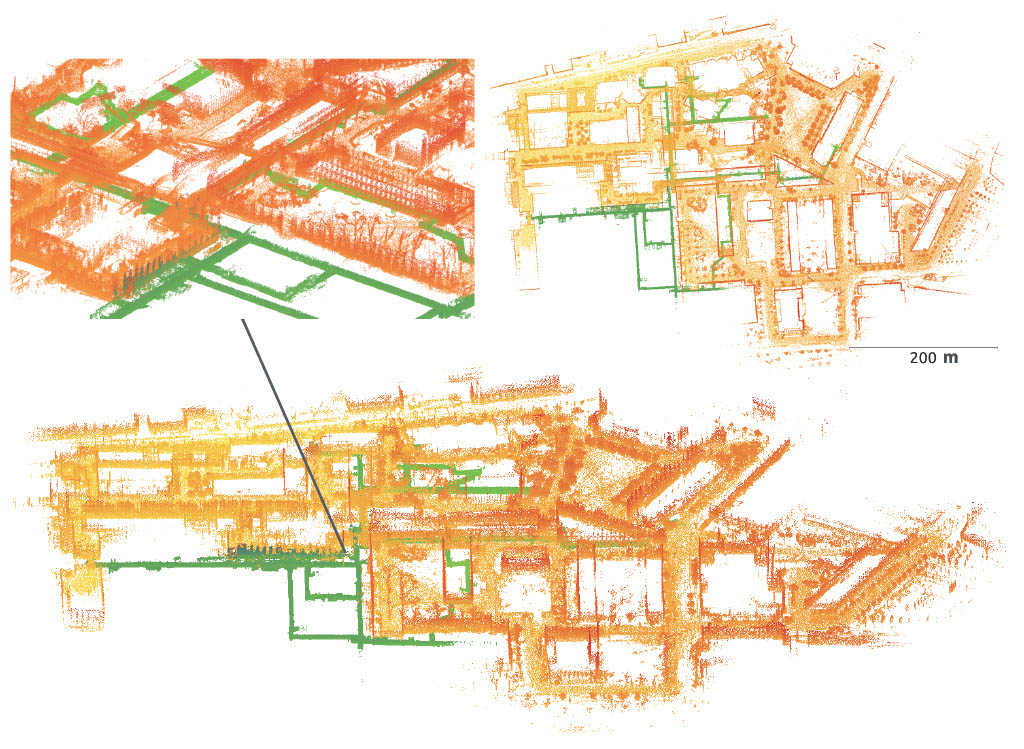

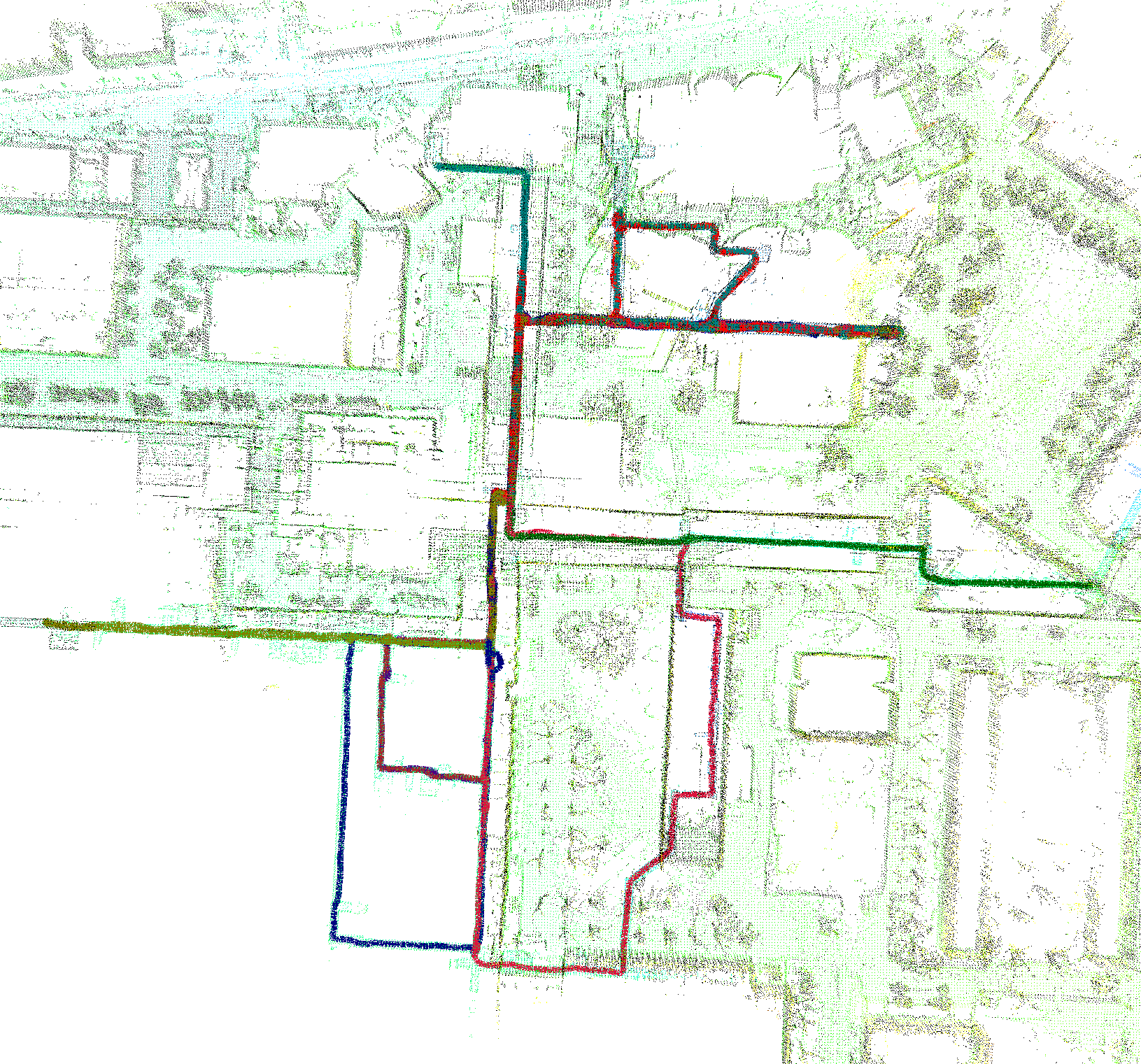

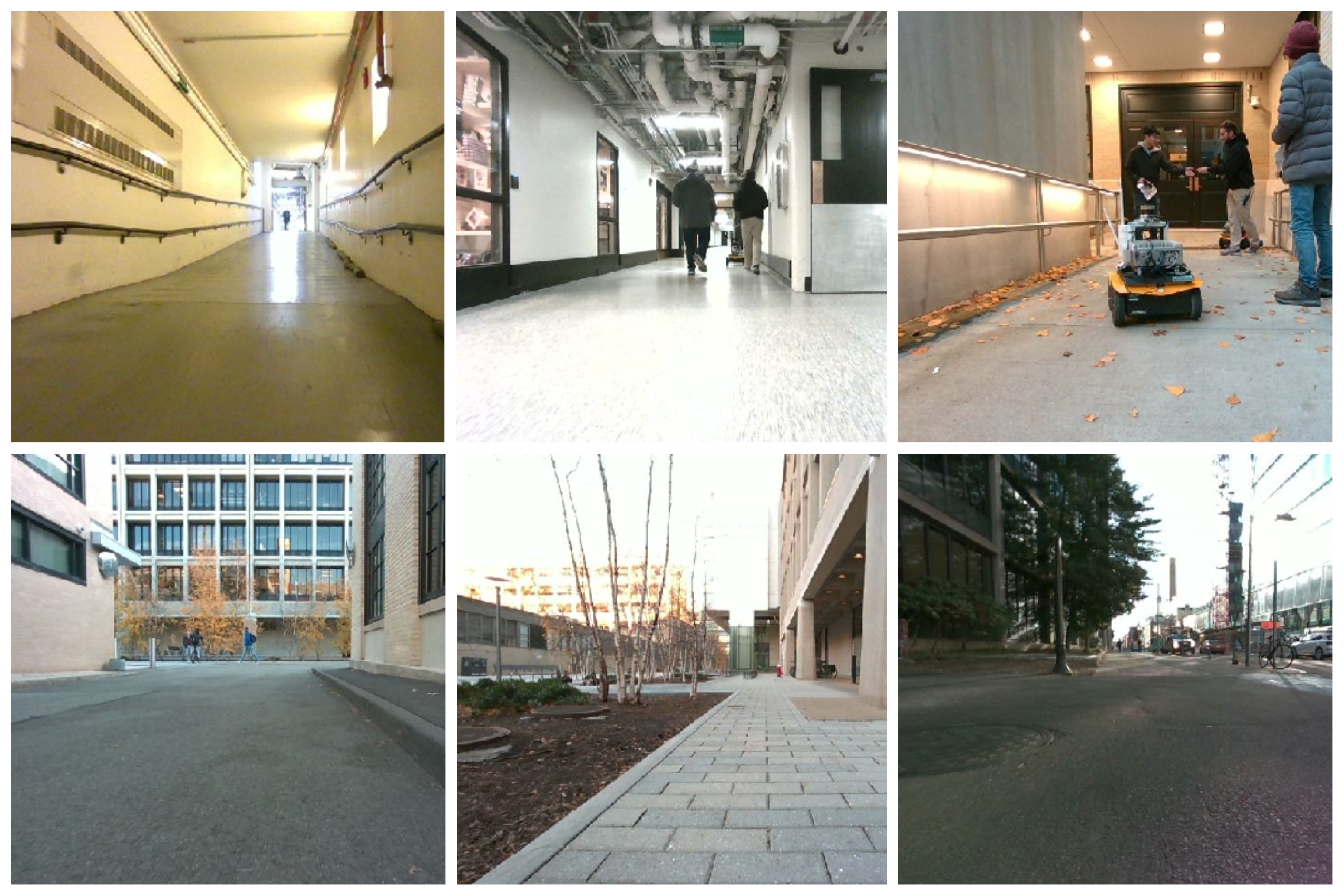

| Campus-Outdoor | 6 | 6044 | 19 |

| Campus-Tunnels | 8 | 6753 | 28 |

| Campus-Hybrid | 8 | 7785 | 27 |

We use a single set of camera intrinsic and extrinsic parameters for all the robots. The parameters follow the Kimera-VIO format and can be downloaded below.

The datasets are in compressed rosbag format. For best results, decompress the rosbags before usage.

    rosbag decompress *.bag

| Topic | Type | Description |
|---|---|---|
| /xxx/forward/color/image_raw/compressed | sensor_msgs/CompressedImage | RGB Image from D455 |
| /xxx/forward/color/camera_info | sensor_msgs/CameraInfo | RGB Image Camera Info |
| /xxx/forward/depth/image_rect_raw | sensor_msgs/Image | Depth Image from D455 |
| /xxx/forward/depth/camera_info | sensor_msgs/CameraInfo | Depth Image Camera Info |
| /xxx/forward/infra1/image_rect_raw/compressed | sensor_msgs/CompressedImage | Compressed Gray Scale Stereo Left |
| /xxx/forward/infra1/camera_info | sensor_msgs/CameraInfo | Stereo Left Camera Info |
| /xxx/forward/infra2/image_rect_raw/compressed | sensor_msgs/CompressedImage | Compressed Gray Scale Stereo Right |
| /xxx/forward/infra2/camera_info | sensor_msgs/CameraInfo | Stereo Right Camera Info |
| /xxx/forward/imu | sensor_msgs/Imu | IMU from D455 |
| /xxx/jackal_velocity_controller/odom | nav_msgs/Odometry | Wheel Odometry |
| /xxx/lidar_points | sensor_msgs/PointCloud2 | Lidar Point Cloud |
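The `/xxx/` prefix in the topic table appears to be a placeholder for the robot name (for example `apis`, `sobek`, or `thoth`, which are named in the LiDAR description). That reading is an assumption, and `topics_for_robot` is a hypothetical helper name, not part of the dataset tooling. Under that assumption, a minimal Python sketch that expands the topic list for one robot could look like this:

```python
# Topic suffixes copied from the topic table.
# Assumption: "xxx" in the table is a placeholder for the robot name.
TOPIC_SUFFIXES = [
    "forward/color/image_raw/compressed",
    "forward/color/camera_info",
    "forward/depth/image_rect_raw",
    "forward/depth/camera_info",
    "forward/infra1/image_rect_raw/compressed",
    "forward/infra1/camera_info",
    "forward/infra2/image_rect_raw/compressed",
    "forward/infra2/camera_info",
    "forward/imu",
    "jackal_velocity_controller/odom",
    "lidar_points",
]


def topics_for_robot(robot_name):
    """Return the full topic names for one robot (hypothetical helper)."""
    name = robot_name.strip("/")
    if not name:
        raise ValueError("robot_name must not be empty")
    return ["/{}/{}".format(name, suffix) for suffix in TOPIC_SUFFIXES]


if __name__ == "__main__":
    for topic in topics_for_robot("apis"):
        print(topic)
```

The resulting list can then be handed to whatever reader you use, for instance as the `topics` argument when iterating over a bag with the ROS 1 `rosbag` Python API.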

The ground truth trajectory is generated using GPS and total-station assisted LiDAR SLAM based on LOCUS and LAMP. The process is described in further detail in our paper. You can download the ground truth trajectory and reference point cloud below.

If you find the dataset useful, please cite the following paper:

- Y. Tian, Y. Chang, L. Quang, A. Schang, C. Nieto-Granda, J. P. How, and L. Carlone, "Resilient and Distributed Multi-Robot Visual SLAM: Datasets, Experiments, and Lessons Learned," arXiv preprint arXiv:2304.04362, 2023.

    @ARTICLE{tian23arxiv_kimeramultiexperiments,
      author={Yulun Tian and Yun Chang and Long Quang and Arthur Schang and Carlos Nieto-Granda and Jonathan P. How and Luca Carlone},
      title={Resilient and Distributed Multi-Robot Visual SLAM: Datasets, Experiments, and Lessons Learned},
      year={2023},
      eprint={2304.04362},
      archivePrefix={arXiv},
      primaryClass={cs.RO}
    }

| Name | Rosbags | GT | Photos | Trajectory |
|---|---|---|---|---|
| Campus-Outdoor | request | link |  |  |
| Campus-Tunnels | request | link |  |  |
| Campus-Hybrid | request | link |  |  |

The camera calibration parameters used for our experiments can be found here.

The point cloud of the reference ground truth map can be downloaded here.

Descriptions of LiDAR Sensor Configuration

For the 10_14 sequences (i.e., campus_outdoor_1014_compressed in the shared drive), LiDAR point clouds were acquired with a Velodyne VLP-16.

For the 12_07 and 12_08 sequences (i.e., campus_tunnels_1207_compressed and campus_hybrid_1208_compressed, respectively), some of the robots have different LiDAR setups.

The apis, sobek, and thoth sequences were acquired with OS1-64 Gen1 LiDAR sensors, whose hardware configuration differs from that of more recent OS1-64 sensors; the other robots carry Velodyne VLP-16 sensors.

The extrinsics can be found here. Please note that these extrinsics are not perfect. We are always open to contributions to our Kimera-Multi dataset!
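Because the LiDAR model depends on both the sequence and the robot, it can help to encode the description above as a small lookup before choosing a point cloud parser. The following Python sketch does only that; the function name `lidar_model` and the sequence keys (`"10_14"`, `"12_07"`, `"12_08"`) are illustrative choices, not identifiers shipped with the dataset.

```python
# Robots described above as carrying an OS1-64 Gen1 in the 12_07 and 12_08 sequences.
OS1_ROBOTS = frozenset({"apis", "sobek", "thoth"})

# Sequence keys are an illustrative convention based on the dates used above.
ALL_VLP16_SEQUENCES = frozenset({"10_14"})
MIXED_LIDAR_SEQUENCES = frozenset({"12_07", "12_08"})


def lidar_model(sequence, robot):
    """Return the LiDAR model for a (sequence, robot) pair (hypothetical helper)."""
    if sequence in ALL_VLP16_SEQUENCES:
        return "Velodyne VLP-16"
    if sequence in MIXED_LIDAR_SEQUENCES:
        if robot.lower() in OS1_ROBOTS:
            return "OS1-64 Gen1"
        return "Velodyne VLP-16"
    raise ValueError("unknown sequence: {!r}".format(sequence))


if __name__ == "__main__":
    print(lidar_model("12_07", "apis"))
    print(lidar_model("10_14", "apis"))
```

Raising on an unknown sequence, rather than defaulting to one sensor, keeps a typo in the sequence key from silently selecting the wrong LiDAR configuration.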