Facemap is a framework for predicting neural activity from mouse orofacial movements. It includes a pose estimation model for tracking distinct keypoints on the mouse face and a neural network model for predicting neural activity using the pose estimates. It can also be used to compute the singular value decomposition (SVD) of behavioral videos.

Please find the detailed documentation at facemap.readthedocs.io.

To learn about Facemap, read the paper or check out the tweet thread. For support, please open an issue.

- For the latest released version (from PyPI), which includes SVD processing only, run `pip install facemap` for the headless version or `pip install facemap[gui]` for the GUI. Note: `pip install facemap` is not yet available for the latest tracker and neural model; instead, install with `pip install git+https://github.com/mouseland/facemap.git`

If you use Facemap, please cite the Facemap paper:

Syeda, A., Zhong, L., Tung, R., Long, W., Pachitariu, M.*, & Stringer, C.* (2024). Facemap: a framework for modeling neural activity based on orofacial tracking. Nature Neuroscience, 27(1), 187-195.


If you use the SVD computation or pupil tracking components, please also cite our previous paper:

Stringer, C.*, Pachitariu, M.*, Steinmetz, N., Reddy, C. B., Carandini, M., & Harris, K. D. (2019). Spontaneous behaviors drive multidimensional, brainwide activity. Science, 364(6437), eaav7893.


The MATLAB version of the GUI is no longer supported (see old documentation).

Logo was designed by Atika Syeda and Tzuhsuan Ma.

Please follow the video tutorial for instructions on how to use Facemap or read the instructions below.

If you have an older facemap environment, you can remove it with `conda env remove -n facemap` before creating a new one.

If you are using a GPU, make sure its drivers and the CUDA libraries are correctly installed.

- Install an Anaconda distribution of Python. Note you might need to use an Anaconda prompt if you did not add Anaconda to the path.
- Open an Anaconda prompt / command prompt which has `conda` for Python 3 in the path.
- Create a new environment with `conda create --name facemap python=3.8`. We recommend Python 3.8, but Python 3.9 and 3.10 will likely work as well.
- To activate this new environment, run `conda activate facemap`.
- To install the minimal version of facemap, run `python -m pip install facemap`.
- To install facemap and the GUI, run `python -m pip install facemap[gui]`. If you're on a zsh server, you may need to use ' ' around the facemap[gui] call: `python -m pip install 'facemap[gui]'`.
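After following the steps above, the result can be double-checked from inside the activated environment. The snippet below is a minimal sketch using only the Python standard library; `check_environment` is a hypothetical helper written for this example and is not part of Facemap.

```python
import importlib.util
import sys

# Python versions mentioned in the steps above
# (3.8 is recommended; 3.9 and 3.10 "will likely work").
MENTIONED_VERSIONS = {(3, 8), (3, 9), (3, 10)}


def check_environment(version=None):
    """Return a small report about the interpreter (hypothetical helper)."""
    if version is None:
        version = sys.version_info[:2]
    version = tuple(version)
    return {
        "python": "{}.{}".format(*version),
        "version_mentioned": version in MENTIONED_VERSIONS,
        # find_spec returns None when a top-level package cannot be found.
        "facemap_installed": importlib.util.find_spec("facemap") is not None,
    }


if __name__ == "__main__":
    print(check_environment())
```

If `facemap_installed` is reported as false, the most likely cause is that the `facemap` environment has not been activated in the current shell.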

To upgrade facemap (package here), run the following in the environment:

python -m pip install facemap --upgrade

Note you will always have to run `conda activate facemap` before you run facemap. If you want to run jupyter notebooks in this environment, then also run `pip install notebook` and `python -m pip install matplotlib`.

You can also try to install facemap and the GUI dependencies from your base environment using the command

python -m pip install facemap[gui]

If you have issues with installation, see the docs for more details. You can also use the facemap environment file included in the repository and create a facemap environment with `conda env create -f environment.yml`, which may solve certain dependency issues.

If these suggestions fail, open an issue.

If you plan on running many images, you may want to install a GPU version of torch (if it isn't already installed).

Before installing the GPU version, remove the CPU version:

pip uninstall torch

Follow the instructions here to determine which version to install. The Anaconda install is strongly recommended; choose the CUDA version that is supported by your GPU (newer GPUs may need CUDA versions newer than 10.2). For instance, this command will install the CUDA 11.3 version on Linux and Windows (the torchvision and torchaudio packages are omitted because facemap doesn't require them):

conda install pytorch==1.12.1 cudatoolkit=11.3 -c pytorch

and this will install the 11.7 toolkit:

conda install pytorch pytorch-cuda=11.7 -c pytorch
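Once a GPU build of torch is installed, it can be useful to confirm that PyTorch actually sees the GPU. The following is a minimal sketch; `describe_torch_device` is a hypothetical helper for this example, and it only relies on `torch.cuda.is_available()` and `torch.cuda.get_device_name()`.

```python
def describe_torch_device():
    """Report which device PyTorch would use (hypothetical helper)."""
    try:
        import torch
    except ImportError:
        # torch is missing, for example right after `pip uninstall torch`.
        return "torch is not installed"
    if torch.cuda.is_available():
        # Name of the first visible CUDA device.
        return "cuda: " + torch.cuda.get_device_name(0)
    return "cpu"


if __name__ == "__main__":
    print(describe_torch_device())
```

If this prints `cpu` on a machine with a GPU, the CPU build of torch is probably still installed, or the driver and CUDA versions do not match.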

Facemap supports grayscale and RGB movies. The software can process multi-camera videos for pose tracking and SVD analysis. Please see example movies for testing the GUI. Movie file extensions supported include:

'.mj2','.mp4','.mkv','.avi','.mpeg','.mpg','.asf'
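To check in advance which files in a folder have one of these extensions, a small script along these lines may help. It is only a sketch: the helper names are made up for this example, and matching the extension case-insensitively is an assumption rather than documented Facemap behavior.

```python
from pathlib import Path

# Extensions copied from the list above.
SUPPORTED_EXTENSIONS = {".mj2", ".mp4", ".mkv", ".avi", ".mpeg", ".mpg", ".asf"}


def is_supported_movie(filename):
    """True if the extension is in the list above (case-insensitive by assumption)."""
    return Path(filename).suffix.lower() in SUPPORTED_EXTENSIONS


def find_movies(folder):
    """Return the sorted paths of files in `folder` with a supported extension."""
    return sorted(
        p for p in Path(folder).iterdir()
        if p.is_file() and is_supported_movie(p.name)
    )


if __name__ == "__main__":
    for movie in find_movies("."):
        print(movie)
```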

For more details, please refer to the data acquisition page.

For any issues or questions about Facemap, please open an issue. Please find solutions to some common issues below:

The models will be downloaded automatically from our website when you first run Facemap for processing keypoints. If download of pretrained models fails, please try the following:

- To resolve a certificate error, try `pip install --upgrade certifi`, or
- download the pretrained model files (model state and model parameters) and place them in the `models` subfolder of the hidden `facemap` folder located in your home directory. The path to the hidden folder is `C:\Users\your_username\.facemap\models` on Windows and `/home/your_username/.facemap/models` on Linux and Mac.
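To locate the models folder described above and see what it currently contains, something like the following can be used. This is a minimal sketch with hypothetical helper names; it only builds the `.facemap/models` path under the home directory and lists whatever files are there, without assuming any particular model file names.

```python
from pathlib import Path


def facemap_models_dir(home=None):
    """Build the models folder path described above: <home>/.facemap/models."""
    home = Path(home) if home is not None else Path.home()
    return home / ".facemap" / "models"


def list_model_files(home=None):
    """Return file names found in the models folder, or [] if it does not exist."""
    folder = facemap_models_dir(home)
    if not folder.is_dir():
        return []
    return sorted(p.name for p in folder.iterdir() if p.is_file())


if __name__ == "__main__":
    print(facemap_models_dir())
    print(list_model_files())
```

An empty list means the automatic download did not complete, in which case the manually downloaded files belong in the printed folder.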

To get started, run the following command in terminal to open the GUI:

python -m facemap

Click "File" and load a single video file ("Load video"), or click "Load multiple videos" to choose a folder from which you can select movies to run. The video(s) will pop up in the left side of the GUI. You can zoom in and out with the mouse wheel, and you can drag by holding down the mouse. Double-click to return to the original, full view.

Next, you can extract information from the videos: track keypoints, compute movie SVDs, track pupil size, etc. You can also load neural activity and predict it from these extracted features.

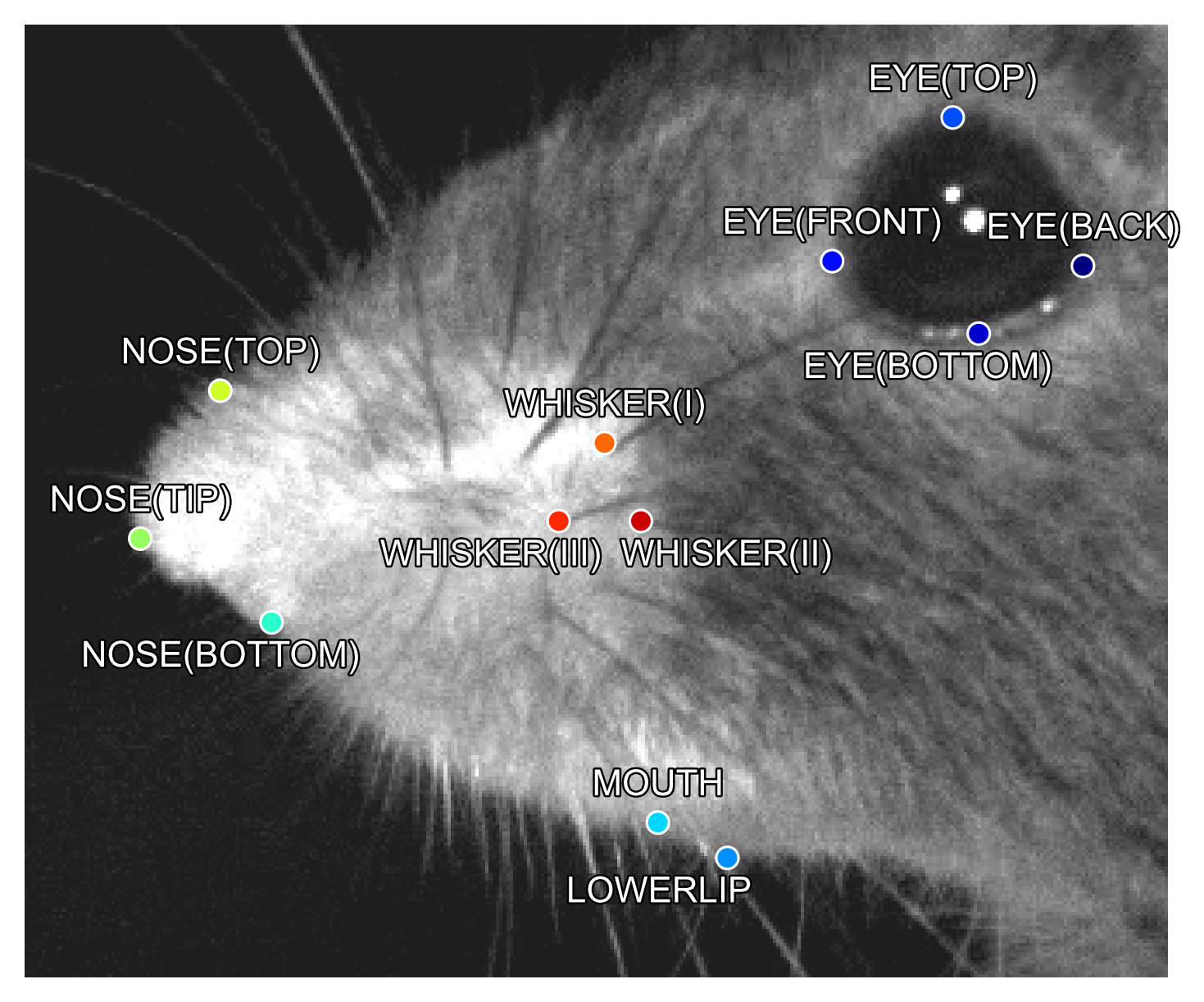

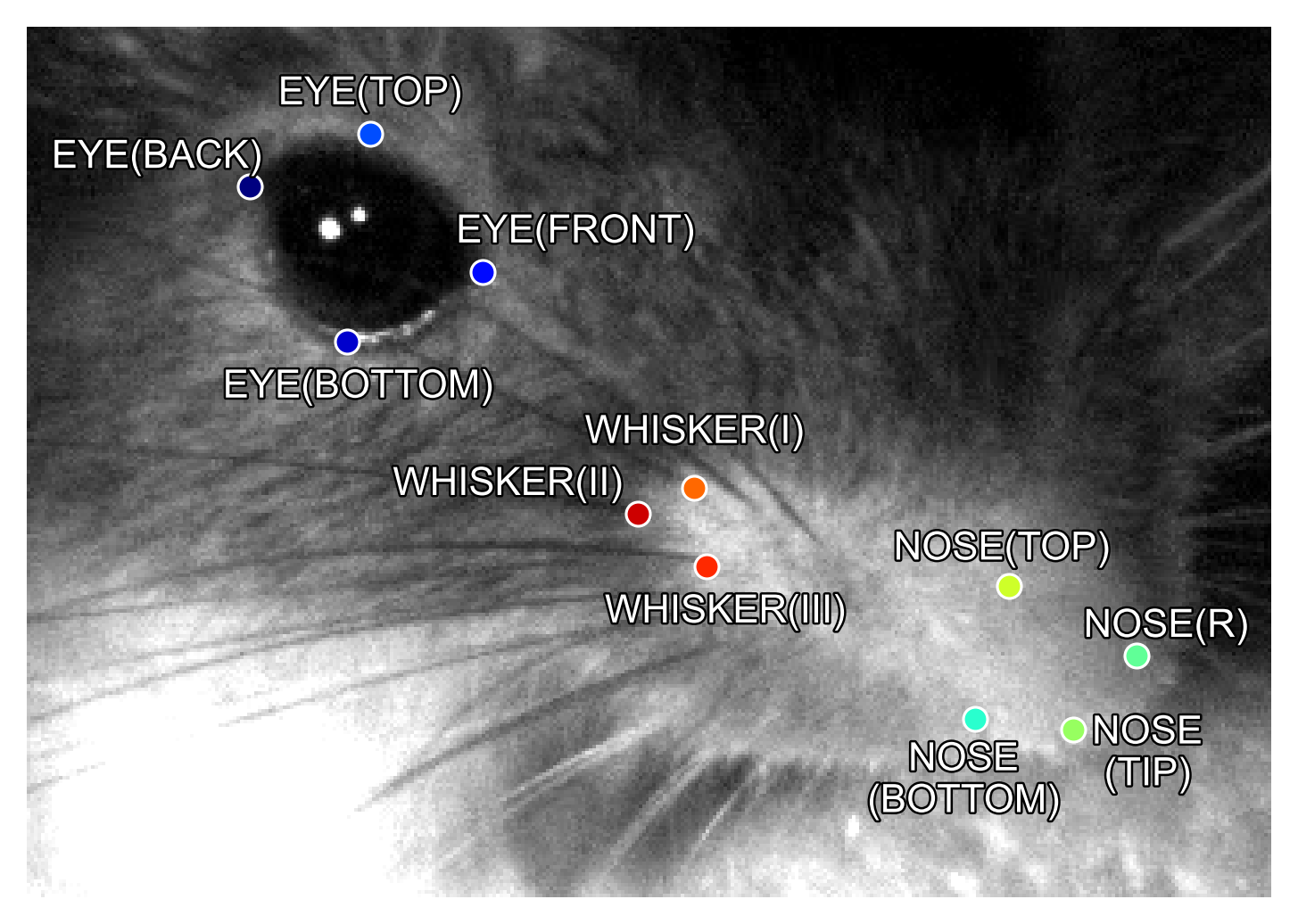

Facemap provides a trained network for tracking distinct keypoints on the mouse face from different camera views (some examples shown below). Check the keypoints box then click process. Next a bounding box will appear -- focus this on the face as shown below. Then the processed keypoints *.h5 file will be saved in the output folder along with the corresponding metadata file *.pkl.

Keypoints will be predicted in the selected bounding box region so please ensure the bounding box focuses on the face. See example frames here.

For more details on using the tracker, please refer to the GUI Instructions. Check out the notebook for processing keypoints in colab.

Facemap aims to provide a simple and easy-to-use tool for tracking mouse orofacial movements. The tracker's performance on new datasets could be further improved by expanding our training set. You can contribute to the model by sharing videos/frames by email: asyeda1[at]jh.edu or stringerc[at]janelia.hhmi.org.

Facemap supports pupil tracking, blink tracking, and running estimation; see more details here. Facemap can also compute the singular value decomposition (SVD) of ROIs on single- and multi-camera videos. The SVD can be computed across the static frames, called movie SVD (movSVD), to extract the spatial components, or over the difference between consecutive frames, called motion SVD (motSVD), to extract the temporal components of the video. The first 500 principal components from the SVD analysis are saved as output along with other variables.
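The distinction between the two SVD variants can be illustrated with NumPy on a small synthetic video. This is a conceptual sketch, not Facemap's implementation: the helper `top_components`, the random data, the mean subtraction, and the use of the absolute frame difference are all assumptions made for the example.

```python
import numpy as np


def top_components(matrix, n_components):
    """SVD of a (pixels x samples) matrix after removing each pixel's mean."""
    centered = matrix - matrix.mean(axis=1, keepdims=True)
    u, s, vt = np.linalg.svd(centered, full_matrices=False)
    spatial = u[:, :n_components]  # pixel weights, one column per component
    temporal = (s[:n_components, None] * vt[:n_components]).T  # one trace per component
    return spatial, temporal


rng = np.random.default_rng(0)
n_frames, height, width = 50, 8, 10
video = rng.random((n_frames, height, width))  # synthetic stand-in for a grayscale ROI

frames = video.reshape(n_frames, -1).T  # (pixels, frames)
# Difference between consecutive frames; the absolute value is an assumption here.
motion = np.abs(np.diff(frames, axis=1))  # (pixels, frames - 1)

mov_spatial, mov_temporal = top_components(frames, n_components=5)  # "movie SVD"
mot_spatial, mot_temporal = top_components(motion, n_components=5)  # "motion SVD"

print(mov_spatial.shape, mov_temporal.shape)
print(mot_spatial.shape, mot_temporal.shape)
```

The motion variant has one fewer time sample than the movie variant because each sample is a difference between two neighboring frames.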

You can draw ROIs to compute the motion/movie SVD within the ROI, and/or compute the full video SVD by checking multivideo. Then check motSVD and/or movSVD and click process. The processed SVD *_proc.npy (and optionally *_proc.mat) file will be saved in the output folder selected.
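A saved `*_proc.npy` file can then be opened in Python. The sketch below assumes the file holds a pickled dictionary, which is why `allow_pickle=True` and `.item()` are needed. So that the example runs without real output, it first writes a stand-in file; the file name `cam1_proc.npy`, the key `motSVD`, and the array shape are illustrative assumptions, so inspect the keys of your own file rather than relying on them.

```python
import os
import tempfile

import numpy as np


def load_proc(path):
    """Load a dictionary that was stored with np.save (requires pickling)."""
    return np.load(path, allow_pickle=True).item()


# Stand-in file so the sketch is runnable without real Facemap output.
# The key name and shape below are assumptions for illustration only.
example = {"motSVD": [np.zeros((100, 500))]}
path = os.path.join(tempfile.mkdtemp(), "cam1_proc.npy")
np.save(path, example, allow_pickle=True)

proc = load_proc(path)
print(sorted(proc.keys()))
```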

For more details, see the SVD Python tutorial or the SVD MATLAB tutorial.

(video with old install instructions)

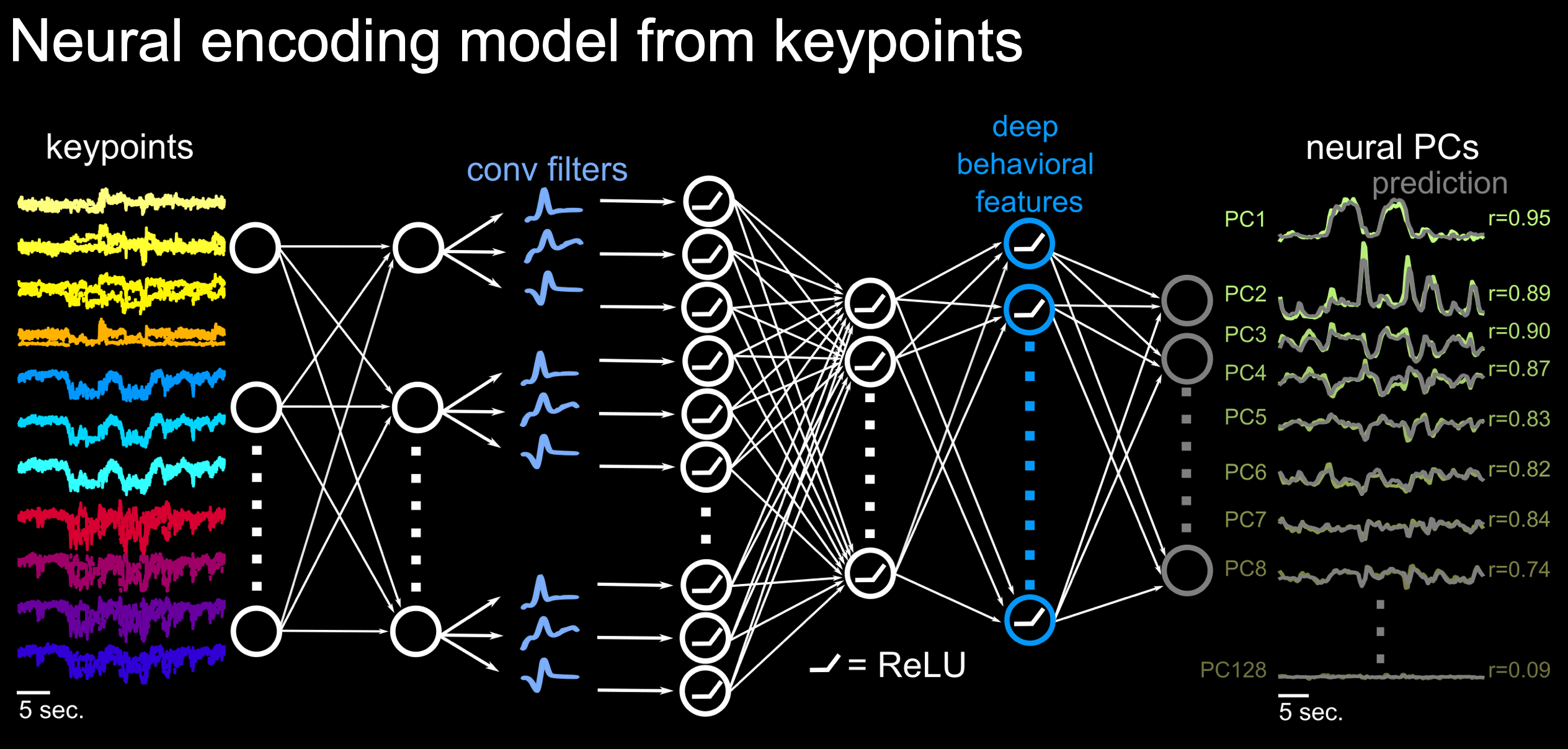

Facemap includes a deep neural network encoding model for predicting neural activity or principal components of neural activity from mouse orofacial pose estimates extracted using the tracker or SVDs.

The encoding model used for prediction is described as follows:

Please see the neural activity prediction tutorial for more details.