Visual Point Cloud Forecasting enables Scalable Autonomous Driving [CVPR 2024 Highlight]

Zetong Yang, Li Chen, Yanan Sun, and Hongyang Li

- Presented by OpenDriveLab at Shanghai AI Lab

- 📬 Primary contact: Zetong Yang ( tomztyang@gmail.com )

- arXiv paper | Video (YouTube, 5min) | Tutorial on World Model (Bilibili)

- CVPR 2024 Autonomous Driving Challenge - Predictive World Model

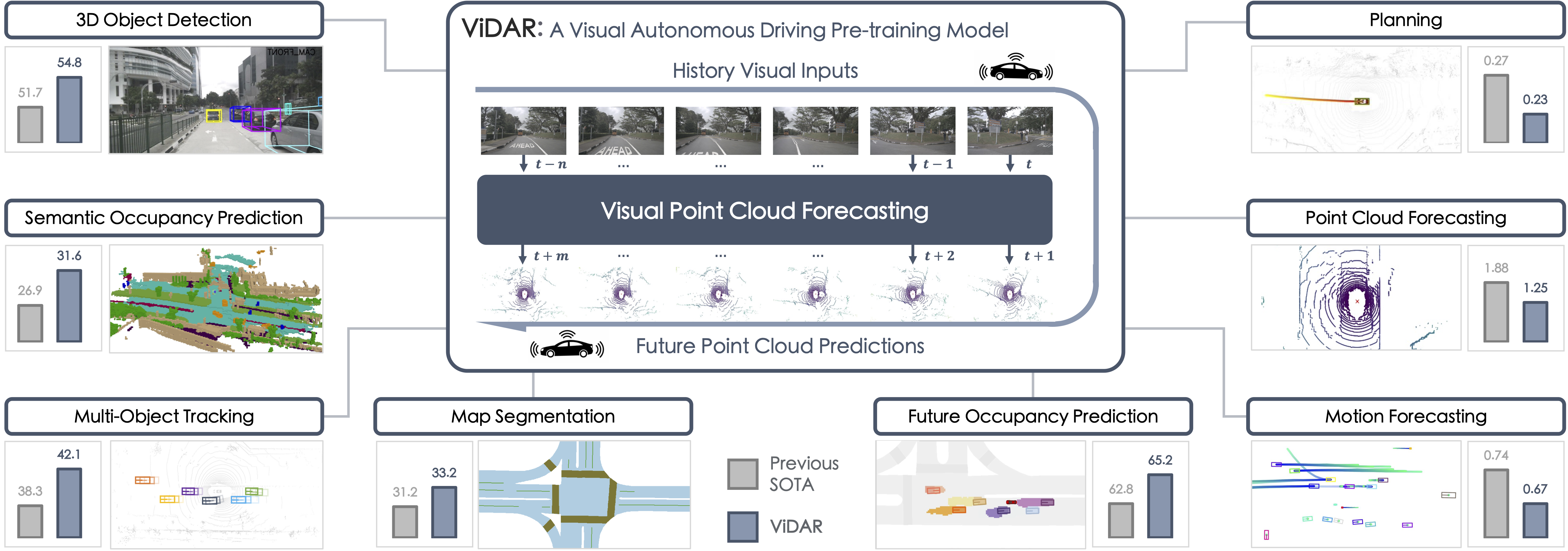

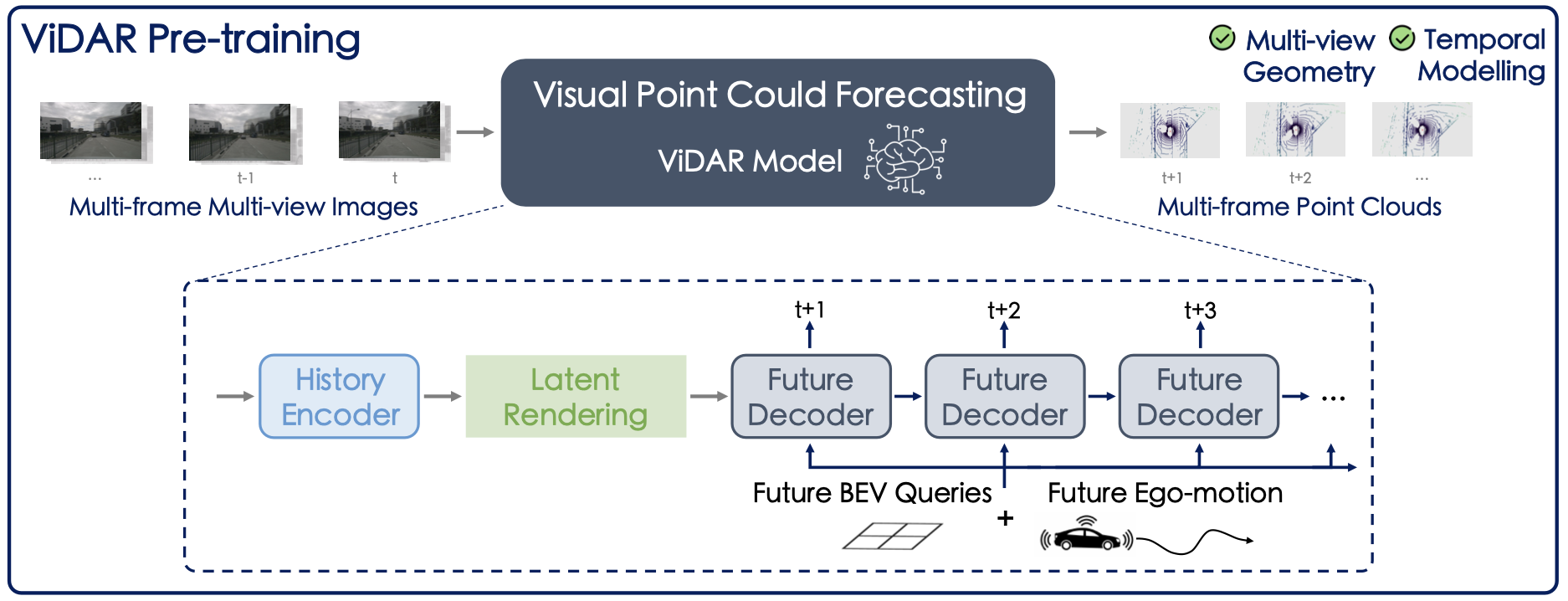

🔥 Visual point cloud forecasting is a new self-supervised pre-training task for end-to-end autonomous driving. It predicts future point clouds from historical visual inputs, jointly modeling 3D geometry and temporal dynamics for simultaneous perception, prediction, and planning.

🌟 ViDAR, the first visual point cloud forecasting architecture.

🏆 Predictive world model, in the form of visual point cloud forecasting, will be a main track in the CVPR 2024 Autonomous Driving Challenge. Please stay tuned for further details!

- [2024/4] 🔥 ViDAR-pretraining on End-to-End Autonomous Driving (UniAD) is released. Please refer to ViDAR-UniAD Page for more information.

- [2024/4] 🔥 ViDAR-pretraining on nuScenes-fullset is released. Please check the configs for pre-training and fine-tuning. Corresponding models are available at pre-trained and fine-tuned.

- [2024/3] 🔥 Predictive world model challenge is launched. Please refer to the link for more details.

- [2024/2] ViDAR code and models initially released.

- [2024/2] ViDAR is accepted by CVPR 2024.

- [2023/12] ViDAR paper released.

Still in progress:

- ViDAR-nuScenes-1/8 training and BEVFormer fine-tuning configurations.

- ViDAR-OpenScene-mini training configurations. (You are welcome to join the predictive world model challenge!)

- ViDAR-nuScenes-full training and BEVFormer full fine-tuning configurations.

- UniAD fine-tuning code and configuration.

- Results and Model Zoo

- Installation

- Prepare Datasets

- Train and Evaluate

- License and Citation

- Related Resources

nuScenes Dataset:

| Pre-train Model | Dataset | Config | CD@1s | CD@2s | CD@3s | models & logs |
|---|---|---|---|---|---|---|
| ViDAR-RN101-nus-1-8-1future | nuScenes (12.5% Data) | vidar-nusc-pretrain-1future | - | - | - | models / logs |
| ViDAR-RN101-nus-1-8-3future | nuScenes (12.5% Data) | vidar-nusc-pretrain-3future | 1.25 | 1.48 | 1.79 | models / logs |
| ViDAR-RN101-nus-full-1future | nuScenes (100% Data) | vidar-nusc-pretrain-1future | - | - | - | models |

- HINT: Before running ViDAR on the nuScenes-full set, first run python tools/merge_nusc_fullset_pkl.py to generate the nuscenes_infos_temporal_traintest.pkl file used for pre-training.

OpenScene Dataset:

| Pre-train Model | Dataset | Config | CD@1s | CD@2s | CD@3s | models & logs |
|---|---|---|---|---|---|---|
| ViDAR-RN101-OpenScene-3future | OpenScene-mini (12.5% Data) | vidar-OpenScene-pretrain-3future-1-8 | 1.41 | 1.57 | 1.78 | models / logs |
| ViDAR-RN101-OpenScene-3future | OpenScene-mini-Full (100% Data) | vidar-OpenScene-pretrain-3future-full | 1.03 | 1.15 | 1.35 | models / logs |
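The CD@1s, CD@2s, and CD@3s columns report the Chamfer distance between predicted and ground-truth point clouds at 1, 2, and 3 seconds into the future. As a rough illustration only, the sketch below computes one common symmetric form of the Chamfer distance with NumPy. The function name `chamfer_distance` and the choice of squared Euclidean distances averaged in each direction are assumptions made here; the repository's own evaluation relies on the bundled chamferdist library and may differ in normalization or point-range filtering.

```python
import numpy as np


def chamfer_distance(pred: np.ndarray, gt: np.ndarray) -> float:
    """Symmetric Chamfer distance between point clouds of shape (N, 3) and (M, 3).

    Assumption: squared Euclidean distances, averaged per direction and summed.
    """
    # Pairwise squared distances between every predicted and ground-truth point: (N, M).
    diff = pred[:, None, :] - gt[None, :, :]
    sq_dist = np.sum(diff ** 2, axis=-1)
    # Mean distance from each point to its nearest neighbour in the other cloud.
    pred_to_gt = sq_dist.min(axis=1).mean()
    gt_to_pred = sq_dist.min(axis=0).mean()
    return float(pred_to_gt + gt_to_pred)


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    gt = rng.uniform(-1.0, 1.0, size=(256, 3))          # synthetic "ground truth"
    pred = gt + rng.normal(scale=0.05, size=gt.shape)   # noisy "prediction"
    print(chamfer_distance(pred, gt))
```

The dense (N, M) distance matrix keeps the sketch short but grows quadratically with the number of points, so it is only suitable for small clouds.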

| Downstream Model | Dataset | Pre-train | Config | NDS | mAP | models & logs |
|---|---|---|---|---|---|---|
| BEVFormer-Base (baseline) | nuScenes (25% Data) | FCOS3D | bevformer-base | 43.40 | 35.47 | models / logs |
| BEVFormer-Base | nuScenes (25% Data) | ViDAR-RN101-nus-1-8-1future | vidar-nusc-finetune-1future | 45.77 | 36.90 | models / logs |
| BEVFormer-Base | nuScenes (25% Data) | ViDAR-RN101-nus-1-8-3future | vidar-nusc-finetune-3future | 45.61 | 36.84 | models / logs |
| BEVFormer-Base (baseline) | nuScenes (100% Data) | FCOS3D | bevformer-base | 51.7 | 41.6 | models |
| BEVFormer-Base | nuScenes (100% Data) | ViDAR-RN101-nus-full-1future | vidar-nusc-finetune-1future | 55.33 | 45.20 | models |
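To read the downstream table as improvements over the FCOS3D-initialized baseline, the short sketch below subtracts the baseline NDS and mAP from each ViDAR-pretrained row. The numbers are copied from the table above; the dictionary layout and the helper name `gains_over_baseline` are illustrative choices made here, not part of the repository.

```python
# (NDS, mAP) pairs copied from the downstream table above.
RESULTS = {
    "nuScenes 25%": {
        "baseline": (43.40, 35.47),
        "ViDAR-RN101-nus-1-8-1future": (45.77, 36.90),
        "ViDAR-RN101-nus-1-8-3future": (45.61, 36.84),
    },
    "nuScenes 100%": {
        "baseline": (51.7, 41.6),
        "ViDAR-RN101-nus-full-1future": (55.33, 45.20),
    },
}


def gains_over_baseline(rows):
    """Return {pretrain_name: (delta_NDS, delta_mAP)} relative to the 'baseline' row."""
    base_nds, base_map = rows["baseline"]
    return {
        name: (round(nds - base_nds, 2), round(m_ap - base_map, 2))
        for name, (nds, m_ap) in rows.items()
        if name != "baseline"
    }


if __name__ == "__main__":
    for split, rows in RESULTS.items():
        for name, (d_nds, d_map) in gains_over_baseline(rows).items():
            print(f"{split} | {name}: NDS +{d_nds:.2f}, mAP +{d_map:.2f}")
```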

Please refer to ViDAR-UniAD page.

The installation steps are similar to those of BEVFormer. For convenience, we list them below:

conda create -n vidar python=3.8 -y

conda activate vidar

pip install torch==1.10.1+cu111 torchvision==0.11.2+cu111 torchaudio==0.10.1 -f https://download.pytorch.org/whl/cu111/torch_stable.html

conda install -c omgarcia gcc-6 # (optional) gcc-6.2

Install mm-series packages.

pip install mmcv-full==1.4.0

pip install mmdet==2.14.0

pip install mmsegmentation==0.14.1

# Install mmdetection3d from source codes.

git clone https://github.com/open-mmlab/mmdetection3d.git

cd mmdetection3d

git checkout v0.17.1 # Other versions may not be compatible.

python setup.py install

Install Detectron2 and Timm.

pip install einops fvcore seaborn iopath==0.1.9 timm==0.6.13 typing-extensions==4.5.0 pylint ipython==8.12 numpy==1.19.5 matplotlib==3.5.2 numba==0.48.0 pandas==1.4.4 scikit-image==0.19.3 setuptools==59.5.0

python -m pip install 'git+https://github.com/facebookresearch/detectron2.git'

Set up the ViDAR project.

git clone https://github.com/OpenDriveLab/ViDAR

cd ViDAR

mkdir pretrained

cd pretrained && wget https://github.com/zhiqi-li/storage/releases/download/v1.0/r101_dcn_fcos3d_pretrain.pth && cd ..

# Install chamferdistance library.

cd third_lib/chamfer_dist/chamferdist/

pip install .

We recommend using 8 A100 GPUs for training. GPU memory usage is around 63 GB during pre-training.
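Before launching a long training run, it can help to confirm that the versions pinned in the installation steps are the ones actually installed. The sketch below is one way to do that with the standard-library `importlib.metadata` module. The `EXPECTED` table only repeats pins from the pip commands above, the helper name `check_versions` is made up for this example, and how a local suffix such as `+cu111` is reported can depend on how the wheel was built, so treat a mismatch as a prompt to look closer rather than a definite error.

```python
from importlib import metadata

# Pins copied from the pip commands above (distribution name -> expected version).
EXPECTED = {
    "torch": "1.10.1+cu111",
    "torchvision": "0.11.2+cu111",
    "mmcv-full": "1.4.0",
    "mmdet": "2.14.0",
    "mmsegmentation": "0.14.1",
    "timm": "0.6.13",
    "numpy": "1.19.5",
}


def check_versions(expected, get_version=metadata.version):
    """Return {name: (expected, installed_or_None)} for every package that differs."""
    mismatches = {}
    for name, want in expected.items():
        try:
            have = get_version(name)
        except metadata.PackageNotFoundError:
            have = None  # package is not installed in this environment
        if have != want:
            mismatches[name] = (want, have)
    return mismatches


if __name__ == "__main__":
    problems = check_versions(EXPECTED)
    for name, (want, have) in problems.items():
        print(f"{name}: expected {want}, found {have or 'not installed'}")
    if not problems:
        print("All pinned packages match.")
```

The `get_version` parameter exists only so the check can be exercised with a stand-in lookup; in normal use the default is sufficient.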

- HINT: To save GPU memory, you can change supervise_all_future=True to False, and use smaller values for vidar_head_pred_history_frame_num and vidar_head_pred_future_frame_num. For example, setting supervise_all_future=False, vidar_head_pred_history_frame_num=0, vidar_head_pred_future_frame_num=0, and vidar_head_per_frame_loss_weight=(1.0,) reduces the GPU memory consumption of vidar-pretrain-3future-model to ~34 GB. An example configuration is provided at link.

- Full-nuScenes-Training: To pre-train ViDAR on the full nuScenes dataset, first run python tools/merge_nusc_fullset_pkl.py to generate the nuscenes_infos_temporal_traintest.pkl file used for pre-training.
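The memory-saving values from the HINT above can be kept together as a small Python fragment. This is only a sketch: the dictionary name `memory_saving_overrides` and the helper `format_overrides` are invented here, and this README does not say where each setting lives inside the ViDAR config files (top-level variable or nested key), so the linked example configuration remains the reference.

```python
# Values quoted in the HINT above; the dict itself is a hypothetical container.
memory_saving_overrides = {
    "supervise_all_future": False,
    "vidar_head_pred_history_frame_num": 0,
    "vidar_head_pred_future_frame_num": 0,
    # One-element tuple: the trailing comma is required, (1.0) would be a plain float.
    "vidar_head_per_frame_loss_weight": (1.0,),
}


def format_overrides(overrides):
    """Render the overrides as Python assignment lines for pasting into a config file."""
    return "\n".join(f"{key} = {value!r}" for key, value in overrides.items())


if __name__ == "__main__":
    print(format_overrides(memory_saving_overrides))
```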

CONFIG=path/to/config.py

GPU_NUM=8

./tools/dist_train.sh ${CONFIG} ${GPU_NUM}

CONFIG=path/to/vidar_config.py

CKPT=path/to/checkpoint.pth

GPU_NUM=8

./tools/dist_test.sh ${CONFIG} ${CKPT} ${GPU_NUM}

CONFIG=path/to/vidar_config.py

CKPT=path/to/checkpoint.pth

GPU_NUM=1

./tools/dist_test.sh ${CONFIG} ${CKPT} ${GPU_NUM} \
  --cfg-options 'model._viz_pcd_flag=True' 'model._viz_pcd_path=/path/to/output'

All assets and code are under the Apache 2.0 license unless specified otherwise.

If this work is helpful for your research, please consider citing the following BibTeX entry.

@inproceedings{yang2023vidar,
  title={Visual Point Cloud Forecasting enables Scalable Autonomous Driving},
  author={Yang, Zetong and Chen, Li and Sun, Yanan and Li, Hongyang},
  booktitle={Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition},
  year={2024}
}

We acknowledge all the open-source contributors for the following projects to make this work possible: