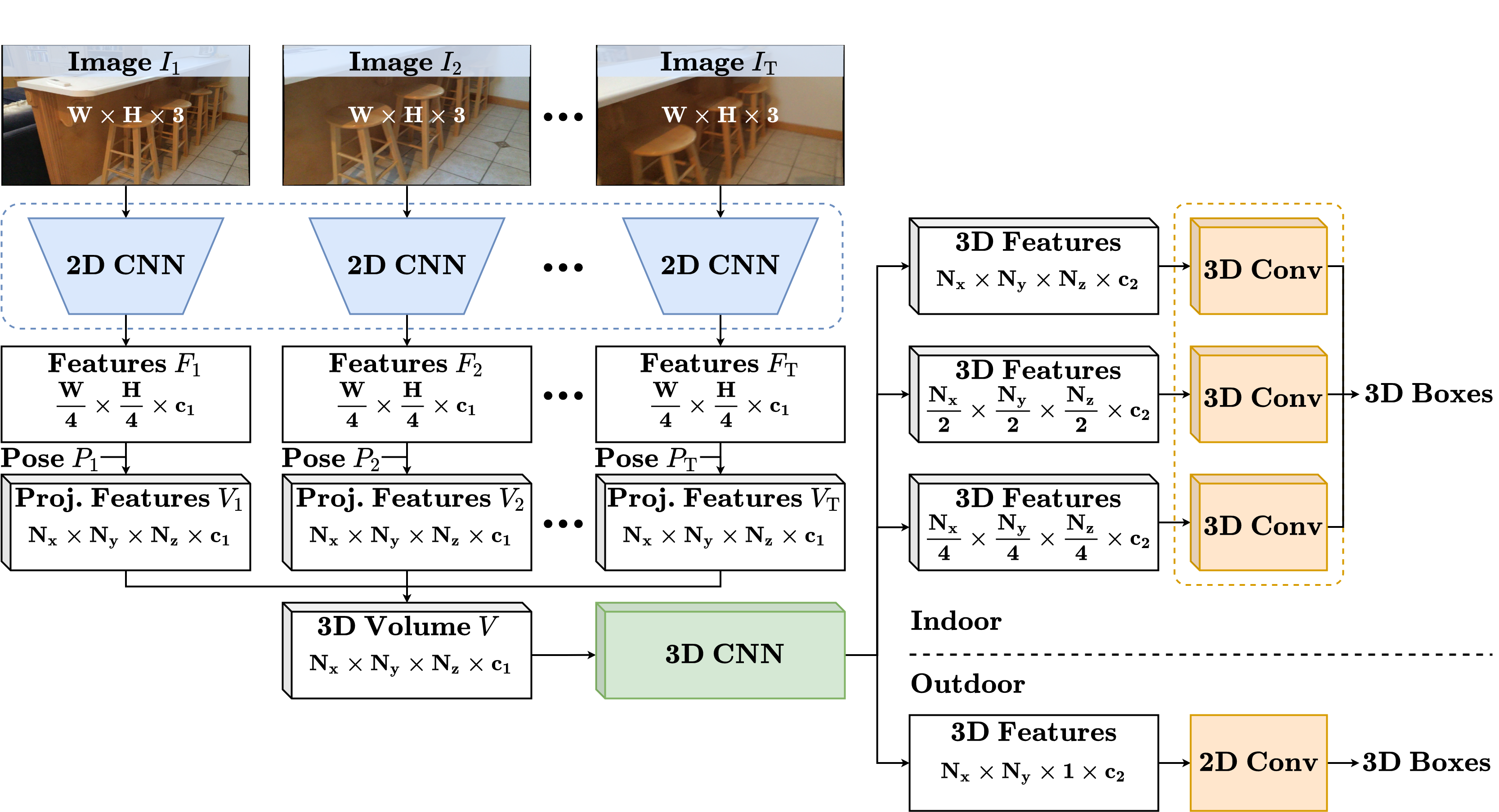

ImVoxelNet: Image to Voxels Projection for Monocular and Multi-View General-Purpose 3D Object Detection

News:

- 🔥 August, 2022. ImVoxelNet for SUN RGB-D is now supported in mmdetection3d.
- 🔥 October, 2021. Our paper has been accepted at WACV 2022. We simplify the 3D neck to make indoor models much faster and more accurate. For example, this improves ScanNet mAP by more than 2%. Please find updated configs in `configs/imvoxelnet/*_fast.py` and models.
- 🔥 August, 2021. We adapt center sampling for indoor detection. For example, this improves ScanNet mAP by more than 5%. Please find updated configs in `configs/imvoxelnet/*_top27.py` and models.
- 🔥 July, 2021. We update ScanNet image preprocessing both here and in mmdetection3d.
- 🔥 June, 2021. ImVoxelNet for KITTI is now supported in mmdetection3d.

This repository contains the implementation of the monocular/multi-view 3D object detector ImVoxelNet, introduced in our paper:

ImVoxelNet: Image to Voxels Projection for Monocular and Multi-View General-Purpose 3D Object Detection

Danila Rukhovich, Anna Vorontsova, Anton Konushin

Samsung Research

https://arxiv.org/abs/2106.01178

For convenience, we provide a Dockerfile. Alternatively, you can install all required packages manually.

This implementation is based on the mmdetection3d framework.

Please refer to the original installation guide install.md, replacing open-mmlab/mmdetection3d with saic-vul/imvoxelnet.

Also, rotated_iou should be installed with these 4 commands.

Most of the ImVoxelNet-related code is located in the following files:

- `detectors/imvoxelnet.py`
- `necks/imvoxelnet.py`
- `dense_heads/imvoxel_head.py`
- `pipelines/multi_view.py`
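The image-to-voxel projection named in the title can be illustrated with a short NumPy sketch. It is a simplified illustration under our own assumptions (pinhole cameras given as 3×4 matrices `K @ [R | t]`, a grid whose `origin` is its minimum corner, nearest-pixel sampling, and plain averaging over the views that see each voxel), not the code in the files listed above; `voxel_centers` and `backproject` are hypothetical names.

```python
import numpy as np


def voxel_centers(origin, voxel_size, n_voxels):
    """Return an (N, 3) array with the centers of an axis-aligned voxel grid.

    Sketch assumption: origin is the minimum corner of the grid,
    voxel_size is a scalar edge length and n_voxels is (nx, ny, nz).
    """
    nx, ny, nz = n_voxels
    grid = np.meshgrid(np.arange(nx), np.arange(ny), np.arange(nz), indexing="ij")
    idx = np.stack(grid, axis=-1).reshape(-1, 3)  # integer voxel indices, z varies fastest
    return np.asarray(origin, dtype=float) + (idx + 0.5) * voxel_size


def backproject(features, points, projection):
    """Fill voxels with 2D features averaged over the views that see them.

    features:   (V, C, H, W) feature maps, one per view
    points:     (N, 3) voxel centers in world coordinates
    projection: (V, 3, 4) camera matrices K @ [R | t], one per view
    Returns an (N, C) array; voxels seen by no view stay zero.
    """
    n_views, channels, height, width = features.shape
    homo = np.concatenate([points, np.ones((len(points), 1))], axis=1)  # (N, 4)
    volume = np.zeros((len(points), channels))
    counts = np.zeros(len(points))
    for v in range(n_views):
        uvz = homo @ projection[v].T                  # (N, 3) homogeneous pixel coords
        depth = uvz[:, 2]
        in_front = depth > 1e-6                       # points behind the camera are invisible
        safe_depth = np.where(in_front, depth, 1.0)   # avoid dividing by zero or negative depth
        u = np.round(uvz[:, 0] / safe_depth).astype(int)  # nearest pixel column
        r = np.round(uvz[:, 1] / safe_depth).astype(int)  # nearest pixel row
        valid = in_front & (u >= 0) & (u < width) & (r >= 0) & (r < height)
        volume[valid] += features[v][:, r[valid], u[valid]].T  # (n_valid, C)
        counts[valid] += 1
    # Average over the views that actually see each voxel.
    return volume / np.maximum(counts, 1)[:, None]
```

For a monocular input, V = 1 and every visible voxel simply copies the feature under its projection. A full detector would typically reshape the (N, C) output back to the (nx, ny, nz) grid before applying 3D convolutions; that step is omitted here.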

We support three benchmarks based on the SUN RGB-D dataset.

- For the VoteNet benchmark with 10 object categories, follow the instructions in sunrgbd.
- For the PerspectiveNet benchmark with 30 object categories, the same instructions apply; you only need to set the `dataset` argument to `sunrgbd_monocular` when running `create_data.py`.
- The Total3DUnderstanding benchmark involves detecting objects of 37 categories along with camera pose and room layout estimation. Download the preprocessed data as train.json and val.json and put them in `./data/sunrgbd`. Then run `python tools/data_converter/sunrgbd_total.py`.

For ScanNet, please follow the instructions in scannet. For KITTI and nuScenes, please follow the instructions in getting_started.md.

Please see getting_started.md for basic usage examples.

Training

To start training, run `dist_train.sh` with an ImVoxelNet config and the number of GPUs (8 here):

```bash
bash tools/dist_train.sh configs/imvoxelnet/imvoxelnet_kitti.py 8
```

Testing

Test a pre-trained model using `dist_test.sh` with an ImVoxelNet config:

```bash
bash tools/dist_test.sh configs/imvoxelnet/imvoxelnet_kitti.py \
    work_dirs/imvoxelnet_kitti/latest.pth 8 --eval mAP
```

Visualization

Visualizations can be created with the test script.

For better visualizations, you may set `score_thr` in the config to 0.15 or more:

```bash
python tools/test.py configs/imvoxelnet/imvoxelnet_kitti.py \
    work_dirs/imvoxelnet_kitti/latest.pth --show \
    --show-dir work_dirs/imvoxelnet_kitti
```

v2 adds center sampling for the indoor scenario. v3 simplifies the 3D neck for the indoor scenario. The differences are discussed in the v2 and v3 preprints.

| Dataset | Object Classes | Version | Result | Download |
|---|---|---|---|---|
| SUN RGB-D | 37 from Total3DUnderstanding | v1 | mAP@0.15: 41.5 | model \| log \| config |
| | | v2 | mAP@0.15: 42.7 | model \| log \| config |
| | | v3 | mAP@0.15: 43.7 | model \| log \| config |
| SUN RGB-D | 30 from PerspectiveNet | v1 | mAP@0.15: 44.9 | model \| log \| config |
| | | v2 | mAP@0.15: 47.2 | model \| log \| config |
| | | v3 | mAP@0.15: 48.7 | model \| log \| config |
| SUN RGB-D | 10 from VoteNet | v1 | mAP@0.25: 38.8 | model \| log \| config |
| | | v2 | mAP@0.25: 39.4 | model \| log \| config |
| | | v3 | mAP@0.25: 40.7 | model \| log \| config |
| ScanNet | 18 from VoteNet | v1 | mAP@0.25: 40.6 | model \| log \| config |
| | | v2 | mAP@0.25: 45.7 | model \| log \| config |
| | | v3 | mAP@0.25: 48.1 | model \| log \| config |
| KITTI | Car | v1 | AP@0.7: 17.8 | model \| log \| config |
| nuScenes | Car | v1 | AP: 51.8 | model \| log \| config |
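Each SUN RGB-D, ScanNet and KITTI result above is an average precision in which a detection counts as correct only if its overlap with a ground-truth box reaches the threshold after the @ (for example 0.25 for mAP@0.25); the nuScenes AP is defined differently. The sketch below illustrates that matching step under simplifying assumptions: boxes are axis-aligned and given as min/max corners, whereas the benchmarks use oriented boxes, and the integration of precision/recall into AP is left out. `iou_3d_axis_aligned` and `match_detections` are hypothetical helpers, not mmdetection3d's evaluation API.

```python
import numpy as np


def iou_3d_axis_aligned(a, b):
    """IoU of two boxes given as (x_min, y_min, z_min, x_max, y_max, z_max).

    Sketch assumption: boxes are axis-aligned (no yaw), unlike the benchmark boxes.
    """
    a = np.asarray(a, dtype=float)
    b = np.asarray(b, dtype=float)
    overlap = np.clip(np.minimum(a[3:], b[3:]) - np.maximum(a[:3], b[:3]), 0.0, None)
    inter = float(np.prod(overlap))
    union = float(np.prod(a[3:] - a[:3]) + np.prod(b[3:] - b[:3])) - inter
    return inter / union if union > 0 else 0.0


def match_detections(preds, scores, gts, iou_thr=0.25):
    """Mark each prediction as a true positive (True) or false positive (False).

    Predictions are visited from highest to lowest score; a prediction is a true
    positive if its best-overlapping ground-truth box reaches iou_thr and has not
    already been claimed by a higher-scoring prediction.
    """
    tp = np.zeros(len(preds), dtype=bool)
    if len(gts) == 0:
        return tp
    claimed = np.zeros(len(gts), dtype=bool)
    for i in np.argsort(-np.asarray(scores, dtype=float)):
        ious = np.array([iou_3d_axis_aligned(preds[i], g) for g in gts])
        j = int(np.argmax(ious))
        if ious[j] >= iou_thr and not claimed[j]:
            tp[i] = True
            claimed[j] = True
    return tp


# Usage: a perfect box plus a shifted duplicate of the same object.
gt = [[0, 0, 0, 1, 1, 1]]
preds = [[0, 0, 0, 1, 1, 1], [0.5, 0, 0, 1.5, 1, 1]]
print(iou_3d_axis_aligned(preds[1], gt[0]))     # about 0.333
print(match_detections(preds, [0.9, 0.8], gt))  # [ True False]
```

Visiting predictions in score order and letting each ground-truth box be claimed only once follows the usual VOC-style protocol, so a duplicate detection of an already-found object counts as a false positive even when its IoU clears the threshold.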

If you find this work useful for your research, please cite our paper:

```bibtex
@inproceedings{rukhovich2022imvoxelnet,
  title={Imvoxelnet: Image to voxels projection for monocular and multi-view general-purpose 3d object detection},
  author={Rukhovich, Danila and Vorontsova, Anna and Konushin, Anton},
  booktitle={Proceedings of the IEEE/CVF Winter Conference on Applications of Computer Vision},
  pages={2397--2406},
  year={2022}
}
```