Code repository for "MM3DGS SLAM: Multi-modal 3D Gaussian Splatting for SLAM Using Vision, Depth, and Inertial Measurements".

Lisong C. Sun*

·

Neel P. Bhatt*

·

Jonathan C. Liu

·

Zhiwen Fan

Zhangyang Wang

·

Todd E. Humphreys

·

Ufuk Topcu

* Equal contribution and co-first authors

Project Page | Video | Paper | Dataset

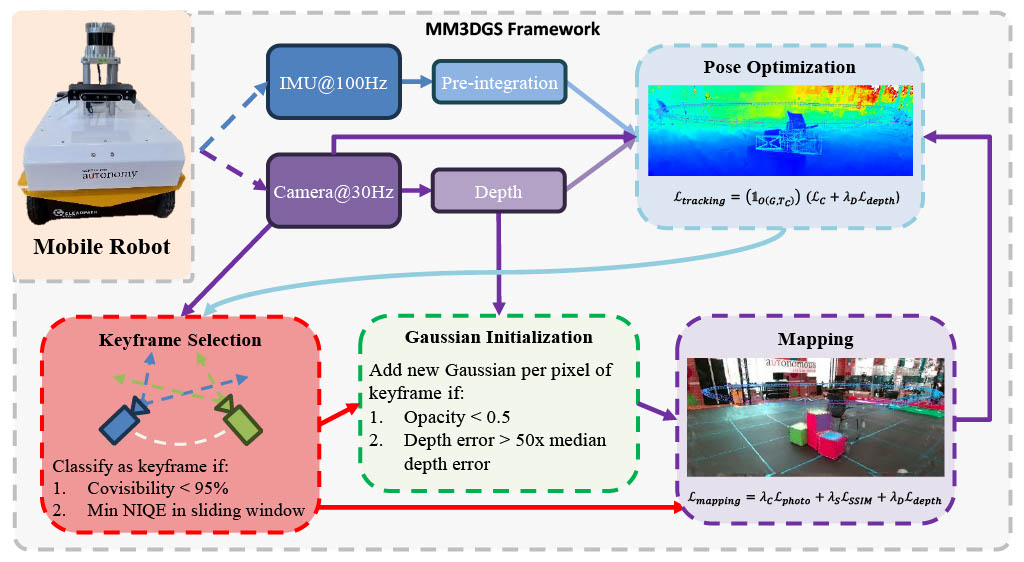

As shown above, we present the framework for Multi-modal 3D Gaussian Splatting for SLAM. We utilize inertial measurements, RGB images, and depth measurements to create a SLAM method using 3D Gaussians. Through the effort of integrating multiple modalities, our SLAM method performs with high tracking and mapping accuracy.

The repository contains submodules, thus please check it out with

git clone git@github.com:VITA-Group/MM3DGS-SLAM.git --recursiveThe simplest way to install dependencies is with anaconda

conda create -n mm3dgs python=3.10

conda activate mm3dgs

conda install -c "nvidia/label/cuda-11.8.0" cuda-toolkit

conda env update -f environment.ymlTo run MM3DGS, simply run the top level module with the path to the config file.

python slam_top.py --config <config path>Included in this repo are the configs for UT-MM and TUM-RGBD datasets. For example,

python slam_top.py --config ./configs/UTMM.ymlNote that the directory to the dataset must first be added to the config file before running, e.g.,

inputdir: /datasets/UTMM/ # Replace with the directory in which you downloaded the datasetOutputs, including any enabled debug, will be put in the ./output directory.

After running the above script, trajectory and image evaluation will automatically be recorded.

Trajectory error can be recalculated through scripts/eval_traj.py.

This script reads a results.npz file containing numpy arrays of estimate

and ground truth poses.

It also creates trajectory plots and animations from the paper.

python scripts/eval_traj.py --path <output path>Command Line Arguments for scripts/eval_traj.py

Path to the output directory containing a results.npz file

Add this flag to animate the plot

Image evaluation results can be recalculated with scripts/eval_image.py.

This script loads a saved checkpoint and renders the map from estimated poses,

comparing them with ground truth images from the dataset.

python scripts/eval_image.py -c <config path>Command Line Arguments for scripts/eval_image.py

Path to the config file

Optional. Path to the output directory if not defined in config file

Optional. Iteration checkpoint to evaluate if not defined in config file

Further, the output map can be visualized using scripts/visualizer.py

python scripts/visualizer.py -c <config path> -m <output path> -i <iteration>Command Line Arguments for scripts/visualizer.py

Path to the config file

Path to the output directory containing a results.npz file and point_cloud directory

Iteration number of the output

Add this flag to animate the visualizer along the trajectory path

To access our dataset, visit Hugging Face. To see videos of the content, please visit our [Project Page].

A script to convert the UT-MM rosbag data to usable .txt files is included in

scripts/bag2data.py. Note that ROS

is required to read the camera-to-imu transformation.

python scripts/bag2data.py --path <rosbag dir path> --scene <scene name>However, the transformation is constant between our datasets, which we have provided.

./tf/tf.txt

Command Line Arguments for scripts/bag2data.py

Path to the directory containing rosbags

Name of the scene to read

Combined, the datasets contain 8387 images, 2796 LiDAR scans, and 27971 IMU measurements. The following is a quick overview of the topics that can be found within each bag file:

/vrpn_client_node/Jackal_Latest/pose : geometry_msgs/PoseStamped (Pose Coordinates, 100hz)

/realsense/color/image_raw/compressed : sensor_msgs/CompressedImage (RGB Image, 30hz)

/realsense/depth/image_rect_raw : sensor_msgs/Image (Depth Image, 30hz)

/microstrain/imu/data : sensor_msgs/Imu (Imu Measurements, 100hz)

/ouster/points : sensor_msgs/PointCloud2 (LiDAR Point Clouds, 10hz)

To visualize the dataset, ROS is needed. Some scripts are provided in the UT_MM_Scripts directory.

roscore

rosrun rviz rviz -d UT_MM_Scripts/configs/jackal.rviz

rqt --clear-config --perspective-file UT_MM_Scripts/configs/rqt_plots.perspective

rosbag play --clock *.bag --pause

python3 imu_preintegration.py

If you find our paper useful or interesting, please consider giving a star ⭐ and citing the following paper 📝.

@misc{sun2024mm3dgs,

title={MM3DGS SLAM: Multi-modal 3D Gaussian Splatting for SLAM Using Vision, Depth, and Inertial Measurements},

author={Lisong C. Sun and Neel P. Bhatt and Jonathan C. Liu and Zhiwen Fan and Zhangyang Wang and Todd E. Humphreys and Ufuk Topcu},

year={2024},

eprint={2404.00923},

archivePrefix={arXiv},

primaryClass={cs.CV}

}