This repository is the official implementation of PCT.

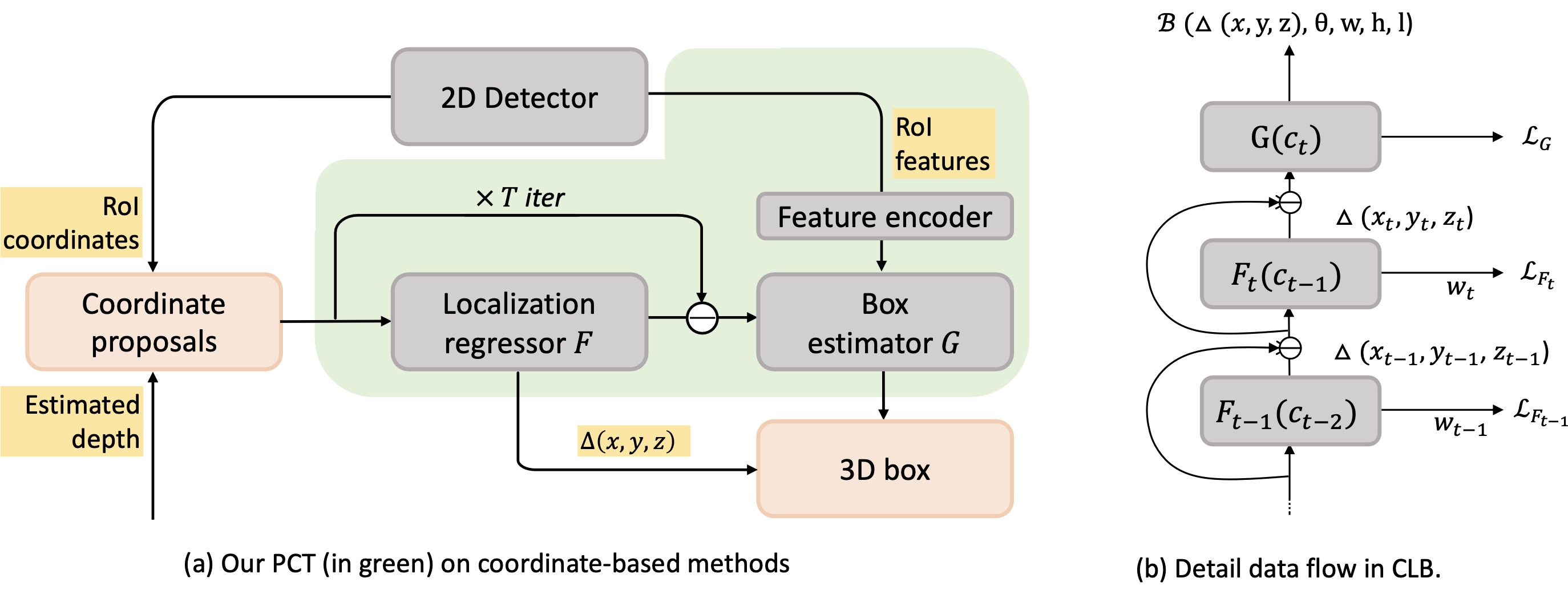

In this paper, we propose a novel and lightweight approach, dubbed Progressive Coordinate Transforms (PCT), to facilitate learning coordinate representations for monocular 3D object detection. Specifically, a localization boosting mechanism with confidence-aware loss is introduced to progressively refine the localization prediction. In addition, semantic image representation is also exploited to compensate for the usage of patch proposals. Despite being lightweight and simple, our strategy allows us to establish a new state-of-the-art among the monocular 3D detectors on the competitive KITTI benchmark. At the same time, our proposed PCT shows great generalization to most coordinate-based 3D detection frameworks.

Download this repository (tested with Python 3.7, PyTorch 1.3.1, and Ubuntu 16.04.7). It also depends on packages such as cv2, yaml, and tqdm; install them accordingly:

    cd #root
    pip install -r requirements

Then, compile the evaluation script:

    cd #root/tools/kitti_eval
    sh compile.sh

First, download the KITTI dataset and organize the data as follows (* indicates an empty directory to store the data generated in subsequent steps):

    #ROOT
      |data
        |KITTI
          |2d_detections
          |ImageSets
          |pickle_files *
          |object
            |training
              |calib
              |image_2
              |label
              |depth *
              |pseudo_lidar (optional for Pseudo-LiDAR)*
              |velodyne (optional for FPointNet)
            |testing
              |calib
              |image_2
              |depth *
              |pseudo_lidar (optional for Pseudo-LiDAR)*
              |velodyne (optional for FPointNet)
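Before running the later steps, it can help to confirm that this layout is in place. The following is a minimal sketch and not part of this repository: the function name `check_layout` and the split into "required" and "generated" directories are assumptions, while the directory names are copied from the tree above. The optional `pseudo_lidar` and `velodyne` directories are left out.

```python
from pathlib import Path

# Directories expected to hold downloaded data (names copied from the tree above).
REQUIRED = [
    "data/KITTI/2d_detections",
    "data/KITTI/ImageSets",
    "data/KITTI/object/training/calib",
    "data/KITTI/object/training/image_2",
    "data/KITTI/object/training/label",
    "data/KITTI/object/testing/calib",
    "data/KITTI/object/testing/image_2",
]

# Directories marked with * in the tree: empty placeholders filled by later steps.
GENERATED = [
    "data/KITTI/pickle_files",
    "data/KITTI/object/training/depth",
    "data/KITTI/object/testing/depth",
]


def check_layout(root):
    """Create the placeholder directories and return the required ones that are missing."""
    root = Path(root)
    for rel in GENERATED:
        (root / rel).mkdir(parents=True, exist_ok=True)
    return [rel for rel in REQUIRED if not (root / rel).is_dir()]
```

Calling `check_layout(".")` from `#ROOT` returns an empty list once all required directories exist; any path it returns still needs to be downloaded or created.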

Second, prepare your depth maps and put them in data/KITTI/object/training/depth. For ease of use, we also provide estimated depth maps, generated with the pretrained models provided by DORN and Pseudo-LiDAR.

| Monocular (DORN) | Stereo (PSMNet) |
|---|---|
| trainval (~1.6G), test (~1.6G) | trainval (~2.5G) |
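After copying or unpacking depth maps, a quick coverage check can catch frames that were missed. This is a hedged sketch rather than a script shipped with the repository: it assumes each depth map shares its file stem (the frame id) with the corresponding file in `image_2`, and it makes no assumption about file extensions. The function name `frames_without_depth` is hypothetical.

```python
from pathlib import Path


def frames_without_depth(split_dir):
    """Return sorted frame ids that have an image in image_2 but no file in depth."""
    split_dir = Path(split_dir)
    image_ids = {p.stem for p in (split_dir / "image_2").iterdir() if p.is_file()}
    depth_dir = split_dir / "depth"
    if depth_dir.is_dir():
        depth_ids = {p.stem for p in depth_dir.iterdir() if p.is_file()}
    else:
        depth_ids = set()  # no depth directory yet: every frame is missing
    return sorted(image_ids - depth_ids)
```

For example, `frames_without_depth("data/KITTI/object/training")` lists the training frames that still lack a depth map.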

Then, generate image 2D features for the 2D bounding boxes and put them in data/KITTI/pickle_files/org. We train our 2D detector following the 2D detector in RTM3D. You can also use your own 2D detector for training and inference.

Finally, generate the training data using the provided scripts:

    cd #root/tools/data_prepare
    python patch_data_prepare_val.py --gen_train --gen_val --gen_val_detection --car_only
    mv *.pickle ../../data/KITTI/pickle_files

We also provide Waymo Usage for monocular 3D detection.
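To sanity-check the generated KITTI pickle files before training, one option is to list what each file contains. The sketch below is an illustration only: the internal structure of the files is not described here, so it simply reads every object stored in a file (a pickle file may hold several objects written one after another) and reports each object's type and length. The function name `summarize_pickle` is hypothetical. Only unpickle files you generated yourself, since loading a pickle can execute arbitrary code.

```python
import pickle


def summarize_pickle(path):
    """Load every object stored in a pickle file and describe each one briefly."""
    summaries = []
    with open(path, "rb") as f:
        while True:
            try:
                obj = pickle.load(f)
            except EOFError:
                break  # reached the end of the file
            size = len(obj) if hasattr(obj, "__len__") else None
            summaries.append((type(obj).__name__, size))
    return summaries
```

Each entry of the returned list is a `(type name, length)` pair, with `None` as the length for objects that have no `len()`.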

Move to the working directory and train the model (you also need to modify the path of the pickle files in the config file):

    cd #root
    cd experiments/pct
    python ../../tools/train_val.py --config config_val.yaml

Generate the results using the trained model:

    python ../../tools/train_val.py --config config_val.yaml --e

Then evaluate the generated results using:

    ../../tools/kitti_eval/evaluate_object_3d_offline_ap11 ../../data/KITTI/object/training/label_2 ./output

or

    ../../tools/kitti_eval/evaluate_object_3d_offline_ap40 ../../data/KITTI/object/training/label_2 ./output

Because data preparation is tedious, we also provide the generated results for evaluation. Unzip output.zip and then execute the evaluation commands above. The results are:

| Models | AP3D11@mod. | AP3D11@easy | AP3D11@hard |
|---|---|---|---|
| PatchNet + PCT | 27.53 / 34.65 | 38.39 / 47.16 | 24.44 / 28.47 |
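The `_ap11` and `_ap40` suffixes of the two evaluation binaries refer, in the usual KITTI convention, to the number of recall positions at which interpolated precision is averaged: 11 positions (0, 0.1, ..., 1) or 40 positions (1/40, 2/40, ..., 1). The sketch below illustrates that averaging on a toy precision-recall curve. It is a simplified Python illustration, not the repository's compiled evaluation code, and the function name `interpolated_ap` is hypothetical.

```python
def interpolated_ap(recalls, precisions, num_points):
    """Average the interpolated precision over 11 or 40 recall positions."""
    if num_points == 11:
        positions = [i / 10 for i in range(11)]      # 0, 0.1, ..., 1
    elif num_points == 40:
        positions = [i / 40 for i in range(1, 41)]   # 1/40, 2/40, ..., 1
    else:
        raise ValueError("num_points must be 11 or 40")

    total = 0.0
    for r in positions:
        # Interpolated precision: best precision at any recall >= r (0 if none).
        reachable = [p for rec, p in zip(recalls, precisions) if rec >= r - 1e-9]
        total += max(reachable, default=0.0)
    return total / len(positions)


# Toy curve: precision 1.0 up to recall 0.5, then 0.5 up to recall 1.0.
ap11 = interpolated_ap([0.5, 1.0], [1.0, 0.5], 11)
ap40 = interpolated_ap([0.5, 1.0], [1.0, 0.5], 40)
```

On this toy curve the 11-point average counts the recall-0 position, where interpolated precision is at its maximum, so `ap11` comes out slightly higher than `ap40`. That is why the two metrics are not directly comparable.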

This code benefits from the excellent work PatchNet, and uses the off-the-shelf models provided by DORN and RTM3D.

    @article{wang2021pct,
      title={Progressive Coordinate Transforms for Monocular 3D Object Detection},
      author={Li Wang and Li Zhang and Yi Zhu and Zhi Zhang and Tong He and Mu Li and Xiangyang Xue},
      journal={arXiv preprint arXiv:2108.05793},
      year={2021}
    }

For questions regarding PCT-3D, feel free to post here or directly contact the authors (wangli16@fudan.edu.cn).

See CONTRIBUTING for more information.

This project is licensed under the Apache-2.0 License.