Official Repository of the paper: Latent Consistency Models: Synthesizing High-Resolution Images with Few-Step Inference.

Official Repository of the paper: LCM-LoRA: A Universal Stable-Diffusion Acceleration Module.

Project Page: https://latent-consistency-models.github.io

LCM Community: Join our LCM Discord channels for discussions. Coders are welcome to contribute.

- (❤️New) 2023/11/10 Training Scripts are released!! Check here.

- (🤯New) 2023/11/10 Training-free acceleration LCM-LoRA is born! See our technical report here and Hugging Face blog here.

- (⚡️New) 2023/11/10 LCM has a major update! We release 3 LCM-LoRA (SD-XL, SSD-1B, SD-V1.5), see here.

- (🚀New) 2023/11/10 LCM has a major update! We release 2 Full Param-tuned LCM (SD-XL, SSD-1B), see here.

- (🔥New) 2023/11/10 We support LCM Inference with C# and ONNX Runtime now! Thanks to @saddam213! Check the link here.

- (🔥New) 2023/11/01 Real-Time Latent Consistency Models is out!! Github link here. Thanks @radames for the really cool Huggingface🤗 demo Real-Time Image-to-Image, Real-Time Text-to-Image. Twitter/X Link.

- (🔥New) 2023/10/28 We support Img2Img for LCM! Please refer to "🔥 Image2Image Demos".

- (🔥New) 2023/10/25 We have official LCM Pipeline and LCM Scheduler in 🧨 Diffusers library now! Check the new "Usage".

- (🔥New) 2023/10/24 Simple Streamlit UI for local use: see the link. Thanks to @akx.

- (🔥New) 2023/10/24 We support SD-Webui and ComfyUI now!! Thanks to @0xbitches. See the link: SD-Webui and ComfyUI.

- (🔥New) 2023/10/23 Running on Windows/Linux CPU is also supported! Thanks to @rupeshs. See the link.

- (🔥New) 2023/10/22 Google Colab is supported now. Thanks to @camenduru. See the link: Colab

- (🔥New) 2023/10/21 We support local gradio demo now. LCM can run locally!! Please refer to the "Local gradio Demos".

- (🔥New) 2023/10/19 We provide a demo of LCM in 🤗 Hugging Face Space. Try it here.

- (🔥New) 2023/10/19 We provide the LCM model (Dreamshaper_v7) in 🤗 Hugging Face. Download here.

- (🔥New) 2023/10/19 LCM is integrated in 🧨 Diffusers library. Please refer to the "Usage".

We support Img2Img now! Try the impressive img2img demos here: Replicate, SD-webui, ComfyUI, Colab

Local gradio for img2img is on the way!

To run the model locally, you can download the "local_gradio" folder:

- Install PyTorch (CUDA). macOS users can download the "MPS" version of PyTorch. Please refer to: https://pytorch.org. If you are using an Intel GPU, install the Intel Extension for PyTorch as well.

- Install the main library:

pip install diffusers transformers accelerate gradio==3.48.0

- Launch the gradio demo (macOS users need to set device="mps" in app.py; Intel GPU users need to set device="xpu" in app.py):

python app.py
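The device choice described in the launch step can be sketched as a small helper. This is only an illustration and is not part of app.py: the function names `pick_device` and `detect_device` are hypothetical, the preference order (CUDA, then MPS, then XPU) is an assumption, and so is the "cpu" fallback.

```python
def pick_device(cuda: bool, mps: bool, xpu: bool) -> str:
    """Return a device string for app.py, preferring CUDA, then MPS, then XPU.

    The ordering and the "cpu" fallback are assumptions made for this sketch.
    """
    if cuda:
        return "cuda"
    if mps:
        return "mps"
    if xpu:
        return "xpu"
    return "cpu"


def detect_device() -> str:
    """Probe the local PyTorch install; returns "cpu" if torch is not installed."""
    try:
        import torch
    except ImportError:
        return "cpu"
    cuda = torch.cuda.is_available()
    # torch.backends.mps and torch.xpu are missing on some builds, so guard both.
    mps_backend = getattr(torch.backends, "mps", None)
    mps = bool(mps_backend is not None and mps_backend.is_available())
    xpu_module = getattr(torch, "xpu", None)
    xpu = bool(xpu_module is not None and xpu_module.is_available())
    return pick_device(cuda, mps, xpu)


if __name__ == "__main__":
    # Prints the value one would set as `device` in app.py.
    print(detect_device())
```

The printed string is what you would assign to `device` in app.py before launching.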

Our Hugging Face Demo and Model are released! Latent Consistency Models are supported in 🧨 diffusers.

LCM Model Download: LCM_Dreamshaper_v7

For Chinese users, download LCM here:

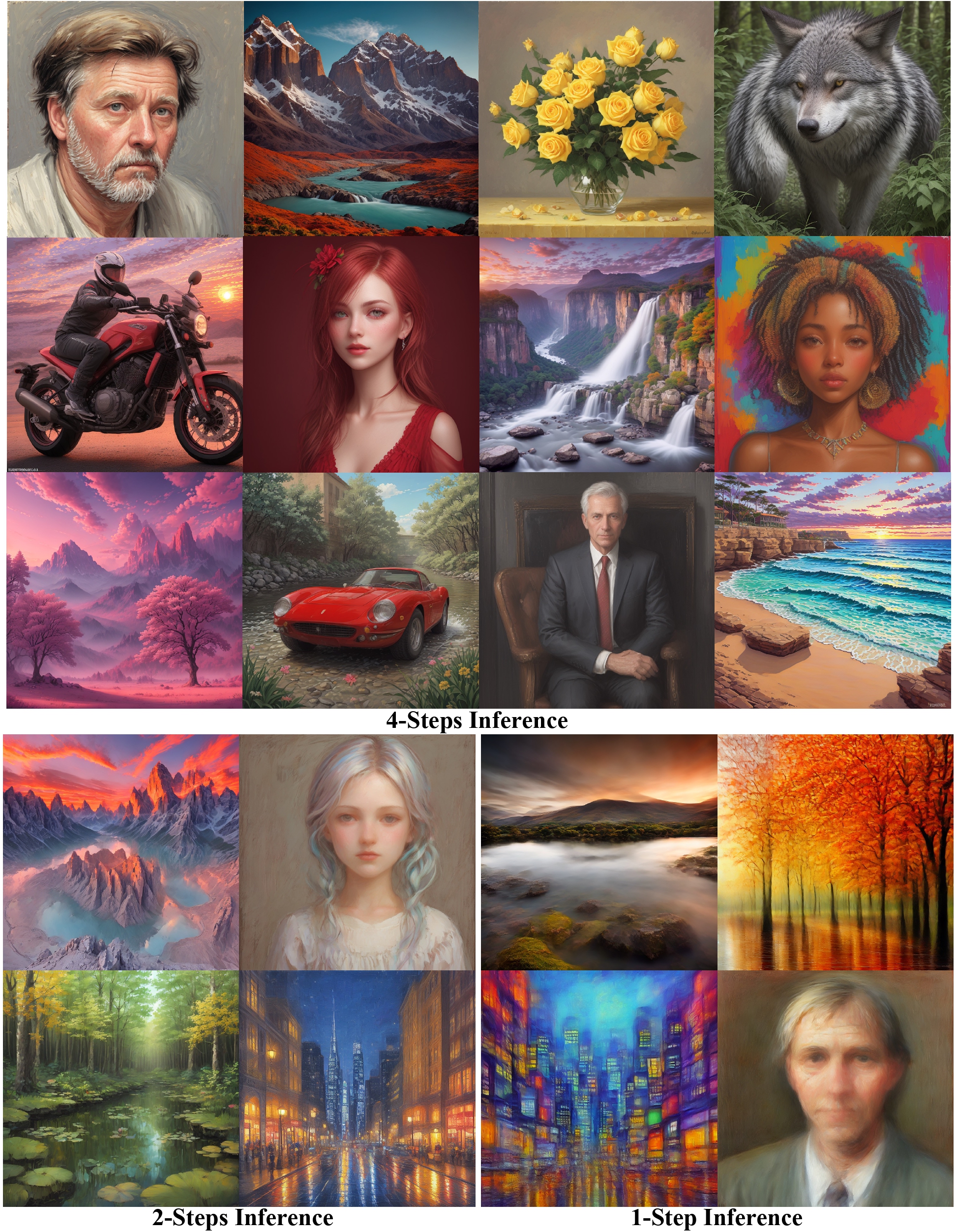

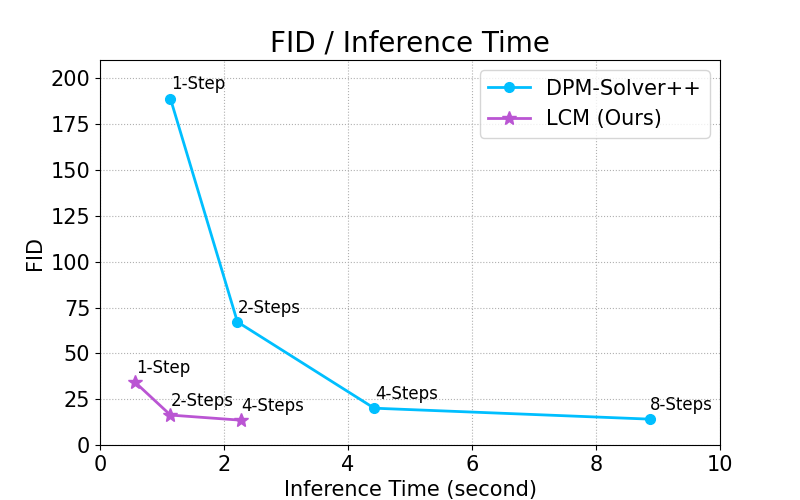

By distilling classifier-free guidance into the model's input, LCM can generate high-quality images in a very short inference time. We compare inference times at 768 x 768 resolution, CFG scale w=8, and batch size 4, using an A800 GPU.
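To reproduce a timing comparison of this kind on your own hardware, a wall-clock harness along the following lines may help. It is a generic sketch, not the script used for the comparison above; the helper name `time_inference` and its `warmup` and `repeats` parameters are hypothetical.

```python
import time
from typing import Callable, List


def time_inference(run: Callable[[], object], warmup: int = 1, repeats: int = 3) -> List[float]:
    """Call `run` untimed `warmup` times, then return seconds for each of `repeats` timed calls.

    `run` is expected to block until its result is ready. A pipeline call that
    returns PIL images does so, because the images are copied back to the host.
    """
    for _ in range(warmup):
        run()  # warm-up calls absorb one-off costs such as kernel compilation
    timings = []
    for _ in range(repeats):
        start = time.perf_counter()
        run()
        timings.append(time.perf_counter() - start)
    return timings


# Hypothetical usage with a loaded pipeline `pipe` and a `prompt` string,
# mirroring the settings quoted above (768 x 768, batch size 4):
#
#   timings = time_inference(
#       lambda: pipe(prompt=[prompt] * 4, height=768, width=768,
#                    num_inference_steps=4, guidance_scale=8.0)
#   )
#   print(min(timings))
```

Reporting the minimum or the median of the returned timings tends to be less noisy than a single run.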

We have official LCM Pipeline and LCM Scheduler in 🧨 Diffusers library now! The older usages will be deprecated.

You can try out Latent Consistency Models directly on:

To run the model yourself, you can leverage the 🧨 Diffusers library:

- Install the library:

pip install --upgrade diffusers # make sure to use at least diffusers >= 0.22

pip install transformers accelerate

- Run the model:

from diffusers import DiffusionPipeline

import torch

pipe = DiffusionPipeline.from_pretrained("SimianLuo/LCM_Dreamshaper_v7")

# To save GPU memory, torch.float16 can be used, but it may compromise image quality.

pipe.to(torch_device="cuda", torch_dtype=torch.float32)

prompt = "Self-portrait oil painting, a beautiful cyborg with golden hair, 8k"

# Can be set to 1~50 steps. LCM supports fast inference even with <= 4 steps. Recommended: 1~8 steps.

num_inference_steps = 4

images = pipe(prompt=prompt, num_inference_steps=num_inference_steps, guidance_scale=8.0, lcm_origin_steps=50, output_type="pil").images

For more information, please have a look at the official docs: 👉 https://huggingface.co/docs/diffusers/api/pipelines/latent_consistency_models#latent-consistency-models
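The install step asks for diffusers >= 0.22. A quick pre-flight check before loading the pipeline could look like the sketch below. It uses only the standard library; the helper names `parse_major_minor`, `meets_minimum`, and `installed_diffusers_version` are hypothetical and not part of diffusers.

```python
import re
from importlib import metadata
from typing import Optional, Tuple


def parse_major_minor(version: str) -> Tuple[int, int]:
    """Extract (major, minor) from a version string such as "0.22.1" or "0.23.0.dev0"."""
    match = re.match(r"(\d+)(?:\.(\d+))?", version)
    if match is None:
        raise ValueError(f"unrecognised version string: {version!r}")
    return int(match.group(1)), int(match.group(2) or 0)


def meets_minimum(version: str, minimum: Tuple[int, int] = (0, 22)) -> bool:
    """Return True if `version` is at least `minimum` (default 0.22, per the install step)."""
    return parse_major_minor(version) >= minimum


def installed_diffusers_version() -> Optional[str]:
    """Return the installed diffusers version, or None if the package is not installed."""
    try:
        return metadata.version("diffusers")
    except metadata.PackageNotFoundError:
        return None


if __name__ == "__main__":
    found = installed_diffusers_version()
    if found is None:
        print("diffusers is not installed; run: pip install --upgrade diffusers")
    elif not meets_minimum(found):
        print(f"diffusers {found} is older than 0.22; run: pip install --upgrade diffusers")
    else:
        print(f"diffusers {found} is recent enough")
```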

We have official LCM Pipeline and LCM Scheduler in 🧨 Diffusers library now! The older usages will be deprecated, but you can still use them by adding revision="fb9c5d1" to from_pretrained(...).

To run the model yourself, you can leverage the 🧨 Diffusers library:

- Install the library:

pip install diffusers transformers accelerate

- Run the model:

from diffusers import DiffusionPipeline

import torch

pipe = DiffusionPipeline.from_pretrained("SimianLuo/LCM_Dreamshaper_v7", custom_pipeline="latent_consistency_txt2img", custom_revision="main", revision="fb9c5d")

# To save GPU memory, torch.float16 can be used, but it may compromise image quality.

pipe.to(torch_device="cuda", torch_dtype=torch.float32)

prompt = "Self-portrait oil painting, a beautiful cyborg with golden hair, 8k"

# Can be set to 1~50 steps. LCM supports fast inference even with <= 4 steps. Recommended: 1~8 steps.

num_inference_steps = 4

images = pipe(prompt=prompt, num_inference_steps=num_inference_steps, guidance_scale=8.0, lcm_origin_steps=50, output_type="pil").images

LCM:

@misc{luo2023latent,

title={Latent Consistency Models: Synthesizing High-Resolution Images with Few-Step Inference},

author={Simian Luo and Yiqin Tan and Longbo Huang and Jian Li and Hang Zhao},

year={2023},

eprint={2310.04378},

archivePrefix={arXiv},

primaryClass={cs.CV}

}

LCM-LoRA:

@article{luo2023lcm,

title={LCM-LoRA: A Universal Stable-Diffusion Acceleration Module},

author={Luo, Simian and Tan, Yiqin and Patil, Suraj and Gu, Daniel and von Platen, Patrick and Passos, Apolin{\'a}rio and Huang, Longbo and Li, Jian and Zhao, Hang},

journal={arXiv preprint arXiv:2311.05556},

year={2023}

}