Dejia Xu1, Hanwen Jiang1, Zhangyang Wang1

Please check docker/README.MD

OR you can follow the steps below:

The code is tested with Python 3.9, CUDA 11.3, and PyTorch 1.10.1. Additional dependencies include:

h5py

kornia

torch

torchvision

omegaconf

torchmetrics==0.10.3

fvcore

iopath

submitit

pathlib

transforms3d

numpy

plyfile

easydict

scikit-image

matplotlib

pyyaml

tabulate

tqdm

loguru

opencv-python
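To compare a local environment with the tested versions listed above, a small check along these lines may help. This is a minimal sketch, not part of the repository: the helper names (`matches`, `collect_versions`, `report`) are hypothetical, and it assumes the CUDA version of interest is the one PyTorch was built against (`torch.version.cuda`).

```python
import platform
from importlib import metadata

# Versions reported as tested in this README.
TESTED = {"python": "3.9", "torch": "1.10.1", "cuda": "11.3"}


def matches(found, expected):
    """Return True if `found` equals `expected` or extends it (e.g. 3.9.7 vs 3.9).

    Local build suffixes such as "+cu113" are ignored.
    """
    if found is None:
        return False
    core = found.split("+")[0]
    return core == expected or core.startswith(expected + ".")


def collect_versions():
    """Gather local versions; an entry is None when it cannot be determined."""
    found = {"python": platform.python_version(), "torch": None, "cuda": None}
    try:
        found["torch"] = metadata.version("torch")
    except metadata.PackageNotFoundError:
        return found  # PyTorch is not installed
    try:
        import torch

        found["cuda"] = torch.version.cuda  # None for CPU-only builds
    except ImportError:
        pass
    return found


def report(found, tested=TESTED):
    """Return one status line per component."""
    lines = []
    for name, expected in tested.items():
        status = "ok" if matches(found.get(name), expected) else "differs"
        lines.append(f"{name}: found {found.get(name)}, tested {expected} -> {status}")
    return lines
```

Running `print("\n".join(report(collect_versions())))` lists each component with its local and tested version. A "differs" status is only a hint; other versions may still work.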

--extra-index-url https://pypi.nvidia.com

pip3 install -r ./requirements.txt

Download SegmentAnything Model to weights

wget https://dl.fbaipublicfiles.com/segment_anything/sam_vit_h_4b8939.pth -O weights/sam_vit_h_4b8939.pth

Download DINOv2 Model to weights

wget https://dl.fbaipublicfiles.com/dinov2/dinov2_vits14/dinov2_vits14_pretrain.pth -O weights/dinov2_vits14.pth

Prepare datasets (Updated dataset download links)

Download the datasets from Hugging Face: the OnePose and OnePose_LowTexture datasets, as well as the YCB-Video and LINEMOD datasets, are available at the links below. Extract them into ./data.

- LM_dataset: https://huggingface.co/datasets/paulpanwang/POPE_Dataset/resolve/main/LM_dataset.zip

- onepose: https://huggingface.co/datasets/paulpanwang/POPE_Dataset/resolve/main/onepose.zip

- onepose_plusplus: https://huggingface.co/datasets/paulpanwang/POPE_Dataset/resolve/main/onepose_plusplus.zip

- ycbv: https://huggingface.co/datasets/paulpanwang/POPE_Dataset/resolve/main/ycbv.zip
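The dataset archives listed above can also be fetched and unpacked from Python. The following is a minimal sketch under stated assumptions: the function names are hypothetical, only the four URLs above are used, and each archive is assumed to unpack cleanly under ./data (the actual archive layout has not been verified here).

```python
import urllib.request
import zipfile
from pathlib import Path

# Base URL and archive names taken from the list above.
HF_BASE = "https://huggingface.co/datasets/paulpanwang/POPE_Dataset/resolve/main"
DATASETS = ["LM_dataset", "onepose", "onepose_plusplus", "ycbv"]


def dataset_url(name):
    """Build the download URL for one dataset archive."""
    return f"{HF_BASE}/{name}.zip"


def fetch(url, dest):
    """Download `url` to `dest`, skipping files that already exist."""
    dest = Path(dest)
    if dest.exists():
        return dest
    dest.parent.mkdir(parents=True, exist_ok=True)
    # Download to a temporary name so an interrupted transfer is not
    # mistaken for a complete file on the next run.
    tmp = dest.with_name(dest.name + ".part")
    urllib.request.urlretrieve(url, str(tmp))
    tmp.rename(dest)
    return dest


def extract(archive, target_dir):
    """Unpack a zip archive into `target_dir` and return the member names."""
    target_dir = Path(target_dir)
    target_dir.mkdir(parents=True, exist_ok=True)
    with zipfile.ZipFile(archive) as zf:
        zf.extractall(target_dir)
        return zf.namelist()


def download_all(data_dir="data", names=DATASETS):
    """Fetch and unpack every listed archive into `data_dir`."""
    for name in names:
        archive = fetch(dataset_url(name), Path(data_dir) / f"{name}.zip")
        extract(archive, data_dir)
```

Calling `download_all()` from the repository root would download each archive into ./data and extract it there. Because `fetch` skips files that are already present, an interrupted run can be restarted without repeating finished downloads.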

To evaluate on the LINEMOD dataset, download the real training data, test data, and 3D object models from CDPN, along with the detection results produced by YOLOv5, and extract them into ./data.

The directory should be organized in the following structure:

|--📂data

| |--- 📂ycbv

| |--- 📂OnePose_LowTexture

| |--- 📂demos

| |--- 📂onepose

| |--- 📂LM_dataset

| | |--- 📂bbox_2d

| | |--- 📂color

| | |--- 📂color_full

| | |--- 📂intrin

| | |--- 📂intrin_ba

| | |--- 📂poses_ba

| | |--- 📜box3d_corners.txt
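Before running the demos or evaluations, it can be useful to confirm that the expected folders are in place. The following is a minimal sketch, assuming the top-level layout shown above and the weight file names from the download commands; `missing_paths` is a hypothetical helper, not part of the repository.

```python
from pathlib import Path

# Top-level entries from the tree above, plus the two weight files.
# Paths are relative to the repository root; deeper entries can be appended.
EXPECTED = [
    "data/ycbv",
    "data/OnePose_LowTexture",
    "data/demos",
    "data/onepose",
    "data/LM_dataset",
    "weights/sam_vit_h_4b8939.pth",
    "weights/dinov2_vits14.pth",
]


def missing_paths(root=".", expected=EXPECTED):
    """Return the expected paths that do not exist under `root`."""
    root = Path(root)
    return [p for p in expected if not (root / p).exists()]
```

For example, `missing_paths()` run from the repository root returns an empty list once all datasets and weights are in place, and otherwise lists what still needs to be downloaded or extracted.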

The code was recently tidied up for release and may still contain minor bugs. Please feel free to open an issue.

bash demo.sh

# Demo1: visualize DINOv2 features

python3 visual_dinov2.py

# Demo2: visualize Segment Anything Model output

python3 visual_sam.py

# Demo3: visualize 3D BBox

python3 visual_3dbbox.py

python3 eval_linemod_json.py

python3 eval_onepose_json.py

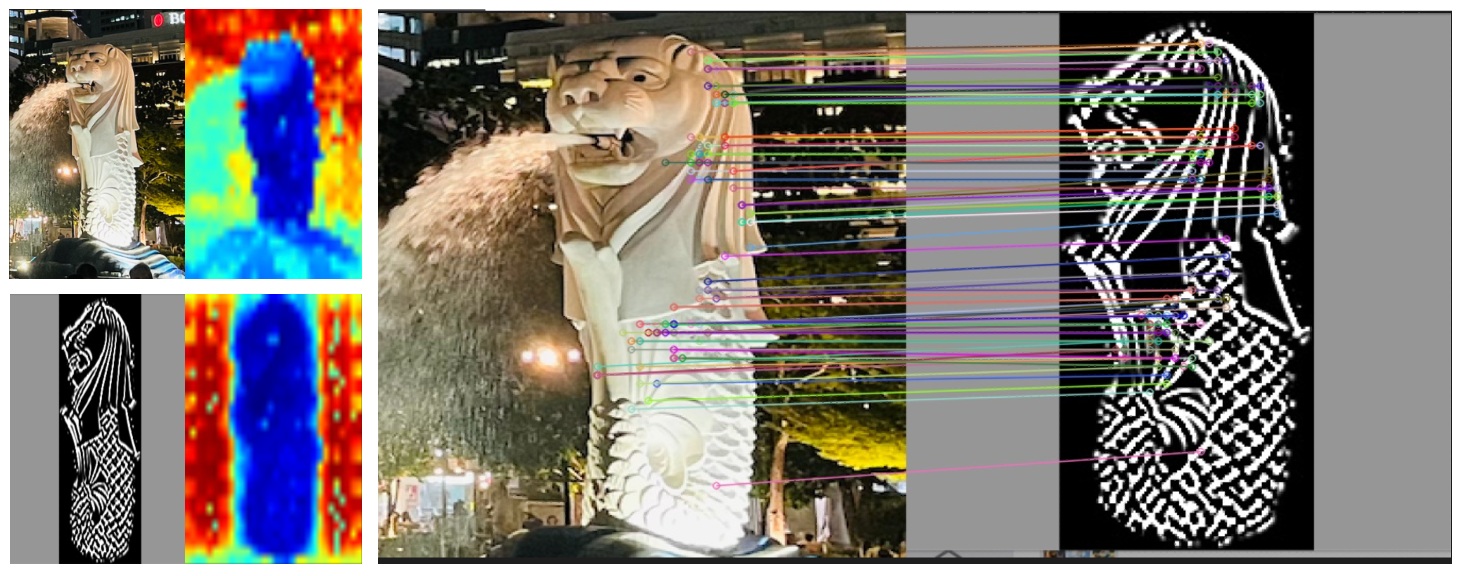

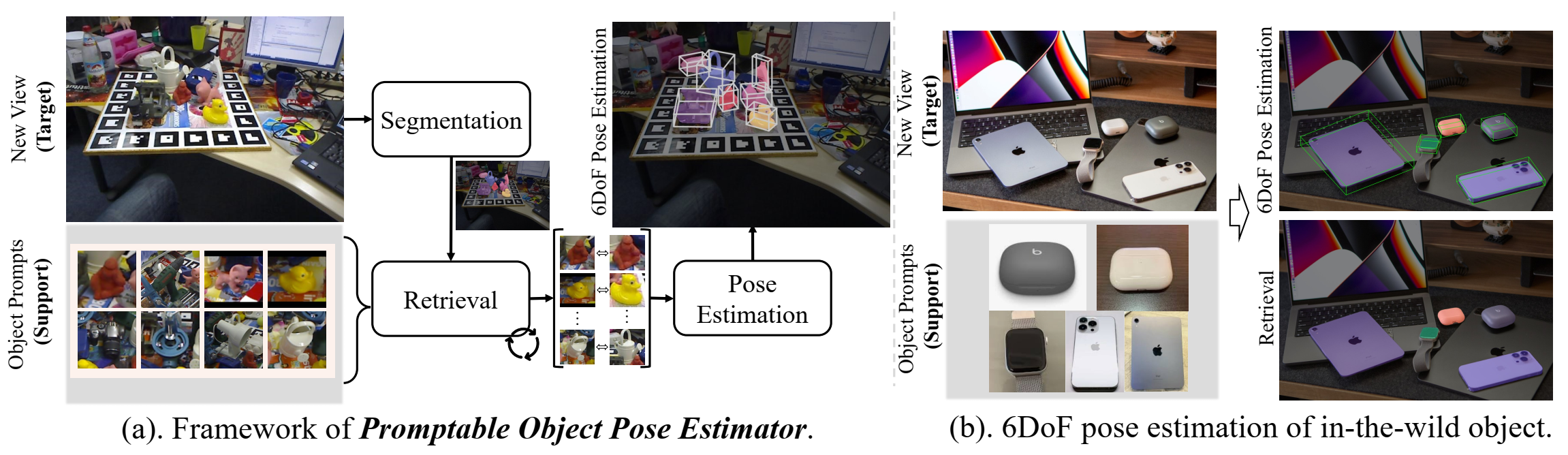

python3 eval_ycb_json.py

Some visual examples of promptable object pose estimation test cases in outdoor scenes, indoor scenes, and scenes with severe occlusions.

We also conduct a more challenging evaluation using an edge map as the reference, which further demonstrates the robustness of POPE (DINOv2 and Matcher).

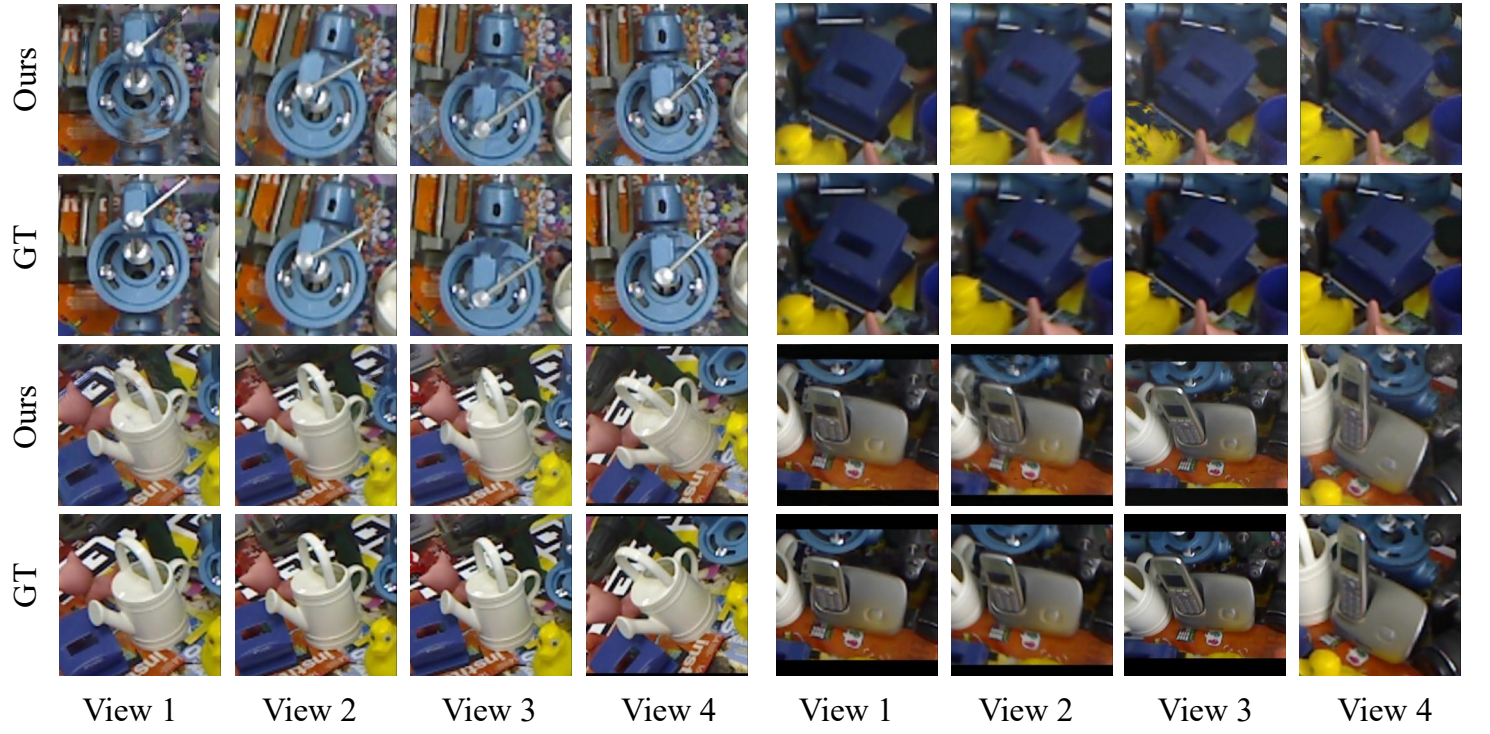

We show an application to novel view synthesis: by leveraging the estimated object poses, our method generates photo-realistic rendering results. We employ the estimated multi-view poses obtained from our POPE model, in combination with a pre-trained and generalizable Neural Radiance Field (GNT and Render).

We show visualizations on the LINEMOD, YCB-Video, OnePose, and OnePose++ datasets, with comparisons against LoFTR and Gen6D.

If you find this repo helpful, please consider citing:

@article{fan2023pope,

title={POPE: 6-DoF Promptable Pose Estimation of Any Object, in Any Scene, with One Reference},

author={Fan, Zhiwen and Pan, Panwang and Wang, Peihao and Jiang, Yifan and Xu, Dejia and Jiang, Hanwen and Wang, Zhangyang},

journal={arXiv preprint arXiv:2305.15727},

year={2023}

}