ReHLine is designed to be a computationally efficient and practically useful software package for large-scale empirical risk minimization (ERM) problems.

The ReHLine solver has four appealing "linear properties":

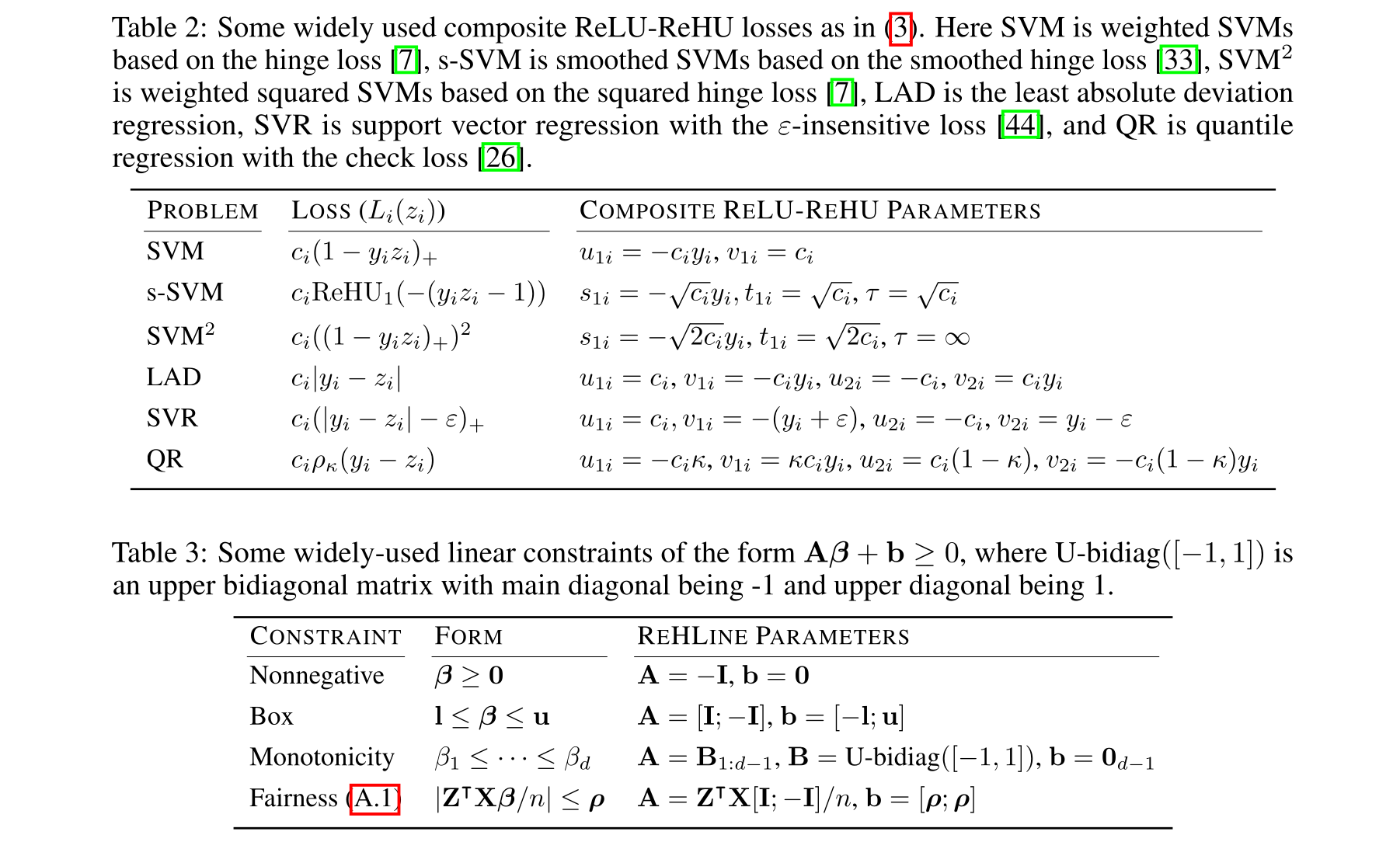

- It applies to any convex piecewise linear-quadratic loss function, including the hinge loss, the check loss, the Huber loss, etc.

- In addition, it supports linear equality and inequality constraints on the parameter vector.

- The optimization algorithm has a provable linear convergence rate.

- The per-iteration computational complexity is linear in the sample size.

More information is available on the ReHLine homepage (https://rehline.github.io/).

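ReHLine is designed to address the regularized empirical ReLU-ReHU minimization problem, named ReHLine optimization, of the following form:

$$
\min_{\boldsymbol{\beta} \in \mathbb{R}^d} \sum_{i=1}^n \sum_{l=1}^L \text{ReLU}( u_{li} \mathbf{x}_i^\intercal \boldsymbol{\beta} + v_{li}) + \sum_{i=1}^n \sum_{h=1}^H \text{ReHU}_{\tau_{hi}}( s_{hi} \mathbf{x}_i^\intercal \boldsymbol{\beta} + t_{hi}) + \frac{1}{2} \| \boldsymbol{\beta} \|_2^2, \quad \text{s.t. } \mathbf{A} \boldsymbol{\beta} + \mathbf{b} \geq \mathbf{0},
$$

where $\mathbf{U} = (u_{li}), \mathbf{V} = (v_{li}) \in \mathbb{R}^{L \times n}$ and $\mathbf{S} = (s_{hi}), \mathbf{T} = (t_{hi}), \boldsymbol{\tau} = (\tau_{hi}) \in \mathbb{R}^{H \times n}$ are the ReLU-ReHU loss parameters, and $(\mathbf{A}, \mathbf{b})$ are the constraint parameters. Here $\text{ReLU}(z) = \max(z, 0)$, and the rectified Huber unit $\text{ReHU}_\tau(z)$ equals $0$ for $z \leq 0$, $z^2/2$ for $0 < z \leq \tau$, and $\tau(z - \tau/2)$ for $z > \tau$.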

This formulation has a wide range of applications spanning various fields, including statistics, machine learning, computational biology, and social studies. Some popular examples include SVMs with fairness constraints (FairSVM), elastic net regularized quantile regression (ElasticQR), and ridge regularized Huber minimization (RidgeHuber).
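To make the reduction concrete, here is a minimal NumPy sketch of how two of these examples can be written as sums of ReLU and ReHU terms. It does not call the ReHLine package. All helper names (`relu`, `rehu`, `rehline_objective`, `svm_params`, `huber_params`) are hypothetical, and the sketch assumes an unconstrained objective of the form sum of `ReLU(u * x'beta + v)` terms, plus sum of `ReHU_tau(s * x'beta + t)` terms, plus `0.5 * ||beta||^2`. The parameter choices shown are one possible encoding, checked numerically against direct evaluation of each loss.

```python
import numpy as np

def relu(z):
    """ReLU(z) = max(z, 0)."""
    return np.maximum(z, 0.0)

def rehu(z, tau):
    """Rectified Huber unit: 0 if z <= 0, z^2/2 if 0 < z <= tau, tau*(z - tau/2) otherwise."""
    z = np.asarray(z, dtype=float)
    return np.where(z <= 0, 0.0,
                    np.where(z <= tau, 0.5 * z**2, tau * (z - 0.5 * tau)))

def rehline_objective(beta, X, U, V, S, T, tau):
    """Unconstrained ReLU-ReHU objective (assumed form); U, V are (L, n); S, T, tau are (H, n)."""
    z = X @ beta  # linear scores x_i' beta, shape (n,), broadcast across rows
    return relu(U * z + V).sum() + rehu(S * z + T, tau).sum() + 0.5 * beta @ beta

def svm_params(y, C):
    """Hinge loss C * max(0, 1 - y * z) as a single ReLU term per sample."""
    n = y.shape[0]
    empty = np.zeros((0, n))  # no ReHU terms
    return (-C * y).reshape(1, n), np.full((1, n), C), empty, empty, empty

def huber_params(y, C, tau):
    """Huber loss C * Huber_tau(y - z) as two ReHU terms, one per residual sign."""
    n = y.shape[0]
    root = np.sqrt(C)  # scaling the argument by sqrt(C) scales the quadratic part by C
    S = np.vstack([-root * np.ones(n), root * np.ones(n)])
    T = np.vstack([root * y, -root * y])
    empty = np.zeros((0, n))  # no ReLU terms
    return empty, empty, S, T, np.full((2, n), root * tau)

def svm_direct(beta, X, y, C):
    return C * np.maximum(0.0, 1.0 - y * (X @ beta)).sum() + 0.5 * beta @ beta

def huber_direct(beta, X, y, C, tau):
    r = np.abs(y - X @ beta)
    loss = np.where(r <= tau, 0.5 * r**2, tau * (r - 0.5 * tau))
    return C * loss.sum() + 0.5 * beta @ beta

# Synthetic data purely for the numerical check.
rng = np.random.default_rng(0)
X = rng.normal(size=(50, 3))
beta = rng.normal(size=3)
y_cls = np.where(rng.normal(size=50) > 0, 1.0, -1.0)
y_reg = X @ beta + rng.normal(size=50)

svm_match = np.isclose(rehline_objective(beta, X, *svm_params(y_cls, 2.0)),
                       svm_direct(beta, X, y_cls, 2.0))
huber_match = np.isclose(rehline_objective(beta, X, *huber_params(y_reg, 2.0, 1.0)),
                         huber_direct(beta, X, y_reg, 2.0, 1.0))
print("SVM objective matches:", svm_match)
print("Huber objective matches:", huber_match)
```

The check loss used in quantile regression can be encoded in the same spirit with two ReLU terms per sample, one for each sign of the residual.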

We provide both Python and R interfaces to the ReHLine solver, and the core algorithm is implemented in efficient C++ code.

Some existing problems of recent interest in statistics and machine learning can be solved by ReHLine, and we provide reproducible benchmark code and results at the ReHLine-benchmark repository.

| Problem | Results |
|---|---|
| SVM | Result |
| Smoothed SVM | Result |
| FairSVM | Result |
| ElasticQR | Result |
| RidgeHuber | Result |

ReHLine is open source under the MIT license.