vis_sparsedrive.mp4

24 June, 2024: We reorganize code for better readability. Code & Models are released.31 May, 2024: We release the SparseDrive paper on arXiv. Code & Models will be released in June, 2024. Please stay tuned!

SparseDrive is a Sparse-Centric paradigm for end-to-end autonomous driving.

- We explore the sparse scene representation for end-to-end autonomous driving and propose a Sparse-Centric paradigm named SparseDrive, which unifies multiple tasks with sparse instance representation.

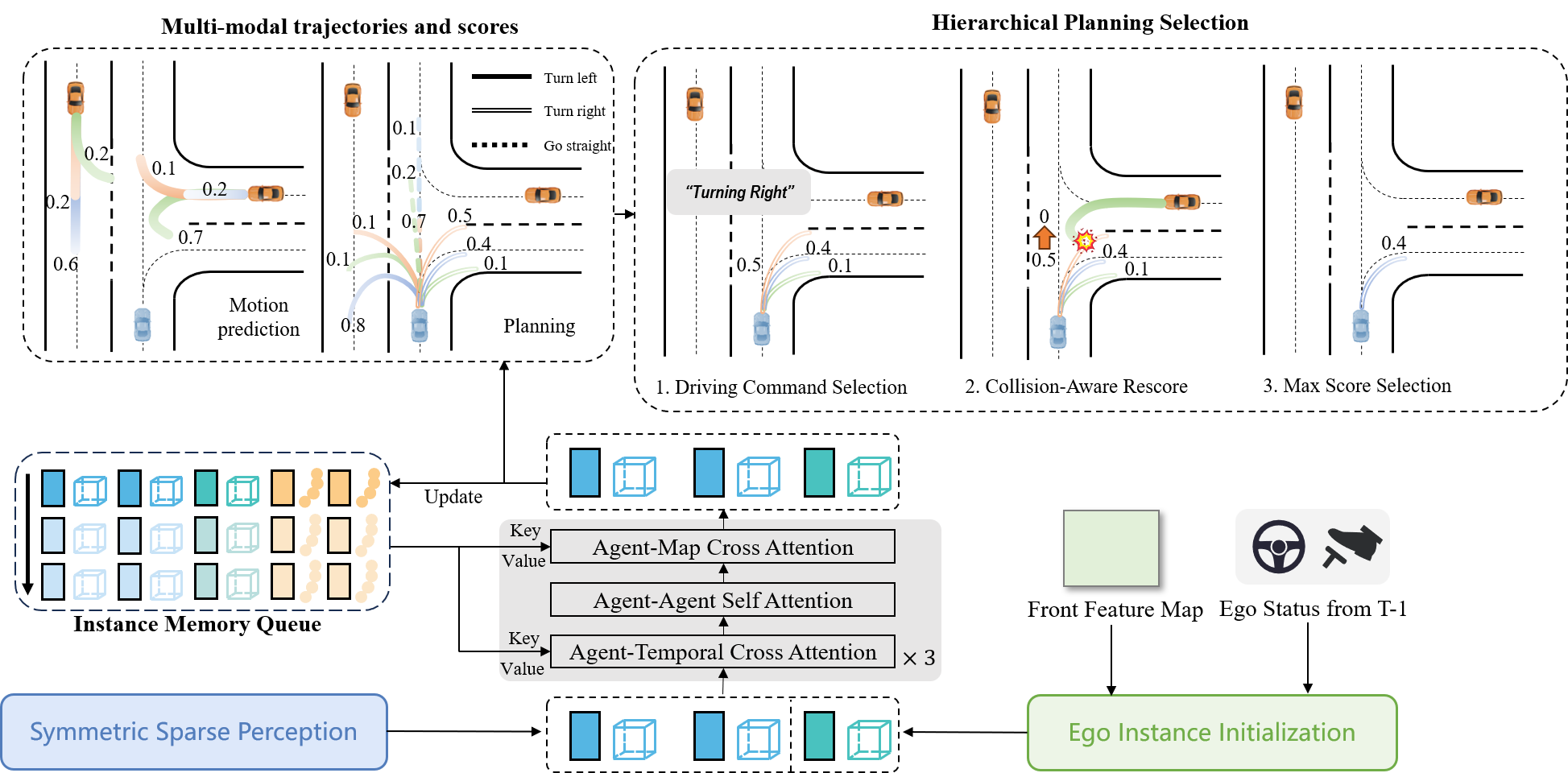

- We revise the great similarity shared between motion prediction and planning, correspondingly leading to a parallel design for motion planner. We further propose a hierarchical planning selection strategy incorporating a collision-aware rescore module to boost the planning performance.

- On the challenging nuScenes benchmark, SparseDrive surpasses previous SOTA methods in terms of all metrics, especially the safety-critical metric collision rate, while keeping much higher training and inference efficiency.

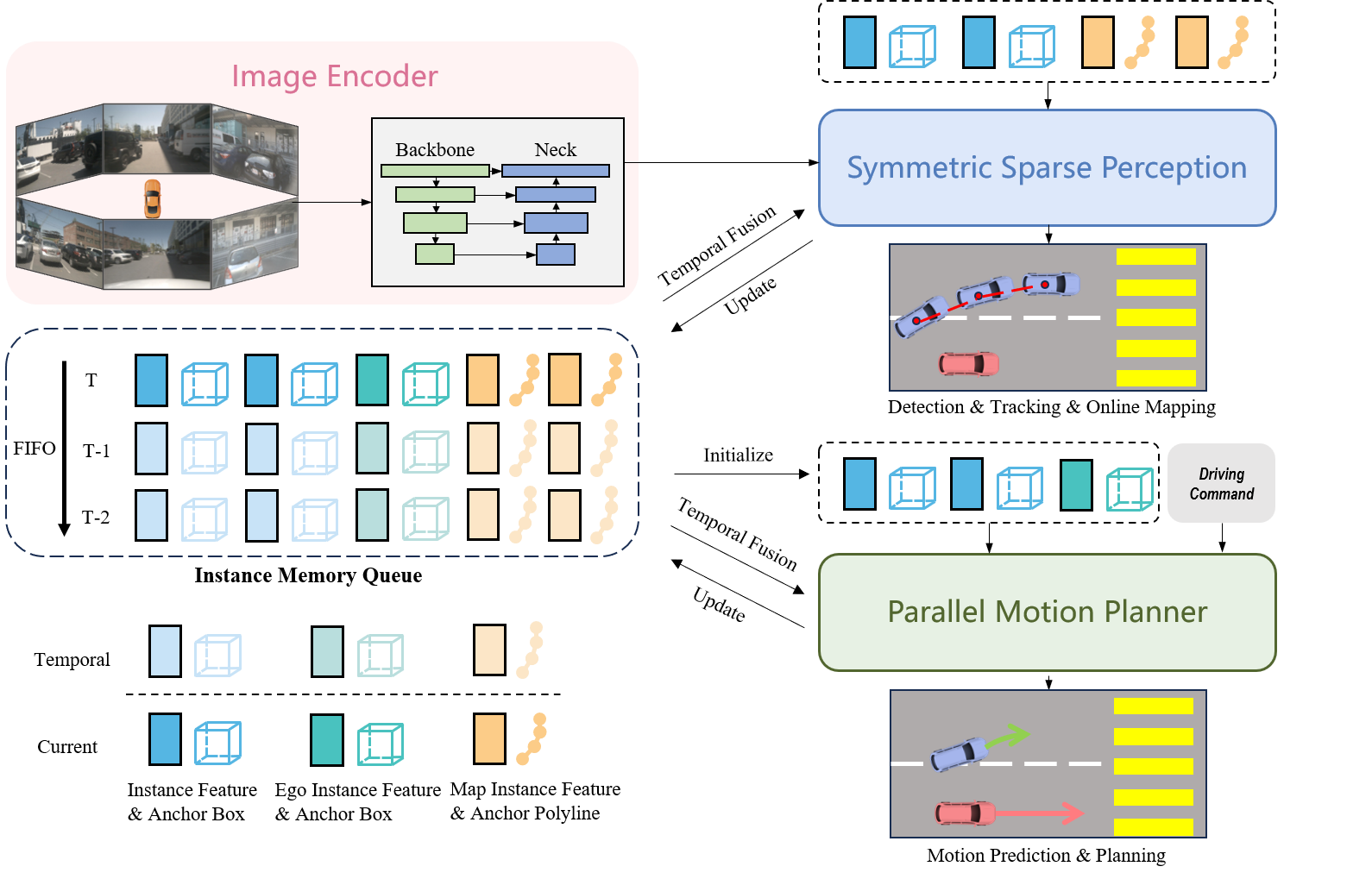

Overview of SparseDrive. SparseDrive first encodes multi-view images into feature maps,

then learns sparse scene representation through symmetric sparse perception, and finally perform

motion prediction and planning in a parallel manner. An instance memory queue is devised for

temporal modeling.

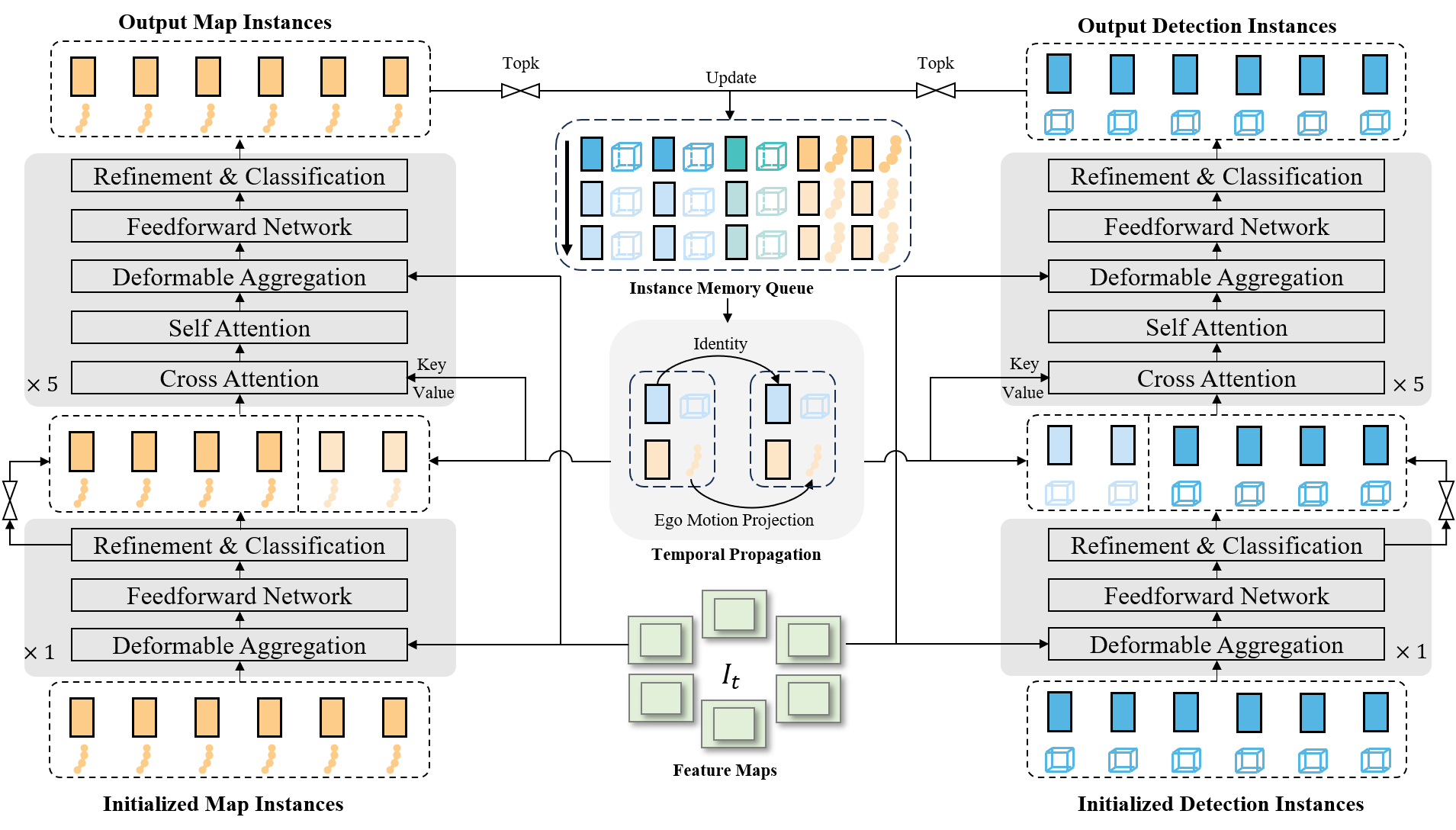

Model architecture of symmetric sparse perception, which unifies detection, tracking and

online mapping in a symmetric structure.

Model structure of parallel motion planner, which performs motion prediction and planning

simultaneously and outputs safe planning trajectory.

- Comprehensive results for all tasks on nuScenes.

| Method | NDS | AMOTA | minADE (m) | L2 (m) Avg | Col. (%) Avg | Training Time (h) | FPS |

|---|---|---|---|---|---|---|---|

| UniAD | 0.498 | 0.359 | 0.71 | 0.73 | 0.61 | 144 | 1.8 |

| SparseDrive-S | 0.525 | 0.386 | 0.62 | 0.61 | 0.08 | 20 | 9.0 |

| SparseDrive-B | 0.588 | 0.501 | 0.60 | 0.58 | 0.06 | 30 | 7.3 |

- Open-loop planning results on nuScenes.

| Method | L2 (m) 1s | L2 (m) 2s | L2 (m) 3s | L2 (m) Avg | Col. (%) 1s | Col. (%) 2s | Col. (%) 3s | Col. (%) Avg | FPS |

|---|---|---|---|---|---|---|---|---|---|

| UniAD | 0.45 | 0.70 | 1.04 | 0.73 | 0.62 | 0.58 | 0.63 | 0.61 | 1.8 |

| VAD | 0.41 | 0.70 | 1.05 | 0.72 | 0.03 | 0.19 | 0.43 | 0.21 | 4.5 |

| SparseDrive-S | 0.29 | 0.58 | 0.96 | 0.61 | 0.01 | 0.05 | 0.18 | 0.08 | 9.0 |

| SparseDrive-B | 0.29 | 0.55 | 0.91 | 0.58 | 0.01 | 0.02 | 0.13 | 0.06 | 7.3 |

We found that some collision cases were not taken into consideration in our previous code, so we re-implement the evaluation metric for collision rate in released code and provide updated results.

| Model | config | ckpt | log | det: NDS | mapping: mAP | track: AMOTA | track: AMOTP | motion: EPA_car | motion: minADE_car | motion: minFDE_car | motion: MissRate_car | planning: CR | planning: L2 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| Stage1 | cfg | ckpt | log | 0.5260 | 0.5689 | 0.385 | 1.260 | ||||||

| Stage2 | cfg | ckpt | log | 0.5257 | 0.5656 | 0.372 | 1.248 | 0.492 | 0.61 | 0.95 | 0.133 | 0.097% | 0.61 |

| Method | L2 (m) 1s | L2 (m) 2s | L2 (m) 3s | L2 (m) Avg | Col. (%) 1s | Col. (%) 2s | Col. (%) 3s | Col. (%) Avg |

|---|---|---|---|---|---|---|---|---|

| UniAD | 0.45 | 0.70 | 1.04 | 0.73 | 0.66 | 0.66 | 0.72 | 0.68 |

| UniAD-wo-post-optim | 0.32 | 0.58 | 0.94 | 0.61 | 0.17 | 0.27 | 0.42 | 0.29 |

| VAD | 0.41 | 0.70 | 1.05 | 0.72 | 0.03 | 0.21 | 0.49 | 0.24 |

| SparseDrive-S | 0.30 | 0.58 | 0.95 | 0.61 | 0.01 | 0.05 | 0.23 | 0.10 |

If you find SparseDrive useful in your research or applications, please consider giving us a star 🌟 and citing it by the following BibTeX entry.

@article{sun2024sparsedrive,

title={SparseDrive: End-to-End Autonomous Driving via Sparse Scene Representation},

author={Sun, Wenchao and Lin, Xuewu and Shi, Yining and Zhang, Chuang and Wu, Haoran and Zheng, Sifa},

journal={arXiv preprint arXiv:2405.19620},

year={2024}

}