Moving beyond green screens as well as stationary, expensive and hard-to-use setups

Updated documentation, with assembly instructions, installation guides, examples and more, is now available at the project's page: https://vcl3d.github.io/VolumetricCapture/.

Because volumetric capture requires deploying a complex system that spans multiple hardware devices and distributed software, please consult the online documentation first and then the closed issues, as most problems have likely been addressed there.

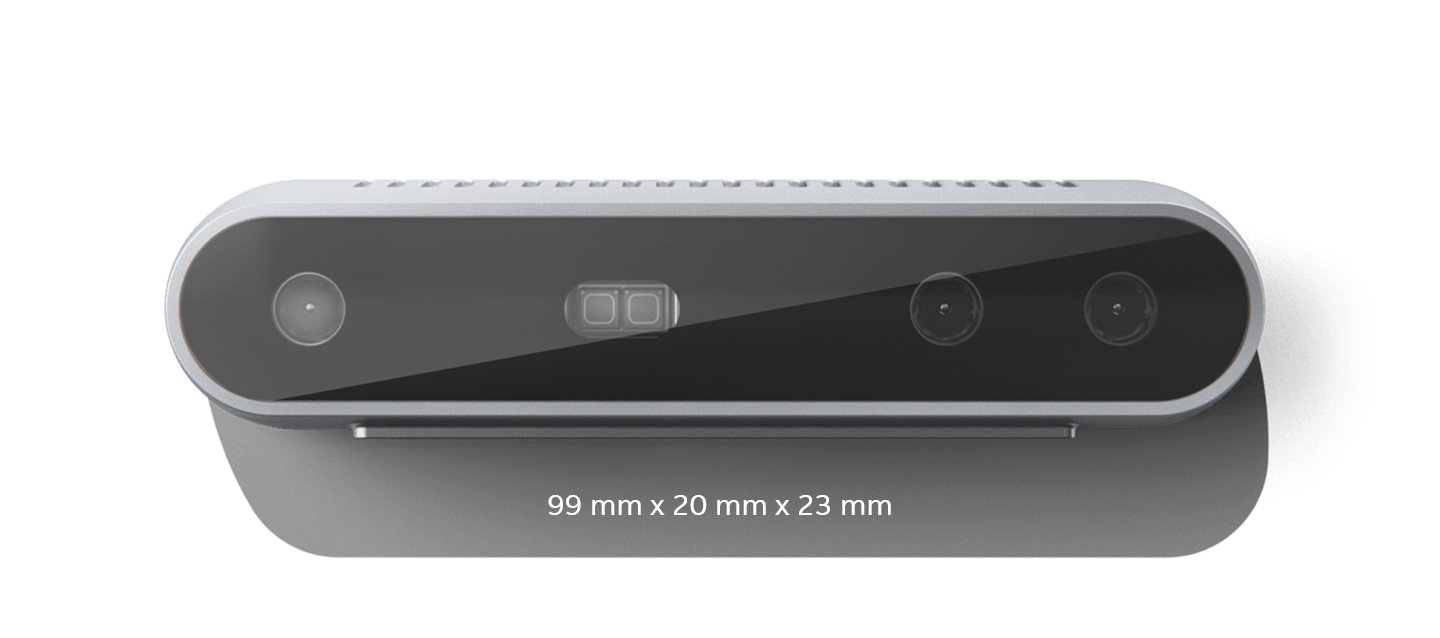

The latest release, which supports both Azure Kinect DK and Intel RealSense 2.0 D415 sensors, is now available for download with various fixes and feedback integrated. It comes with an improved multi-sensor calibration that offers higher accuracy and greater flexibility in the number and placement of sensors. More information can be found in [8].

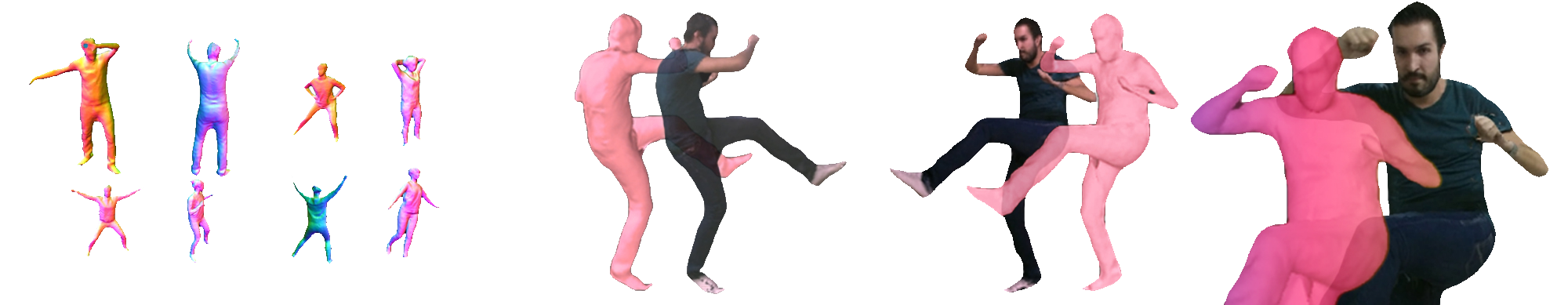

This repository contains VCL's evolving toolset for volumetric (multi-RGB-D sensor) capturing and recording, initially presented in [1]. It is research-oriented but flexible and optimized software that integrates multi-sensor alignment research results ([6], [8]). It can be, and has been, used in the context of:

- Live Tele-presence [2] in Augmented VR or Mixed/Augmented Reality settings

- Performance Capture [3]

- Free Viewpoint Video (FVV)

- Immersive Applications (e.g. events and/or gaming) [4]

- Motion Capture [5]

- Post-production [9]

- Data Collection [7], [10]

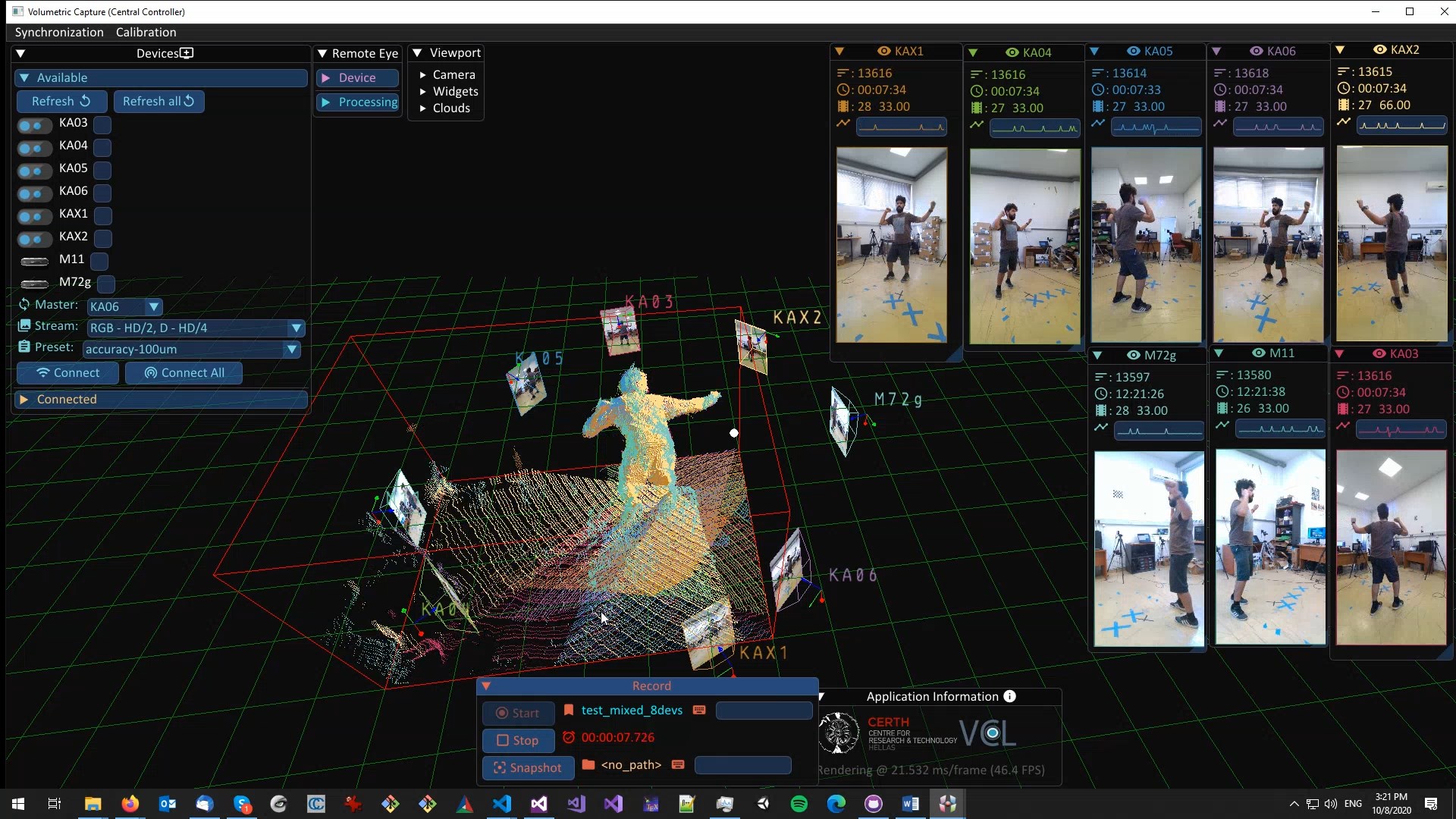

The toolset is designed as a distributed system in which a number of processing units each manage and collect data from a single sensor using a headless application. The set of sensors is orchestrated by a centralized UI application, which is also the delivery point of the connected sensor streams. Communication is handled by a broker, which is typically co-hosted with the controlling application, although this is not required.
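The layout described above can be illustrated with a minimal, in-process sketch. It is not the toolset's actual implementation: the class names (`Broker`, `SensorNode`, `Controller`), the topic names (`control`, `frames`) and the `Frame` fields are all hypothetical, and a real deployment would run each sensor node on its own processing unit and talk to a networked broker rather than a Python object.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Callable, DefaultDict, Dict, List


@dataclass(frozen=True)
class Frame:
    """One hypothetical RGB-D frame message; field names are illustrative."""
    sensor_id: str
    timestamp_us: int
    payload: bytes


class Broker:
    """Toy in-process stand-in for the message broker (topic-based pub/sub)."""

    def __init__(self) -> None:
        self._subscribers: DefaultDict[str, List[Callable[[object], None]]] = defaultdict(list)

    def subscribe(self, topic: str, handler: Callable[[object], None]) -> None:
        self._subscribers[topic].append(handler)

    def publish(self, topic: str, message: object) -> None:
        # Deliver the message to every handler registered on this topic.
        for handler in list(self._subscribers[topic]):
            handler(message)


class SensorNode:
    """Headless application: manages exactly one sensor and streams its data."""

    def __init__(self, sensor_id: str, broker: Broker) -> None:
        self.sensor_id = sensor_id
        self.broker = broker
        self.streaming = False
        self._clock_us = 0
        broker.subscribe("control", self._on_command)

    def _on_command(self, command: object) -> None:
        if command == "start":
            self.streaming = True
        elif command == "stop":
            self.streaming = False

    def capture(self) -> None:
        """Simulate grabbing one frame; publish it only while streaming."""
        self._clock_us += 33_333  # assumed ~30 fps frame period
        if self.streaming:
            frame = Frame(self.sensor_id, self._clock_us, b"\x00" * 4)
            self.broker.publish("frames", frame)


class Controller:
    """Centralized application: orchestrates nodes and receives their streams."""

    def __init__(self, broker: Broker) -> None:
        self.broker = broker
        self.received: DefaultDict[str, List[Frame]] = defaultdict(list)
        broker.subscribe("frames", self._on_frame)

    def _on_frame(self, frame: object) -> None:
        assert isinstance(frame, Frame)
        self.received[frame.sensor_id].append(frame)

    def start_all(self) -> None:
        self.broker.publish("control", "start")

    def stop_all(self) -> None:
        self.broker.publish("control", "stop")


def run_demo(num_sensors: int = 4, num_frames: int = 3) -> Dict[str, int]:
    broker = Broker()
    nodes = [SensorNode(f"sensor-{i}", broker) for i in range(num_sensors)]
    controller = Controller(broker)

    controller.start_all()
    for _ in range(num_frames):
        for node in nodes:
            node.capture()

    controller.stop_all()
    for node in nodes:
        node.capture()  # not published: streaming has been stopped

    return {sensor_id: len(frames) for sensor_id, frames in controller.received.items()}


if __name__ == "__main__":
    print(run_demo())
```

Routing both commands and frames through the broker means the controller never needs to know where a sensor node runs, which is why the broker can be co-hosted with the controlling application or placed elsewhere.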

We now support both Intel RealSense D415 and Azure Kinect DK sensors, including mixed setups that combine the two.

| Intel RealSense D415 | Microsoft Azure Kinect DK |
|---|---|

- Multi-sensor streaming and recording

- Quick and easy volumetric sensor alignment

- Hardware and software (IEEE 1588 PTP) synchronization

Check our latest releases.

If you used the system or found this work useful, please cite:

    @inproceedings{sterzentsenko2018low,
      title={A low-cost, flexible and portable volumetric capturing system},
      author={Sterzentsenko, Vladimiros and Karakottas, Antonis and Papachristou, Alexandros and Zioulis, Nikolaos and Doumanoglou, Alexandros and Zarpalas, Dimitrios and Daras, Petros},
      booktitle={2018 14th International Conference on Signal-Image Technology \& Internet-Based Systems (SITIS)},
      pages={200--207},
      year={2018},
      organization={IEEE}
    }

We currently only ship binaries for the Windows platform, supporting Windows 10.

[1] Sterzentsenko, V., Karakottas, A., Papachristou, A., Zioulis, N., Doumanoglou, A., Zarpalas, D. and Daras, P., 2018, November. A low-cost, flexible and portable volumetric capturing system. In 2018 14th International Conference on Signal-Image Technology & Internet-Based Systems (SITIS) (pp. 200-207). IEEE.

[2] Alexiadis, D.S., Chatzitofis, A., Zioulis, N., Zoidi, O., Louizis, G., Zarpalas, D. and Daras, P., 2016. An integrated platform for live 3D human reconstruction and motion capturing. IEEE Transactions on Circuits and Systems for Video Technology (TCSVT), 27(4), pp.798-813.

[3] Alexiadis, D.S., Zioulis, N., Zarpalas, D. and Daras, P., 2018. Fast deformable model-based human performance capture and FVV using consumer-grade RGB-D sensors. Pattern Recognition (PR), 79, pp.260-278.

[4] Zioulis, N., Alexiadis, D., Doumanoglou, A., Louizis, G., Apostolakis, K., Zarpalas, D. and Daras, P., 2016, September. 3D tele-immersion platform for interactive immersive experiences between remote users. In 2016 IEEE International Conference on Image Processing (ICIP) (pp. 365-369). IEEE.

[5] Chatzitofis, A., Zarpalas, D., Kollias, S. and Daras, P., 2019. DeepMoCap: Deep Optical Motion Capture Using Multiple Depth Sensors and Retro-Reflectors. Sensors, 19(2), p.282.

[6] Papachristou, A., Zioulis, N., Zarpalas, D. and Daras, P., 2018. Markerless structure-based multi-sensor calibration for free viewpoint video capture. In International Conference on Computer Graphics, Visualization and Computer Vision (WSCG).

[7] Sterzentsenko, V., Saroglou, L., Chatzitofis, A., Thermos, S., Zioulis, N., Doumanoglou, A., Zarpalas, D. and Daras, P., 2019. Self-Supervised Deep Depth Denoising. In International Conference on Computer Vision (ICCV).

[8] Sterzentsenko, V., Doumanoglou, A., Thermos, S., Zioulis, N., Zarpalas, D. and Daras, P., 2020. Deep Soft Procrustes for Markerless Volumetric Sensor Alignment. In IEEE Conference on Virtual Reality and 3D User Interfaces (VR).

[9] Karakottas, A., Zioulis, N., Doumanoglou, A., Sterzentsenko, V., Gkitsas, V., Zarpalas, D. and Daras, P., 2020, July. XR360: A Toolkit for Mixed 360 and 3D Productions. In 2020 IEEE International Conference on Multimedia & Expo Workshops (ICMEW) (pp. 1-6). IEEE.

[10] Chatzitofis, A., Saroglou, L., Boutis, P., Drakoulis, P., Zioulis, N., Subramanyam, S., Kevelham, B., Charbonnier, C., Cesar, P., Zarpalas, D., Kollias, S. and Daras, P., 2020. HUMAN4D: A Human-Centric Multimodal Dataset for Motions & Immersive Media. IEEE Access.