This is the project for the second course in the Udacity Self-Driving Car Engineer Nanodegree Program: Sensor Fusion and Tracking.

In this project, you'll fuse measurements from LiDAR and camera and track vehicles over time. You will use real-world data from the Waymo Open Dataset, detect objects in 3D point clouds, and apply an extended Kalman filter for sensor fusion and tracking.

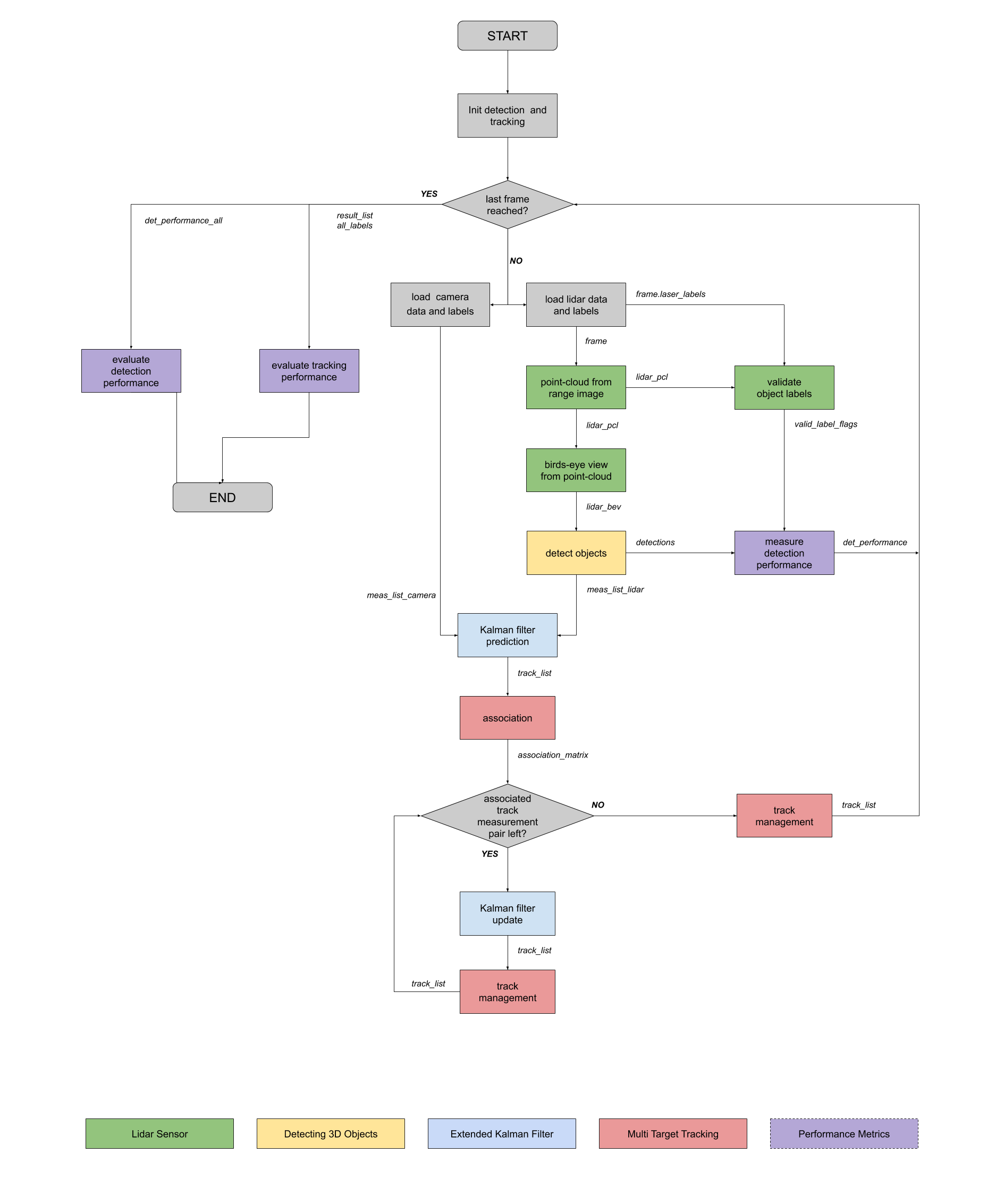

The project consists of two major parts:

- Object detection: In this part, a deep-learning approach is used to detect vehicles in LiDAR data based on a birds-eye view perspective of the 3D point-cloud. Also, a series of performance measures is used to evaluate the performance of the detection approach.

- Object tracking: In this part, an extended Kalman filter is used to track vehicles over time, based on the lidar detections fused with camera detections. Data association and track management are implemented as well.

The following diagram contains an outline of the data flow and of the individual steps that make up the algorithm.

Also, the project code contains various tasks, which are detailed step-by-step in the code. More information on the algorithm and on the tasks can be found in the Udacity classroom.

📦project

┣ 📂dataset --> contains the Waymo Open Dataset sequences

┃

┣ 📂misc

┃ ┣ evaluation.py --> plot functions for tracking visualization and RMSE calculation

┃ ┣ helpers.py --> misc. helper functions, e.g. for loading / saving binary files

┃ ┣ objdet_tools.py --> object detection functions without student tasks

┃ ┗ params.py --> parameter file for the tracking part

┃

┣ 📂results --> binary files with pre-computed intermediate results

┃

┣ 📂student

┃ ┣ association.py --> data association logic for assigning measurements to tracks incl. student tasks

┃ ┣ filter.py --> extended Kalman filter implementation incl. student tasks

┃ ┣ measurements.py --> sensor and measurement classes for camera and lidar incl. student tasks

┃ ┣ objdet_detect.py --> model-based object detection incl. student tasks

┃ ┣ objdet_eval.py --> performance assessment for object detection incl. student tasks

┃ ┣ objdet_pcl.py --> point-cloud functions, e.g. for birds-eye view incl. student tasks

┃ ┗ trackmanagement.py --> track and track management classes incl. student tasks

┃

┣ 📂tools --> external tools

┃ ┣ 📂objdet_models --> models for object detection

┃ ┃ ┃

┃ ┃ ┣ 📂darknet

┃ ┃ ┃ ┣ 📂config

┃ ┃ ┃ ┣ 📂models --> darknet / yolo model class and tools

┃ ┃ ┃ ┣ 📂pretrained --> copy pre-trained model file here

┃ ┃ ┃ ┃ ┗ complex_yolov4_mse_loss.pth

┃ ┃ ┃ ┣ 📂utils --> various helper functions

┃ ┃ ┃

┃ ┃ ┗ 📂resnet

┃ ┃ ┃ ┣ 📂models --> fpn_resnet model class and tools

┃ ┃ ┃ ┣ 📂pretrained --> copy pre-trained model file here

┃ ┃ ┃ ┃ ┗ fpn_resnet_18_epoch_300.pth

┃ ┃ ┃ ┣ 📂utils --> various helper functions

┃ ┃ ┃

┃ ┗ 📂waymo_reader --> functions for light-weight loading of Waymo sequences

┃

┣ basic_loop.py

┣ loop_over_dataset.py

To create a local copy of the project, please click on "Code" and then "Download ZIP". Alternatively, you may of course use GitHub Desktop or Git Bash for this purpose.

The project has been written using Python 3.7. Please make sure that your local installation is equal to or above this version.

All dependencies required for the project have been listed in the file requirements.txt. You may either install them one-by-one using pip or you can use the following command to install them all at once:

`pip3 install -r requirements.txt`

The Waymo Open Dataset Reader is a very convenient toolbox that allows you to access sequences from the Waymo Open Dataset without the need of installing all of the heavy-weight dependencies that come along with the official toolbox. The installation instructions can be found in tools/waymo_reader/README.md.

This project makes use of three different sequences to illustrate the concepts of object detection and tracking. These are:

- Sequence 1: training_segment-1005081002024129653_5313_150_5333_150_with_camera_labels.tfrecord

- Sequence 2: training_segment-10072231702153043603_5725_000_5745_000_with_camera_labels.tfrecord

- Sequence 3: training_segment-10963653239323173269_1924_000_1944_000_with_camera_labels.tfrecord

To download these files, you will first have to register with the Waymo Open Dataset (Open Dataset – Waymo), if you have not already, making sure to note "Udacity" as your institution.

Once you have done so, please click here to access the Google Cloud Container that holds all the sequences. Once you have been cleared for access by Waymo (which might take up to 48 hours), you can download the individual sequences.

The sequences listed above can be found in the folder "training". Please download them and put the tfrecord-files into the dataset folder of this project.

The object detection methods used in this project rely on pre-trained models which have been provided by the original authors. They can be downloaded here (darknet) and here (fpn_resnet). Once downloaded, please copy the model files into the paths /tools/objdet_models/darknet/pretrained and /tools/objdet_models/resnet/pretrained respectively.

In the main file loop_over_dataset.py, you can choose which steps of the algorithm should be executed. If you want to call a specific function, you simply need to add the corresponding string literal to one of the following lists:

- `exec_data`: controls the execution of steps related to sensor data.
  - `pcl_from_rangeimage` transforms the Waymo Open Data range image into a 3D point-cloud
  - `load_image` returns the image of the front camera

- `exec_detection`: controls which steps of model-based 3D object detection are performed
  - `bev_from_pcl` transforms the point-cloud into a fixed-size birds-eye view perspective
  - `detect_objects` executes the actual detection and returns a set of objects (only vehicles)
  - `validate_object_labels` decides which ground-truth labels should be considered (e.g. based on difficulty or visibility)
  - `measure_detection_performance` contains methods to evaluate detection performance for a single frame

If you do not include a specific step in the list, pre-computed binary files will be loaded instead. This enables you to run the algorithm and look at the results even without having implemented anything yet. The pre-computed results for the mid-term project need to be loaded using this link. Please use the folder darknet first. Unzip the file within and put its content into the folder results.

- `exec_tracking`: controls the execution of the object tracking algorithm

- `exec_visualization`: controls the visualization of results
  - `show_range_image` displays two LiDAR range image channels (range and intensity)
  - `show_labels_in_image` projects ground-truth boxes into the front camera image
  - `show_objects_and_labels_in_bev` projects detected objects and label boxes into the birds-eye view
  - `show_objects_in_bev_labels_in_camera` displays a stacked view with labels inside the camera image on top and the birds-eye view with detected objects on the bottom
  - `show_tracks` displays the tracking results
  - `show_detection_performance` displays the performance evaluation based on all detected
  - `make_tracking_movie` renders an output movie of the object tracking results
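As a minimal sketch of how these lists might be filled in, the snippet below enables a few steps by name. The step names are taken from the lists above; the helper `is_enabled` is hypothetical and only illustrates the lookup, it is not part of the project code.

```python
# Steps named here are executed; steps left out fall back to the
# pre-computed binary files in the results folder.
exec_data = ["pcl_from_rangeimage", "load_image"]
exec_detection = ["bev_from_pcl", "detect_objects"]
exec_tracking = []
exec_visualization = ["show_objects_in_bev_labels_in_camera"]


def is_enabled(step, *exec_lists):
    """Return True if the step name appears in any of the given lists.

    Hypothetical helper, shown only to illustrate the string-based lookup.
    """
    return any(step in exec_list for exec_list in exec_lists)


print(is_enabled("detect_objects", exec_data, exec_detection))          # True
print(is_enabled("validate_object_labels", exec_data, exec_detection))  # False
```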

Even without solving any of the tasks, the project code can be executed.

The final project uses pre-computed lidar detections in order for all students to have the same input data. If you use the workspace, the data is prepared there already. Otherwise, download the pre-computed lidar detections (~1 GB), unzip them and put them in the folder results.

Parts of this project are based on the following repositories:

- Simple Waymo Open Dataset Reader

- Super Fast and Accurate 3D Object Detection based on 3D LiDAR Point Clouds

- Complex-YOLO: Real-time 3D Object Detection on Point Clouds

This task extracts two of the data channels within the range image, "range" and "intensity", and converts the floating-point data to an 8-bit integer value range. The range image is then cropped to +/- 90 deg. left and right of the forward-facing x-axis, and the cropped range and intensity images are stacked vertically and visualized. The result is shown below.
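The conversion, crop and stacking steps can be sketched roughly as follows. This is a dependency-free illustration on plain Python lists, not the project code. It assumes the range image spans 360 degrees with the forward-facing x-axis at the centre column, so that keeping the central half of the columns corresponds to +/- 90 deg. The function names and the tiny input arrays are made up.

```python
def to_uint8(channel):
    """Linearly rescale a 2D list of floats to integers in 0..255."""
    flat = [value for row in channel for value in row]
    lo, hi = min(flat), max(flat)
    span = (hi - lo) or 1.0  # avoid division by zero for a constant image
    return [[round(255 * (value - lo) / span) for value in row] for row in channel]


def crop_center(channel, fraction=0.5):
    """Keep the central `fraction` of the columns (0.5 of 360 deg = +/- 90 deg)."""
    width = len(channel[0])
    keep = int(width * fraction)
    start = (width - keep) // 2
    return [row[start:start + keep] for row in channel]


# Tiny made-up range image: 2 rows x 8 columns per channel.
range_channel = [[0.0, 5.0, 10.0, 15.0, 20.0, 25.0, 30.0, 35.0],
                 [35.0, 30.0, 25.0, 20.0, 15.0, 10.0, 5.0, 0.0]]
intensity_channel = [[0.0, 0.1, 0.2, 0.3, 0.4, 0.5, 0.6, 0.7],
                     [0.7, 0.6, 0.5, 0.4, 0.3, 0.2, 0.1, 0.0]]

range_8bit = crop_center(to_uint8(range_channel))
intensity_8bit = crop_center(to_uint8(intensity_channel))

# Stack vertically: range rows on top, intensity rows below.
stacked = range_8bit + intensity_8bit
```

With real data, an array library would normally replace the nested lists; the order of operations stays the same.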

The goal of this task is to use the Open3D library to display the lidar point-cloud in a 3D viewer in order to develop a feel for the nature of lidar point-clouds. Below are a few results of this exercise.

From the above images it can be clearly observed that the dominant parts that appear in the LIDAR point cloud are tail lamps, bumpers, and front lights. When the cars are at an angle, side mirrors sometimes appear clearly as well.

The first step is to create a birds-eye view (BEV) perspective of the lidar point-cloud. Based on the (x,y)-coordinates in sensor space, the respective coordinates within the BEV coordinate space are computed so that the actual BEV map can be filled with lidar data from the point-cloud. Once this is done, the respective height and intensity maps are computed as well, which are shown below.

Height Map

Intensity Map
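A rough sketch of the BEV discretization and of the height and intensity maps is given below. The detection area limits, the grid size and the function names are assumptions chosen for illustration. Taking both values from the topmost point of each cell is one possible design choice, not necessarily the one used in the project.

```python
def bev_cell(x, y, lim_x=(0.0, 50.0), lim_y=(-25.0, 25.0), bev_size=608):
    """Map sensor-space (x, y) in metres to an integer (row, col) BEV cell.

    Returns None for points outside the configured area.
    """
    if not (lim_x[0] <= x < lim_x[1] and lim_y[0] <= y < lim_y[1]):
        return None
    row = int((x - lim_x[0]) * bev_size / (lim_x[1] - lim_x[0]))
    col = int((y - lim_y[0]) * bev_size / (lim_y[1] - lim_y[0]))
    return row, col


def build_maps(points, **grid):
    """Build sparse height and intensity maps keyed by BEV cell.

    For each cell, the topmost point (largest z) provides both values.
    """
    height_map, intensity_map = {}, {}
    for x, y, z, intensity in points:
        cell = bev_cell(x, y, **grid)
        if cell is None:
            continue  # point lies outside the BEV area
        if cell not in height_map or z > height_map[cell]:
            height_map[cell] = z
            intensity_map[cell] = intensity
    return height_map, intensity_map


# Made-up points as (x, y, z, intensity); the last one is out of range.
points = [(10.0, 0.0, 0.5, 0.2), (10.0, 0.0, 1.5, 0.9), (60.0, 0.0, 1.0, 0.5)]
height_map, intensity_map = build_maps(points, bev_size=10)
```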

The goal of this task is to illustrate how a new model can be integrated into an existing framework. The following steps were followed:

- The fpn_resnet model is instantiated by adding configs from the cloned repository

- 3D bounding boxes are extracted from the results

- The model output is converted to the bounding box format [class-id, x, y, z, h, w, l, yaw]
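The last step can be sketched as a conversion from BEV pixel units to metric vehicle coordinates. The input layout, the axis convention (image rows along vehicle x, image columns along vehicle y), the area limits and the function name are all assumptions made for this illustration. The yaw angle is passed through unchanged here, although a real model may need a sign or offset correction.

```python
def bev_to_vehicle(det, lim_x=(0.0, 50.0), lim_y=(-25.0, 25.0), bev_size=608):
    """Convert one detection from BEV pixels to metric vehicle coordinates.

    `det` is assumed to be (class_id, bev_x, bev_y, z, h, bev_w, bev_l, yaw),
    with bev_x along the image width and bev_y along the image height.
    """
    class_id, bev_x, bev_y, z, h, bev_w, bev_l, yaw = det
    metres_per_px_x = (lim_x[1] - lim_x[0]) / bev_size
    metres_per_px_y = (lim_y[1] - lim_y[0]) / bev_size
    x = lim_x[0] + bev_y * metres_per_px_x
    y = lim_y[0] + bev_x * metres_per_px_y
    w = bev_w * metres_per_px_y
    l = bev_l * metres_per_px_x
    return [class_id, x, y, z, h, w, l, yaw]


# Made-up detection on a 100 x 100 BEV grid (0.5 m per pixel).
box = bev_to_vehicle((1, 50, 20, 1.0, 1.5, 4, 9, 0.1), bev_size=100)
```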

Output of the above task is as shown below:

The goal of this task is to find pairings between ground-truth labels and detections, so that we can determine if an object has been (a) missed (false negative), (b) successfully detected (true positive) or (c) falsely reported (false positive). For this, the geometrical overlap between the bounding boxes of labels and detected objects is computed, and the percentage of this overlap in relation to the area of the bounding boxes (intersection over union, IOU) is determined. If there are multiple matches, only the object/detection pair with the maximum IOU is kept. False negatives and false positives are then counted to calculate precision and recall. After processing all the frames of a sequence, the performance of the object detection algorithm is evaluated.

precision = 0.9506578947368421, recall = 0.9444444444444444

To make sure that the code produces plausible results, the flag configs_det.use_labels_as_objects should be set to True in a second run. This should produce precision = 1.0, recall = 1.0, as the labels are evaluated against themselves.
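The matching and scoring logic can be sketched as below. To keep it short, the sketch uses axis-aligned boxes given as (x_min, y_min, x_max, y_max), whereas the real bounding boxes are rotated by their yaw angle. The `min_iou` value and all function names are assumptions.

```python
def iou_axis_aligned(box_a, box_b):
    """IOU of two axis-aligned boxes given as (x_min, y_min, x_max, y_max)."""
    ix = max(0.0, min(box_a[2], box_b[2]) - max(box_a[0], box_b[0]))
    iy = max(0.0, min(box_a[3], box_b[3]) - max(box_a[1], box_b[1]))
    inter = ix * iy
    area_a = (box_a[2] - box_a[0]) * (box_a[3] - box_a[1])
    area_b = (box_b[2] - box_b[0]) * (box_b[3] - box_b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def precision_recall(labels, detections, min_iou=0.5):
    """Pair each label with its best unused detection, then score the result."""
    used = set()
    true_pos = 0
    for label in labels:
        best_iou, best_idx = 0.0, None
        for idx, det in enumerate(detections):
            if idx in used:
                continue
            iou = iou_axis_aligned(label, det)
            if iou > best_iou:
                best_iou, best_idx = iou, idx
        if best_idx is not None and best_iou >= min_iou:
            used.add(best_idx)  # keep only the pair with maximum IOU
            true_pos += 1
    false_neg = len(labels) - true_pos
    false_pos = len(detections) - true_pos
    precision = true_pos / (true_pos + false_pos) if detections else 0.0
    recall = true_pos / (true_pos + false_neg) if labels else 0.0
    return precision, recall


labels = [(0, 0, 2, 2), (10, 10, 12, 12)]
detections = [(0, 0, 2, 2), (20, 20, 22, 22)]
print(precision_recall(labels, detections))  # (0.5, 0.5)
print(precision_recall(labels, labels))      # (1.0, 1.0), labels against themselves
```

The second call mirrors the plausibility check described above: evaluating the labels against themselves yields a precision and recall of 1.0.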

The focus of this section was to implement the predict and measurement update functions, which form the building blocks of the extended Kalman filter. The predict function requires a motion model function F() and a process noise covariance function Q(). F() is modeled under the assumption of a constant-velocity process in 3D, and the corresponding process noise covariance matrix Q() depends on the current timestep dt.
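A minimal, dependency-free sketch of the prediction step for a constant-velocity model with state [x, y, z, vx, vy, vz] follows. The noise parameter `q`, the helper functions and the example values are assumptions. A real implementation would typically use a matrix library instead of nested lists.

```python
def F(dt):
    """State transition matrix: position advances by velocity * dt."""
    m = [[1.0 if i == j else 0.0 for j in range(6)] for i in range(6)]
    for i in range(3):
        m[i][i + 3] = dt
    return m


def Q(dt, q):
    """Process noise covariance for constant velocity with noise density q."""
    q3, q2, q1 = q * dt ** 3 / 3, q * dt ** 2 / 2, q * dt
    m = [[0.0] * 6 for _ in range(6)]
    for i in range(3):
        m[i][i] = q3
        m[i][i + 3] = m[i + 3][i] = q2
        m[i + 3][i + 3] = q1
    return m


def matmul(a, b):
    """Plain matrix product of two nested lists."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b)))
             for j in range(len(b[0]))] for i in range(len(a))]


def transpose(a):
    return [list(col) for col in zip(*a)]


def predict(x, P, dt, q):
    """One prediction step: x <- F x and P <- F P F^T + Q."""
    f = F(dt)
    x_pred = [sum(f[i][k] * x[k] for k in range(6)) for i in range(6)]
    fpft = matmul(matmul(f, P), transpose(f))
    q_mat = Q(dt, q)
    P_pred = [[fpft[i][j] + q_mat[i][j] for j in range(6)] for i in range(6)]
    return x_pred, P_pred


# Example: object moving at (1, 2, 3) m/s, predicted 0.1 s ahead.
identity = [[1.0 if i == j else 0.0 for j in range(6)] for i in range(6)]
x_pred, P_pred = predict([0.0, 0.0, 0.0, 1.0, 2.0, 3.0], identity, dt=0.1, q=3.0)
```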

Similarly, for the measurement update function, helper functions such as the pre-fit residual (innovation) function gamma() and the pre-fit residual covariance function S() are implemented.
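For reference, the standard extended Kalman filter update equations that these functions feed into can be written as follows. Here $x^-$ and $P^-$ denote the predicted state and covariance, $H$ is the Jacobian of the measurement function $h$ evaluated at $x^-$, and $K$ is the Kalman gain. The superscript notation is introduced here for illustration and is not taken from the project code.

$$
\gamma = z - h(x^-), \qquad S = H P^- H^T + R
$$

$$
K = P^- H^T S^{-1}, \qquad x^+ = x^- + K \gamma, \qquad P^+ = (I - K H) P^-
$$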

Here the main task is to update and monitor the parameters that decide whether a track is to be confirmed or deleted. First, the x and P parameters are initialized with the resultant x and P obtained from the previous EKF function.

If a track is unassigned, its score is reduced and its position uncertainty is checked; if the uncertainty is too high, the track is deleted. For an assigned track, the score is increased, and if the score rises above the pre-defined threshold (0.6), the track is set as confirmed.
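The score logic described above might look roughly like this. The confirmation threshold of 0.6 is the value mentioned in the text; the window size, the deletion threshold, the maximum position variance and all names are hypothetical values chosen for the sketch.

```python
class Track:
    """Minimal track record holding only what the score logic needs."""

    def __init__(self, window=6):
        self.window = window            # hypothetical number of frames
        self.score = 1.0 / window
        self.state = "initialized"


def handle_update(track, confirmed_threshold=0.6):
    """Raise the score after an assigned measurement and promote the track."""
    track.score = min(1.0, track.score + 1.0 / track.window)
    if track.score > confirmed_threshold:
        track.state = "confirmed"
    elif track.state != "confirmed":
        track.state = "tentative"


def handle_miss(track, pos_variance, delete_threshold=0.3, max_variance=9.0):
    """Lower the score of an unassigned track; return True if it should go."""
    track.score = max(0.0, track.score - 1.0 / track.window)
    too_uncertain = pos_variance > max_variance
    lost_confirmed = track.state == "confirmed" and track.score < delete_threshold
    return too_uncertain or lost_confirmed


track = Track()
for _ in range(3):
    handle_update(track)        # three assigned measurements in a row
print(track.state)              # confirmed
```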

The tasks implemented so far are capable of managing a single track, but to accommodate multi-target tracking, data association needs to be implemented. In the data association task, the Mahalanobis distance (MHD) is calculated first, and then a gating function is implemented which uses the MHD to prevent false positives from being associated with the actual tracks. Once the false positives have been eliminated, an association matrix is built which holds the MHD value for each pair of track and measurement. Finally, each track is associated with its closest measurement.
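The association steps can be sketched as follows. To stay dependency-free, the sketch assumes a diagonal residual covariance, so the Mahalanobis distance reduces to a weighted sum of squares; the general case needs the inverse of the full matrix S. The gate value is the approximate 99.5 % chi-square quantile for 3 degrees of freedom, and the function names and example numbers are made up.

```python
GATE_3DOF = 12.838  # approx. 99.5 % chi-square quantile, 3 degrees of freedom


def mahalanobis_sq(residual, variances):
    """Squared Mahalanobis distance, assuming a diagonal covariance."""
    return sum(r * r / v for r, v in zip(residual, variances))


def associate(tracks, measurements, variances, gate=GATE_3DOF):
    """Greedy nearest-neighbour association on a gated distance matrix.

    tracks and measurements are lists of (x, y, z) positions.
    Returns a list of (track_index, measurement_index) pairs.
    """
    inf = float("inf")
    matrix = []
    for t in tracks:
        row = []
        for m in measurements:
            d = mahalanobis_sq([mi - ti for mi, ti in zip(m, t)], variances)
            row.append(d if d < gate else inf)  # gating: drop unlikely pairs
        matrix.append(row)

    pairs = []
    free_tracks = set(range(len(tracks)))
    free_meas = set(range(len(measurements)))
    while free_tracks and free_meas:
        d, ti, mi = min((matrix[i][j], i, j)
                        for i in free_tracks for j in free_meas)
        if d == inf:
            break  # only gated-out pairs remain
        pairs.append((ti, mi))
        free_tracks.remove(ti)
        free_meas.remove(mi)
    return pairs


tracks = [(10.0, 0.0, 0.0), (20.0, 5.0, 0.0)]
measurements = [(20.2, 5.1, 0.0), (10.1, -0.1, 0.0), (40.0, 0.0, 0.0)]
print(associate(tracks, measurements, variances=(0.1, 0.1, 0.1)))
# [(0, 1), (1, 0)]; the third measurement stays unassigned
```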

Until now, only LIDAR data was used for tracking; in this task the camera detections are fused as well to make decisions on tracks. First, the in_fov() function is implemented to check whether detections lie within the camera field of view. Projecting a 3D point to 2D image space leads to a non-linear measurement function h(x), which is computed, and the measurement vector z and measurement noise covariance matrix R are then adapted accordingly.
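A small sketch of the field-of-view check and of a pinhole-style measurement function is given below. It assumes camera coordinates with x pointing forward, y to the left and z upwards. The opening angle, the focal lengths `f_i`, `f_j` and the principal point `c_i`, `c_j` are hypothetical values, and the signatures are simplified compared to a class-based implementation.

```python
import math


def in_fov(x, y, fov=(-0.35, 0.35)):
    """True if a point lies inside the horizontal field of view (radians)."""
    if x <= 0:
        return False  # behind the sensor
    return fov[0] <= math.atan2(y, x) <= fov[1]


def h_camera(pos, f_i, f_j, c_i, c_j):
    """Project a 3D point in camera coordinates to image coordinates.

    The division by x is what makes this measurement function non-linear.
    """
    x, y, z = pos
    if x <= 0:
        raise ValueError("point must lie in front of the camera")
    return (c_i - f_i * y / x, c_j - f_j * z / x)


print(in_fov(10.0, 0.0))                                       # True
print(h_camera((10.0, 1.0, 0.5), 2000.0, 2000.0, 960.0, 640.0))  # (760.0, 540.0)
```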

Multi-target tracking was the challenging part of this project: the complexity of the system increased as we had to go through multiple thresholding steps to associate and disassociate tracks. The resulting tracking video, which includes all 4 steps mentioned above, is shown below.

my_tracking_result_Trim.mp4

Sensor fusion aims to overcome the limitations of individual sensors by gathering and fusing data from multiple sensors to produce more reliable information with less uncertainty. In our case there are 2 sensors: LIDAR and camera.

Cameras are very good at behavioural analysis, such as traffic signal or sign detection, pedestrian crossing, and lane segmentation, but they are highly dependent on lighting and weather conditions. LIDAR is very good at perception tasks such as object detection and range estimation for collision avoidance, but it is highly prone to errors caused by vibrations and it requires a lot of calibration.

With the help of sensor fusion, the system becomes more reliable, as multiple sensors with different capabilities are used which can compensate for each other's drawbacks.

Although sensor fusion increases the reliability of the system, it adds to the entropy of the system as well: the more sensors, the higher the entropy.

- Ideal weather and lighting conditions cannot be expected; these factors strongly influence the measurements made by the sensors, which affects the results adversely.

- Process and measurement noise variances are assumed to be constant in our case, but this is not so in the real world, so this factor has to be compensated for.

- Multiple sensors working at the same time generate a lot of data, much of it redundant; this may create a bottleneck in the input pipeline.

- As seen in our project, there will be a few false positive detections; failing to correctly classify one might lead to unwanted braking.

- Implement a more advanced data association, e.g. Global Nearest Neighbor (GNN) or Joint Probabilistic Data Association (JPDA)

- Adapt the Kalman filter to also estimate the object's width, length, and height, instead of simply using the unfiltered lidar detections as we did.

- Fine-tune the parameterization and see how low an RMSE can be achieved. One idea would be to apply the standard deviation values for lidar that were found in the mid-term project.