AirVO

An Illumination-Robust Point-Line Visual Odometry

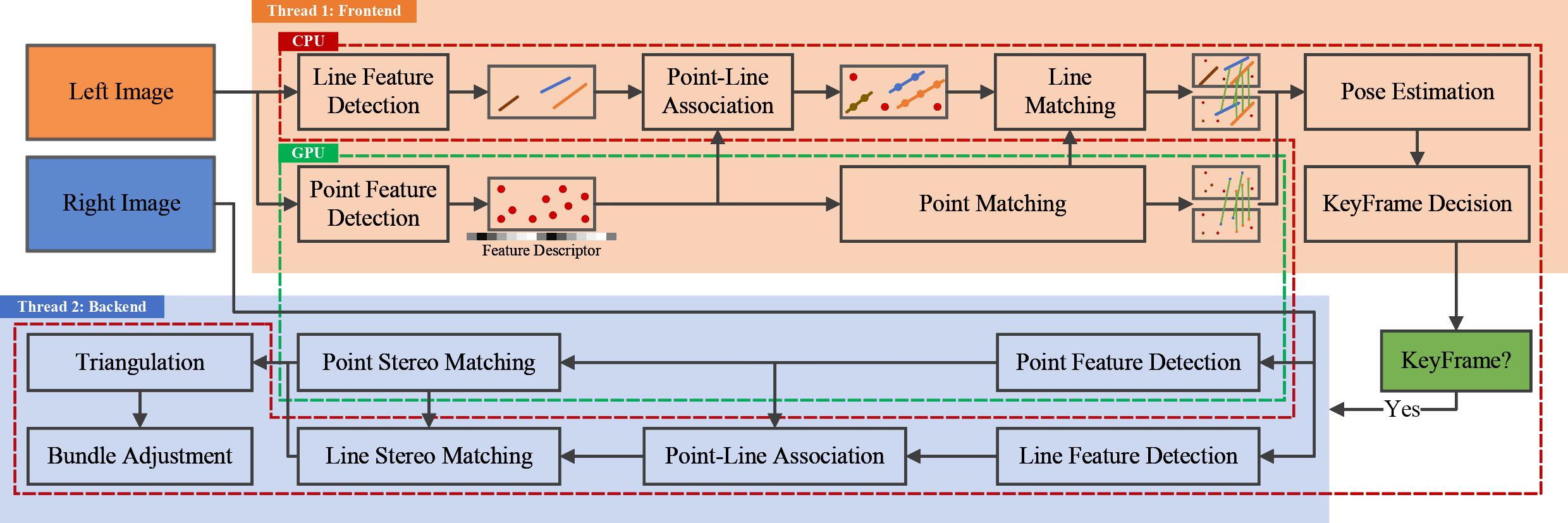

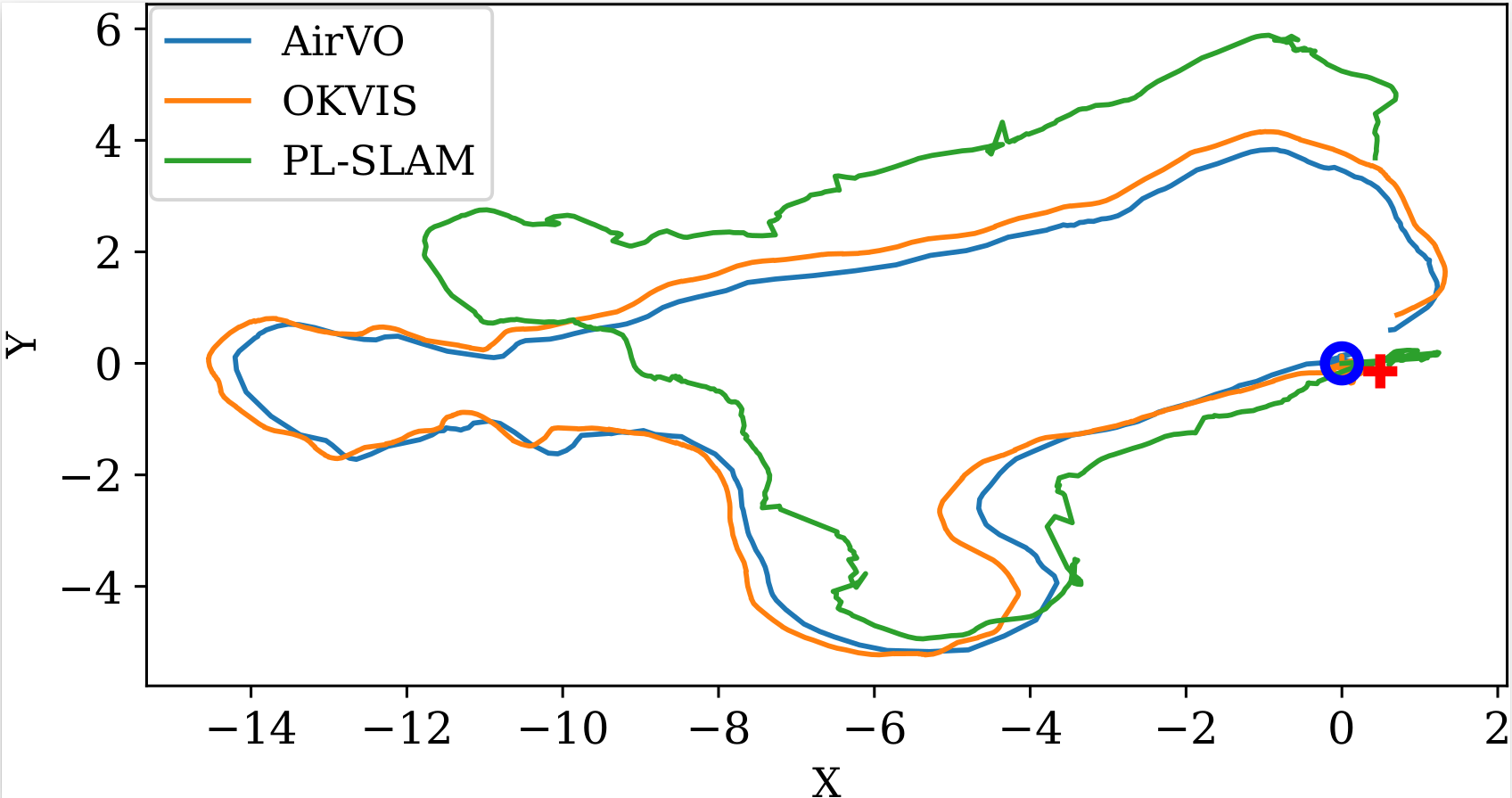

AirVO is an illumination-robust and accurate stereo visual odometry system based on point and line features. To be robust to illumination variation, we introduce learning-based feature extraction (SuperPoint) and matching (SuperGlue) and design a novel VO pipeline that includes feature tracking, triangulation, key-frame selection, and graph optimization. We also employ long line features in the environment to improve the accuracy of the system. Unlike traditional line-processing pipelines in visual odometry systems, we propose an illumination-robust line tracking method that uses point feature tracking and the distribution of point and line features to match lines. By accelerating the feature extraction and matching networks with the NVIDIA TensorRT toolkit, AirVO can run in real time on a GPU.
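To make the idea of matching lines through point tracks more concrete, here is a minimal Python sketch. It is not AirVO's implementation: the function names, the 3-pixel association distance, and the `min_shared` vote threshold are all assumptions chosen for illustration. The sketch associates each point with nearby line segments, then matches a line in the previous frame to the line in the current frame that shares the most matched points with it.

```python
import math
from collections import Counter


def point_to_segment_distance(p, a, b):
    """Euclidean distance from 2D point p to the segment with endpoints a and b."""
    (px, py), (ax, ay), (bx, by) = p, a, b
    dx, dy = bx - ax, by - ay
    length_sq = dx * dx + dy * dy
    # Clamp the projection parameter so the closest point stays on the segment.
    if length_sq == 0:
        t = 0.0
    else:
        t = max(0.0, min(1.0, ((px - ax) * dx + (py - ay) * dy) / length_sq))
    return math.hypot(px - (ax + t * dx), py - (ay + t * dy))


def assign_points_to_lines(points, lines, max_dist=3.0):
    """Map each line index to the set of point indices lying within max_dist pixels.

    max_dist is a hypothetical threshold, not a value taken from AirVO.
    """
    return {
        li: {pi for pi, p in enumerate(points)
             if point_to_segment_distance(p, a, b) <= max_dist}
        for li, (a, b) in enumerate(lines)
    }


def match_lines(prev_line_points, cur_line_points, point_matches, min_shared=2):
    """Match lines across two frames by voting with matched points.

    point_matches maps a point index in the previous frame to its matched
    point index in the current frame (for example, from a point matcher).
    """
    # Invert the current-frame association: point index -> lines it lies on.
    cur_point_to_lines = {}
    for lj, pts in cur_line_points.items():
        for pj in pts:
            cur_point_to_lines.setdefault(pj, []).append(lj)

    matches = {}
    for li, pts in prev_line_points.items():
        votes = Counter()
        for pi in pts:
            pj = point_matches.get(pi)
            if pj is not None:
                votes.update(cur_point_to_lines.get(pj, []))
        if votes:
            best_line, count = votes.most_common(1)[0]
            if count >= min_shared:
                matches[li] = best_line
    return matches
```

Because the vote relies only on point matches, the line association inherits whatever illumination robustness the point matcher provides. This sketch does not enforce one-to-one line matches; a real system would likely add that check.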

Authors: Kuan Xu, Yuefan Hao, Chen Wang, and Lihua Xie

Related Papers

AirVO: An Illumination-Robust Point-Line Visual Odometry, Kuan Xu, Yuefan Hao, Chen Wang and Lihua Xie, arXiv preprint arXiv:2212.07595, 2022.

If you use AirVO, please cite:

@article{xu2022airvo,
  title={AirVO: An Illumination-Robust Point-Line Visual Odometry},
  author={Xu, Kuan and Hao, Yuefan and Wang, Chen and Xie, Lihua},
  journal={arXiv preprint arXiv:2212.07595},
  year={2022}
}

Demos

UMA-VI dataset

The UMA-VI dataset contains many sequences in which images suddenly darken because the lights are turned off. Here are demos on two sequences.

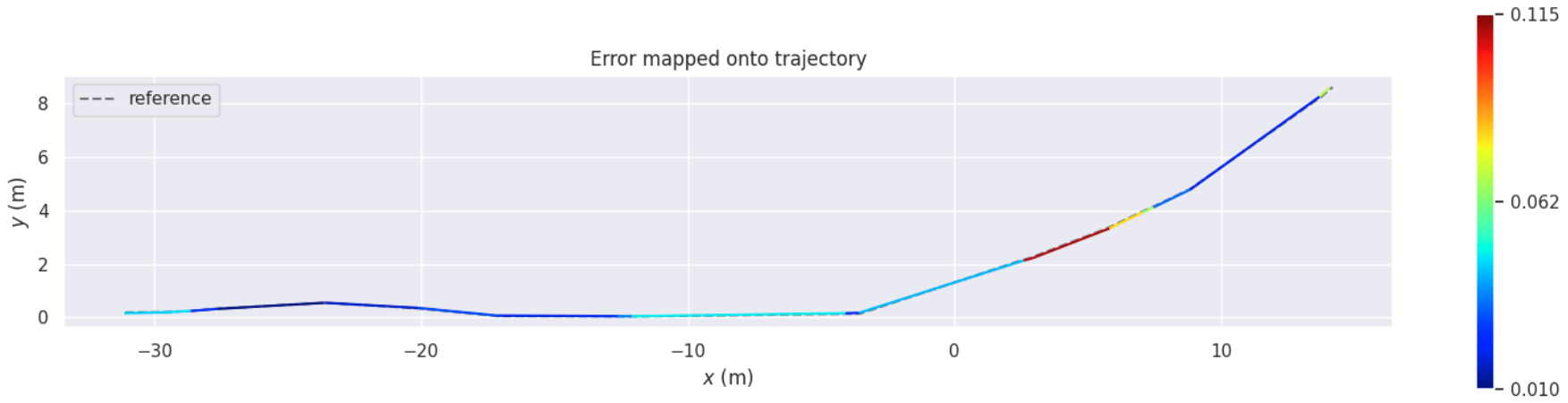

OIVIO dataset

The OIVIO dataset was collected in mines and tunnels with onboard illumination.

Live demo with RealSense camera

We also test AirVO on sequences collected with a RealSense D435i in an environment with continuously changing illumination.

Test Environment

Dependencies

- OpenCV 4.2

- Eigen 3

- G2O

- TensorRT 8.4

- CUDA 11.6

- Python

- onnx

- ROS Noetic

- Boost

- Glog

Docker (Recommended)

docker pull xukuanhit/air_slam:v1

docker run -it --env DISPLAY=$DISPLAY --volume /tmp/.X11-unix:/tmp/.X11-unix --privileged --runtime nvidia --gpus all --volume ${PWD}:/workspace --workdir /workspace --name air_slam xukuanhit/air_slam:v1 /bin/bash

Data

The data should be organized in the Autonomous Systems Lab (ASL) dataset format, as follows:

dataroot
├── cam0
│   └── data
│       ├── 00001.jpg
│       ├── 00002.jpg
│       ├── 00003.jpg
│       └── ......
└── cam1
    └── data
        ├── 00001.jpg
        ├── 00002.jpg
        ├── 00003.jpg
        └── ......
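Before launching AirVO, it can help to check that a dataset folder follows the layout above. The following Python sketch is not part of AirVO; the function names and the accepted image suffixes are assumptions. It also assumes that each stereo pair shares the same file name in `cam0/data` and `cam1/data`, as in the tree shown above.

```python
from pathlib import Path
from typing import List

# Assumption: images are stored as JPEG or PNG files.
IMAGE_SUFFIXES = {".jpg", ".jpeg", ".png"}


def list_images(data_dir: Path) -> List[str]:
    """Return the sorted image file names found directly inside data_dir."""
    return sorted(
        p.name for p in data_dir.iterdir()
        if p.is_file() and p.suffix.lower() in IMAGE_SUFFIXES
    )


def check_stereo_layout(dataroot) -> List[str]:
    """Return a list of layout problems; an empty list means the check passed."""
    root = Path(dataroot)
    problems = []
    names = {}
    for cam in ("cam0", "cam1"):
        data_dir = root / cam / "data"
        if not data_dir.is_dir():
            problems.append(f"missing directory: {cam}/data")
            continue
        names[cam] = list_images(data_dir)
        if not names[cam]:
            problems.append(f"no images found in {cam}/data")
    if len(names) == 2:
        # File names present in only one of the two camera folders.
        unmatched = sorted(set(names["cam0"]) ^ set(names["cam1"]))
        if unmatched:
            problems.append(
                f"{len(unmatched)} image(s) without a stereo partner, "
                f"e.g. {unmatched[0]}"
            )
    return problems
```

Calling `check_stereo_layout("/path/to/dataroot")` returns an empty list when both camera folders exist, contain images, and have matching file names; otherwise each returned string describes one problem.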

Build

cd ~/catkin_ws/src

git clone https://github.com/xukuanHIT/AirVO.git

cd ../

catkin_make

source ~/catkin_ws/devel/setup.bash

Run

OIVIO Dataset

roslaunch air_vo oivio.launch

UMA-VI Dataset

roslaunch air_vo uma_bumblebee_indoor.launch

EuRoC Dataset

roslaunch air_vo euroc.launch

Acknowledgements

We would like to thank the authors of SuperPoint and SuperGlue for making their projects public.